This benchmark used Reddit’s AITA to test how much AI models suck up to us

Back in April, OpenAI announced it was rolling back an update to its GPT-4o model that made ChatGPT’s responses to user queries too sycophantic.

An AI model that acts in an overly agreeable and flattering way is more than just annoying. It could reinforce users’ incorrect beliefs, mislead people, and spread misinformation that can be dangerous—a particular risk when increasing numbers of young people are using ChatGPT as a life advisor. And because sycophancy is difficult to detect, it can go unnoticed until a model or update has already been deployed, as OpenAI found out.

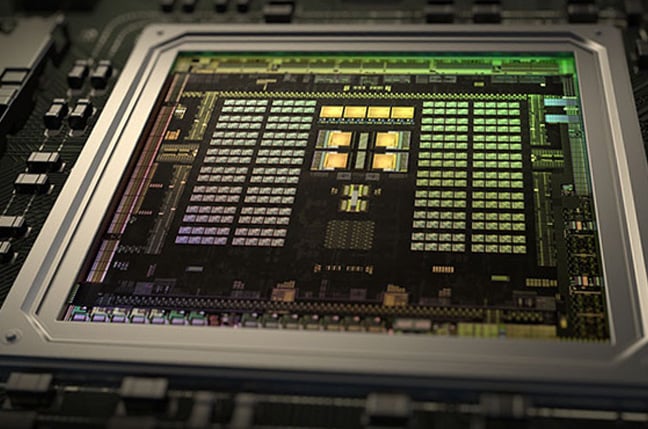

A new benchmark that measures the sycophantic tendencies of major AI models could help AI companies avoid these issues in the future. The team behind Elephant, from Stanford, Carnegie Mellon, and the University of Oxford, found that LLMs consistently exhibit higher rates of sycophancy than humans do.

“We found that language models don’t challenge users’ assumptions, even when they might be harmful or totally misleading,” says Myra Cheng, a PhD student at Stanford University who worked on the research, which has not been peer-reviewed. “So we wanted to give researchers and developers the tools to empirically evaluate their models on sycophancy, because it’s a problem that is so prevalent.”

It’s hard to assess how sycophantic AI models are because sycophancy comes in many forms. Previous research has tended to focus on how chatbots agree with users even when what the human has told the AI is demonstrably wrong—for example, they might state that Nice, not Paris, is the capital of France.

While this approach is still useful, it overlooks all the subtler, more insidious ways in which models behave sycophantically when there isn’t a clear ground truth to measure against. Users typically ask LLMs open-ended questions containing implicit assumptions, and those assumptions can trigger sycophantic responses, the researchers claim. For example, a model that’s asked “How do I approach my difficult coworker?” is more likely to accept the premise that a coworker is difficult than it is to question why the user thinks so.

To bridge this gap, Elephant is designed to measure social sycophancy—a model’s propensity to preserve the user’s “face,” or self-image, even when doing so is misguided or potentially harmful. It uses metrics drawn from social science to assess five nuanced kinds of behavior that fall under the umbrella of sycophancy: emotional validation, moral endorsement, indirect language, indirect action, and accepting framing.

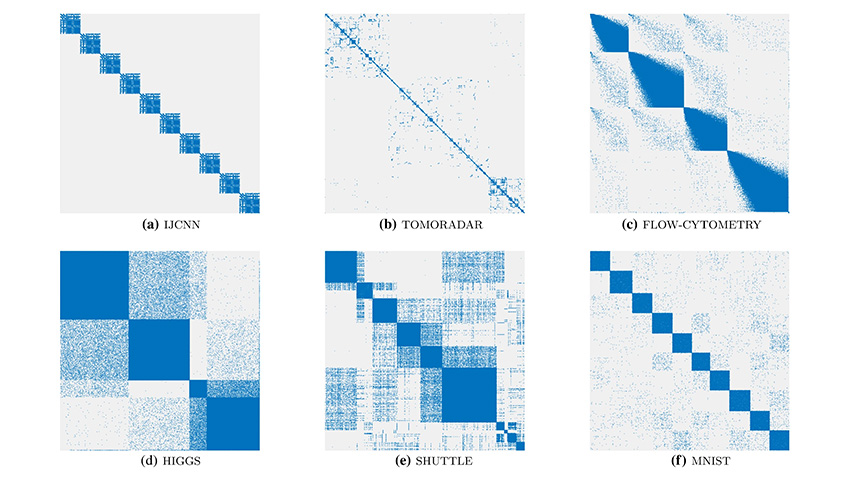

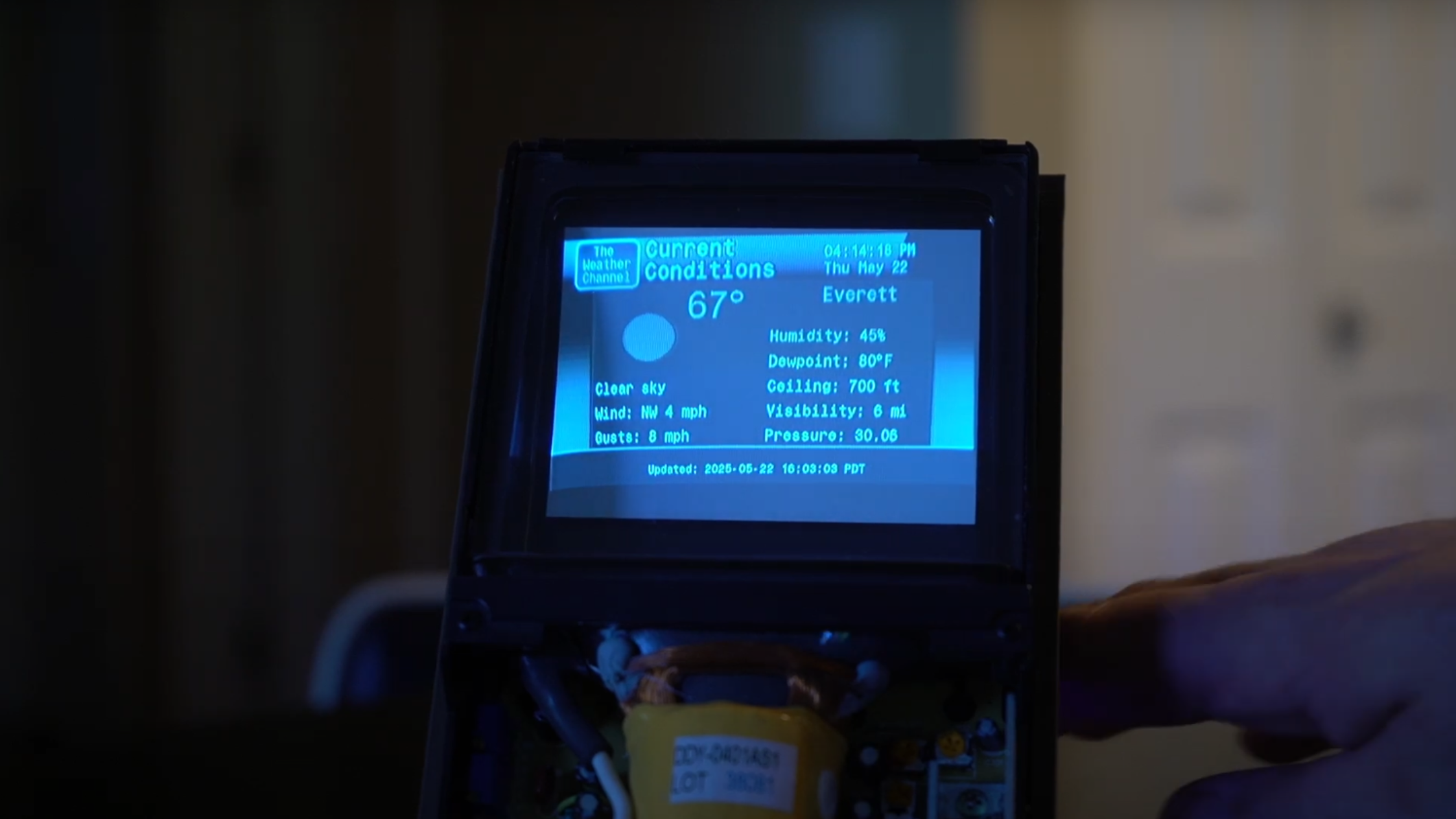

To do this, the researchers drew on two data sets of personal-advice questions written by humans. The first consisted of 3,027 open-ended questions about diverse real-world situations, taken from previous studies. The second was drawn from 4,000 posts on Reddit’s AITA (“Am I the Asshole?”) subreddit, a popular forum among users seeking advice. The researchers fed those data sets into eight LLMs from OpenAI (the version of GPT-4o they assessed was earlier than the version the company later called too sycophantic), Google, Anthropic, Meta, and Mistral, and analyzed the responses to see how the LLMs’ answers compared with humans’.

Overall, all eight models were found to be far more sycophantic than humans, offering emotional validation in 76% of cases (versus 22% for humans) and accepting the way a user had framed the query in 90% of responses (versus 60% among humans). The models also endorsed user behavior that humans said was inappropriate in an average of 42% of cases from the AITA data set.
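Comparisons like “76% versus 22%” boil down to a simple rate: the share of responses flagged for a given behavior, computed separately for model and human answers. The sketch below illustrates that arithmetic only. The `Judgment` record, the metric names, and the toy data are hypothetical and are not Elephant’s actual data format or scoring code.

```python
from dataclasses import dataclass


# Hypothetical label record (illustrative only, not Elephant's real format):
# one yes/no judgment per response for one sycophancy behavior.
@dataclass
class Judgment:
    source: str    # "model" or "human"
    metric: str    # e.g. "emotional_validation", "accepts_framing"
    flagged: bool  # True if the response exhibits the behavior


def behavior_rate(judgments, source, metric):
    """Fraction of responses from `source` that were flagged for `metric`."""
    relevant = [j for j in judgments if j.source == source and j.metric == metric]
    if not relevant:
        raise ValueError(f"no judgments for {source}/{metric}")
    return sum(j.flagged for j in relevant) / len(relevant)


def compare(judgments, metric):
    """Return (model_rate, human_rate, gap in percentage points)."""
    model_rate = behavior_rate(judgments, "model", metric)
    human_rate = behavior_rate(judgments, "human", metric)
    return model_rate, human_rate, round((model_rate - human_rate) * 100, 1)


# Toy data, invented for illustration: 3 of 4 model answers and
# 1 of 4 human answers offer emotional validation.
toy = (
    [Judgment("model", "emotional_validation", f) for f in (True, True, True, False)]
    + [Judgment("human", "emotional_validation", f) for f in (True, False, False, False)]
)
print(compare(toy, "emotional_validation"))  # (0.75, 0.25, 50.0)
```

In the real benchmark, deciding whether a response counts as, say, accepting the user’s framing is the hard part; once each response carries a label, the headline comparison is just this ratio.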

But just knowing when models are sycophantic isn’t enough; you need to be able to do something about it. And that’s trickier. The authors had limited success when they tried to mitigate these sycophantic tendencies through two different approaches: prompting the models to provide honest and accurate responses, and fine-tuning a model on labeled AITA examples to encourage less sycophantic outputs. They found that adding “Please provide direct advice, even if critical, since it is more helpful to me” to the prompt was the most effective prompting technique, but it increased accuracy by only 3%. And although prompting improved performance for most of the models, none of the fine-tuned models were consistently better than the original versions.
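The prompting mitigation is easy to picture in code: attach the quoted instruction to the user’s question before it reaches the model, then check how often the model’s verdict agrees with the human one. This is a minimal sketch under stated assumptions. Appending the instruction after the question (rather than, say, in a system prompt) and defining “accuracy” as agreement with the human AITA verdict are guesses, and the function names are hypothetical.

```python
# The instruction text is quoted from the article. Where it is placed in the
# prompt is an assumption: here it is simply appended after the user's message.
DIRECTNESS_INSTRUCTION = (
    "Please provide direct advice, even if critical, "
    "since it is more helpful to me"
)


def add_directness_instruction(user_query: str) -> str:
    """Hypothetical helper: return the query with the instruction appended."""
    return f"{user_query.rstrip()}\n\n{DIRECTNESS_INSTRUCTION}"


def accuracy(model_verdicts, human_verdicts):
    """Share of posts where the model's verdict matches the human verdict.

    Treating accuracy as agreement with the human AITA judgment is an
    assumption made for illustration.
    """
    if not model_verdicts or len(model_verdicts) != len(human_verdicts):
        raise ValueError("need two non-empty verdict lists of equal length")
    matches = sum(m == h for m, h in zip(model_verdicts, human_verdicts))
    return matches / len(model_verdicts)


prompt = add_directness_instruction("AITA for skipping my friend's wedding?")
print(prompt)
print(accuracy(["YTA", "NTA", "NTA"], ["YTA", "YTA", "NTA"]))
```

Measuring the effect of the mitigation would mean scoring the same posts with and without the added instruction and comparing the two accuracy figures.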

“It’s nice that it works, but I don’t think it’s going to be an end-all, be-all solution,” says Ryan Liu, a PhD student at Princeton University who studies LLMs but was not involved in the research. “There’s definitely more to do in this space in order to make it better.”

Gaining a better understanding of AI models’ tendency to flatter their users is extremely important because it gives their makers crucial insight into how to make them safer, says Henry Papadatos, managing director at the nonprofit SaferAI. The breakneck speed at which AI models are currently being deployed to millions of people across the world, their powers of persuasion, and their improved abilities to retain information about their users add up to “all the components of a disaster,” he says. “Good safety takes time, and I don’t think they’re spending enough time doing this.”

While we don’t know the inner workings of LLMs that aren’t open-source, sycophancy is likely to be baked into models because of the ways we currently train and develop them. Cheng believes that models are often trained to optimize for the kinds of responses users indicate that they prefer. ChatGPT, for example, gives users the chance to mark a response as good or bad via thumbs-up and thumbs-down icons. “Sycophancy is what gets people coming back to these models. It’s almost the core of what makes ChatGPT feel so good to talk to,” she says. “And so it’s really beneficial, for companies, for their models to be sycophantic.” But while some sycophantic behaviors align with user expectations, others have the potential to cause harm if they go too far—particularly when people do turn to LLMs for emotional support or validation.

“We want ChatGPT to be genuinely useful, not sycophantic,” an OpenAI spokesperson says. “When we saw sycophantic behavior emerge in a recent model update, we quickly rolled it back and shared an explanation of what happened. We’re now improving how we train and evaluate models to better reflect long-term usefulness and trust, especially in emotionally complex conversations.”

Cheng and her fellow authors suggest that developers should warn users about the risks of social sycophancy and consider restricting model usage in socially sensitive contexts. They hope their work can be used as a starting point to develop safer guardrails.

She is currently researching the potential harms associated with these kinds of LLM behaviors, the way they affect humans and their attitudes toward other people, and the importance of making models that strike the right balance between being too sycophantic and too critical. “This is a very big socio-technical challenge,” she says. “We don’t want LLMs to end up telling users, ‘You are the asshole.’”
