Data centers are at the heart of the AI revolution and here's how they are changing

As AI strains infrastructure, data centers evolve with modular design, renewable energy, and smart optimization.

As demand for AI and cloud computing soars, pundits are suggesting that the world is teetering on the edge of a data center crunch, in which capacity can’t keep up with the digital load. Those concerns, along with the hype, have sent vacancy rates plummeting: in Northern Virginia, the world's largest data center market, vacancy rates have fallen below 1%.

Echoing past fears of "peak oil" and "peak food," the spotlight now turns to "peak data." But rather than stall, the industry is evolving—adopting modular builds, renewable energy, and AI-optimized systems to redefine how tomorrow’s data centers will power an increasingly digital world.

1. Shift Toward Modular and Edge Data Centers

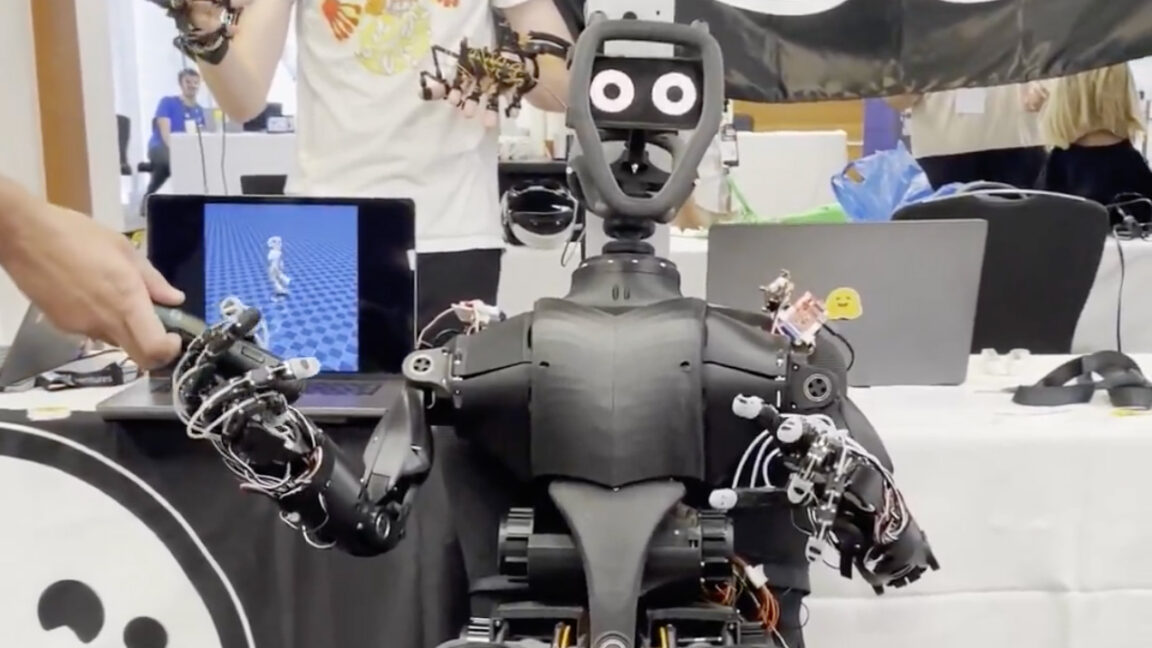

Future data centers will increasingly move away from relying on massive centralized facilities alone, embracing smaller, modular, and edge-based sites. The sector is already splitting into hyperscale data centers at one end and smaller, edge-oriented facilities at the other.

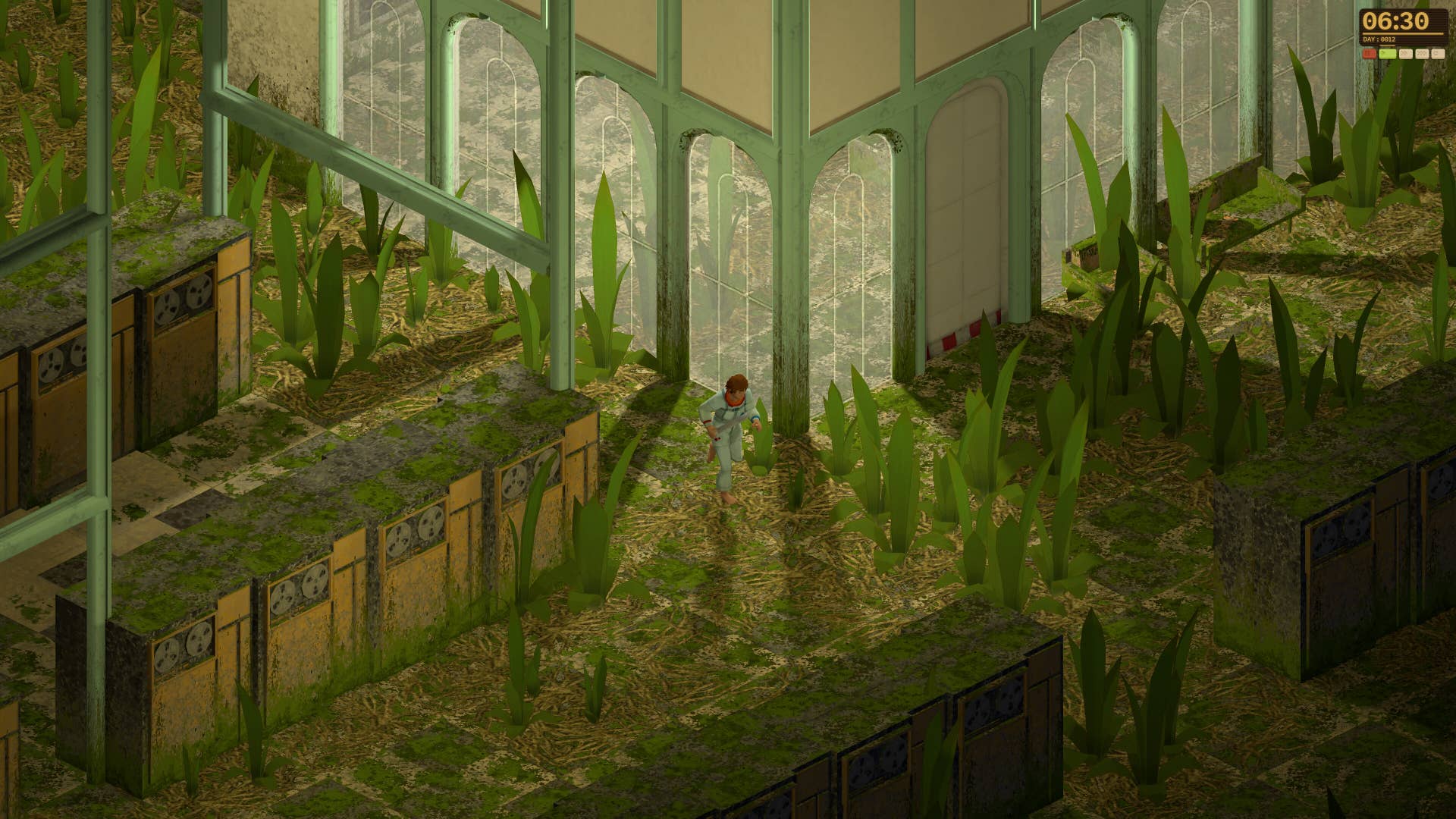

Smaller, modular, and edge data centers can be built in a few months and tend to be located closer to end users to reduce latency. Unlike hyperscale campuses, whose facilities often cover millions of square feet, these smaller data centers are sometimes built into repurposed buildings such as abandoned shopping malls, empty office towers, and disused factories, helping to regenerate former industrial brownfield areas.

These leaner centers can be deployed rapidly, sited closer to end users for lower latency, and tailored to specific workloads such as autonomous vehicles and augmented reality (AR).
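To give a rough sense of why proximity matters for latency, here is a minimal sketch of best-case round-trip propagation delay over optical fiber. It assumes the common rule of thumb that light travels through fiber at about 200,000 km/s, and it ignores routing, queuing, and processing delays. The function name and the two distances are illustrative assumptions, not figures from any real deployment.

```python
# Rule-of-thumb signal speed in optical fiber: about two-thirds of the
# speed of light in a vacuum. This is an approximation, not a measurement.
FIBER_SPEED_KM_PER_S = 200_000.0


def round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip propagation delay over fiber, in milliseconds.

    Only propagation is modeled; switching, queuing, and server processing
    time would add to this in practice.
    """
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000.0


if __name__ == "__main__":
    # Hypothetical distances: a nearby edge site vs. a distant campus.
    for label, km in [("edge site", 50), ("distant campus", 2000)]:
        print(f"{label:>15}: {round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions, the physical distance alone sets a floor on response time that no amount of server hardware can remove, which is the case for placing latency-sensitive workloads at the edge.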

2. Integration with Renewable and On-Site Power Sources

To address energy demands and grid constraints, future data centers will increasingly be co-located with power generation facilities, such as nuclear or renewable plants. This reduces reliance on strained grid infrastructure and improves energy stability. Some companies are investing in nuclear power, which provides a massive, always-on supply that is also free of carbon emissions. Modular reactors are being considered as a way to overcome grid bottlenecks, long wait times for power delivery, and local utility limits.

Similarly, data centers will increasingly be built in areas where the climate reduces operational strain. Lower cooling costs and access to water enable the use of energy-efficient liquid-cooling systems instead of air cooling. Expect more data centers to appear in places like Scandinavia and the Pacific Northwest.

3. Operational Optimization

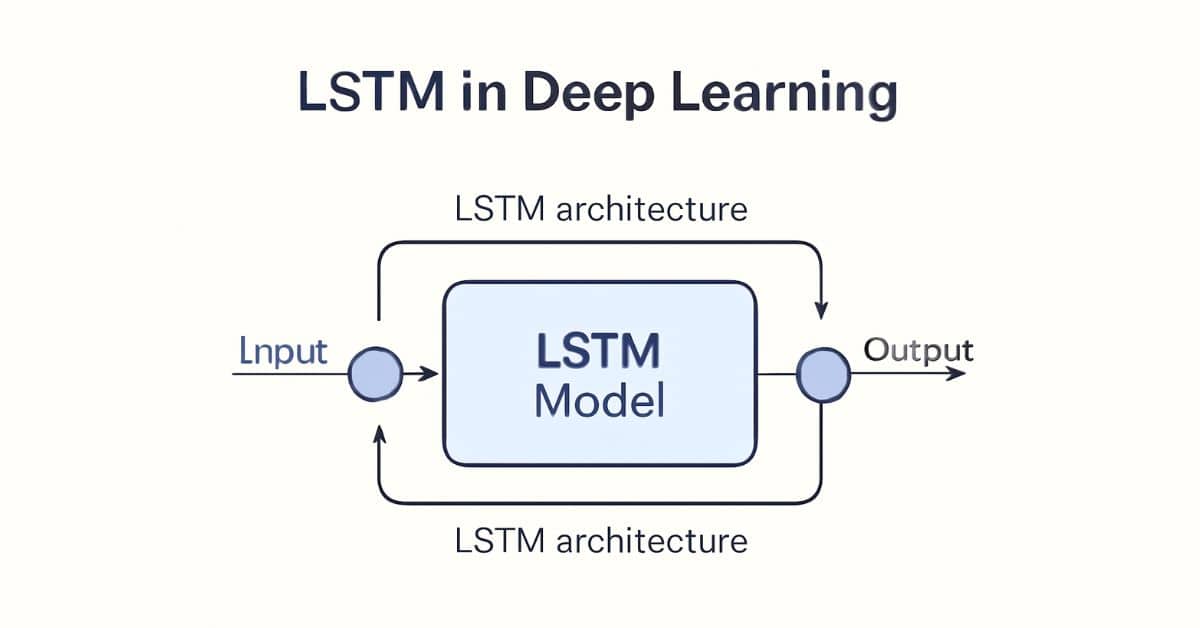

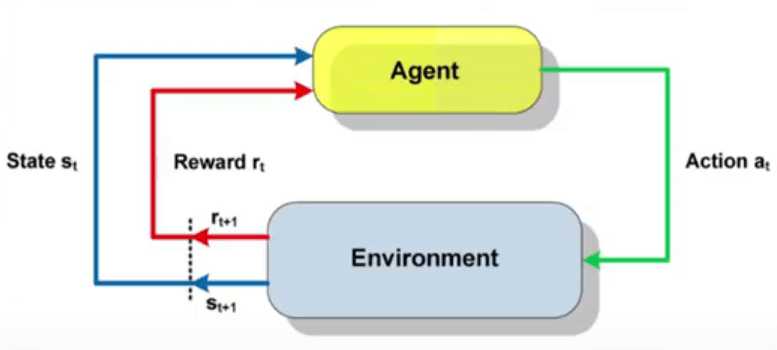

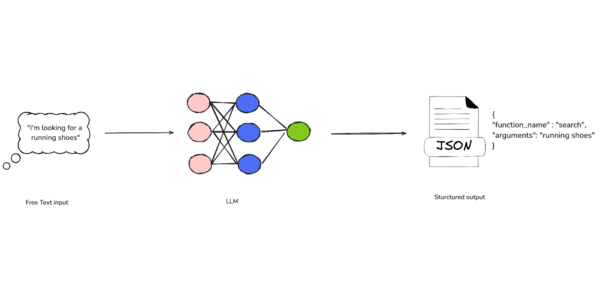

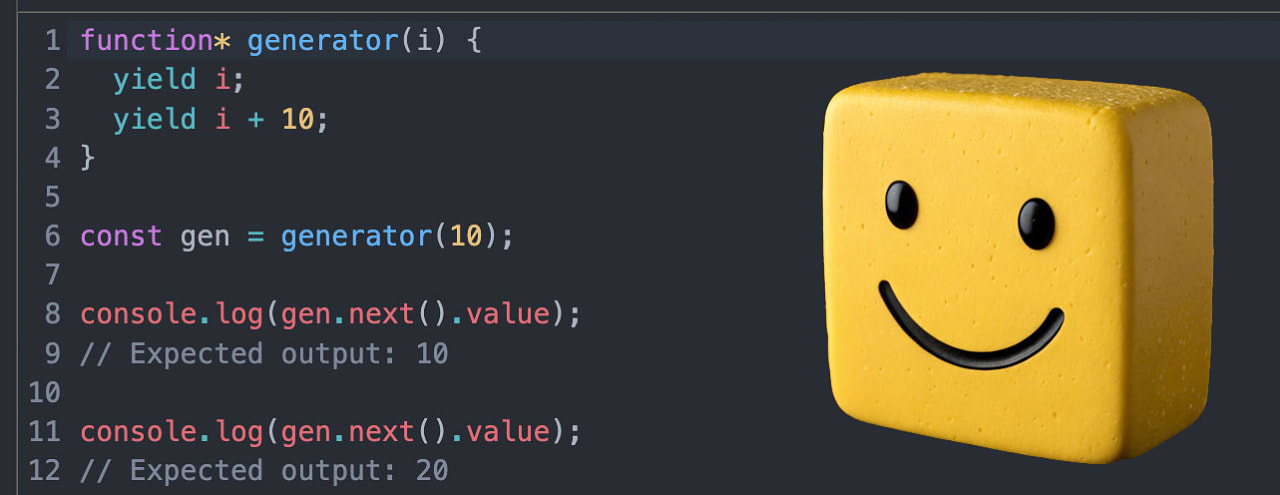

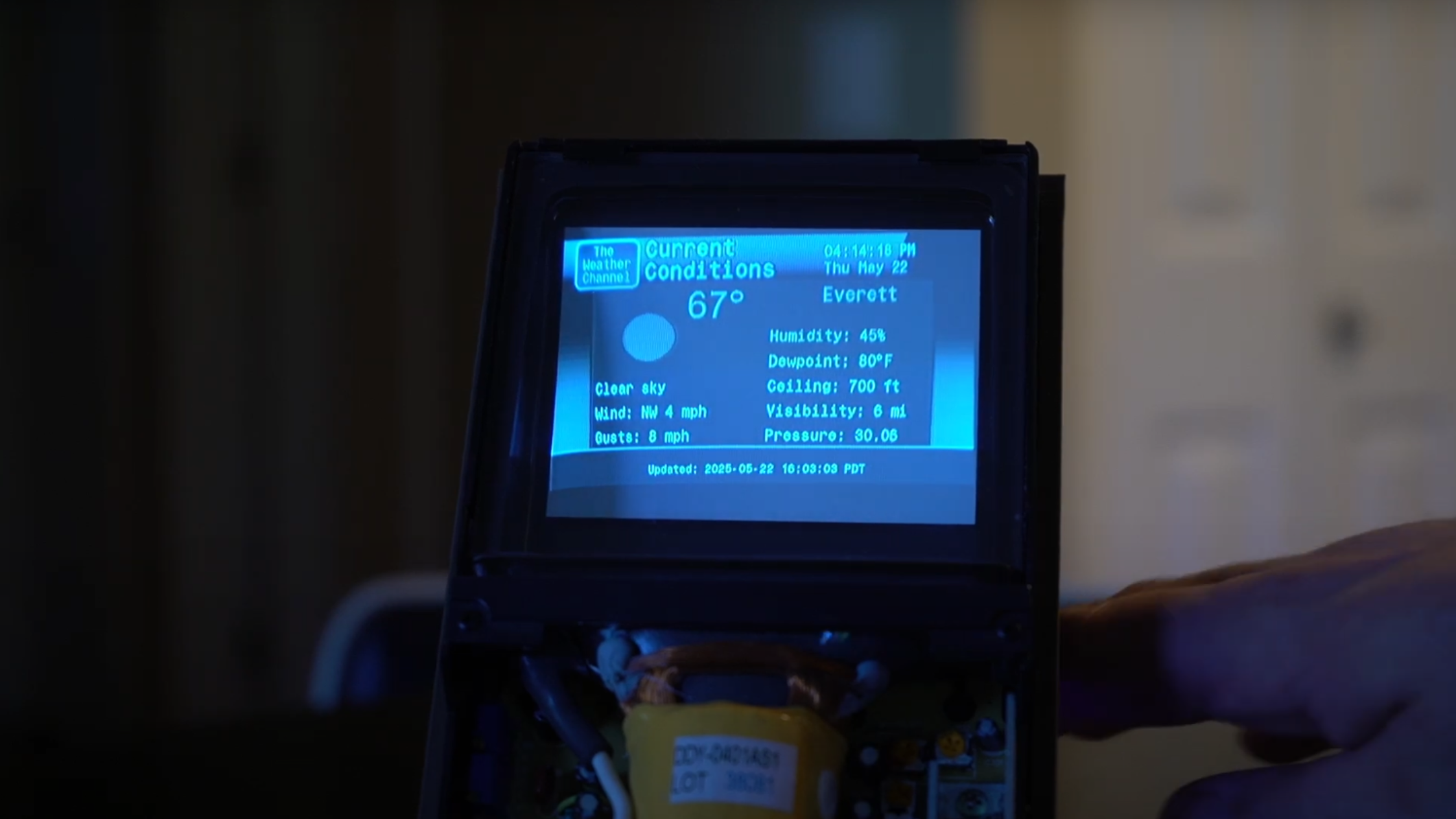

Artificial intelligence will play a major role in managing and optimizing data center operations, particularly for cooling and energy use. For instance, reinforcement learning algorithms are being used to cut energy use by optimizing cooling systems, achieving up to 21% energy savings.

Similarly, fixes such as replacing legacy servers with more energy-efficient machines that use newer chips or improved thermal design can significantly expand compute capacity without requiring new premises.

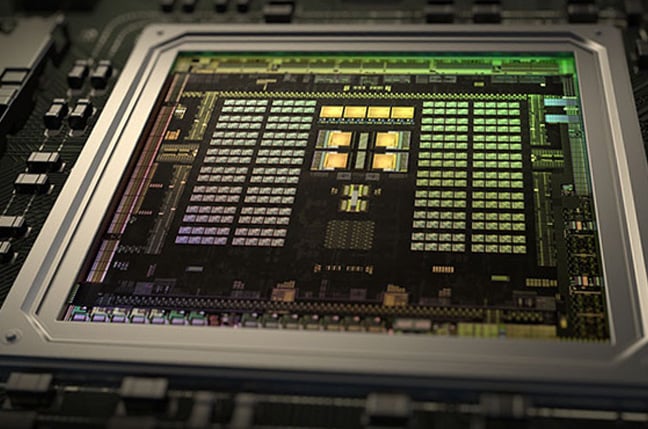

4. Hardware Density and Efficiency Improvements

Instead of only building new facilities, future capacity will be expanded by refreshing hardware with newer, denser, and more energy-efficient servers. This allows for more compute power in the same footprint, enabling quick scaling to meet surges in demand, particularly for AI workloads. These power-hungry centers are also putting a strain on electricity grids.
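The arithmetic behind a hardware refresh can be sketched in a few lines. The example below assumes that power, rather than floor space, is the binding constraint on a facility, and it uses made-up performance-per-kilowatt figures purely for illustration. The function name and all numbers are hypothetical.

```python
def capacity_after_refresh(power_budget_kw: float,
                           old_perf_per_kw: float,
                           new_perf_per_kw: float) -> dict:
    """Compare total compute under a fixed power budget before and after
    swapping in more efficient servers.

    "perf" is an abstract unit of compute (for example, jobs per hour);
    the inputs are illustrative assumptions, not vendor data.
    """
    if min(power_budget_kw, old_perf_per_kw, new_perf_per_kw) <= 0:
        raise ValueError("all inputs must be positive")
    old_total = power_budget_kw * old_perf_per_kw
    new_total = power_budget_kw * new_perf_per_kw
    return {"old": old_total, "new": new_total, "gain": new_total / old_total}


if __name__ == "__main__":
    # Hypothetical facility: 1,000 kW budget, servers going from
    # 10 to 25 units of compute per kW.
    result = capacity_after_refresh(1000, 10, 25)
    print(f"Before: {result['old']:.0f}, after: {result['new']:.0f}, "
          f"gain: {result['gain']:.1f}x")
```

In this simplified model, the gain equals the ratio of new to old efficiency, so the same building and the same grid connection deliver more compute.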

Future data centers will leverage new solutions such as load shifting to optimize energy efficiency. Google is already partnering with PJM Interconnection, the largest electrical grid operator in North America, to leverage AI to automate tasks such as viability assessments of connection applications, thus enhancing grid efficiency.

Grid problems typically stem not from a lack of energy but from insufficient transmission capacity.
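Load shifting can be illustrated with a simple greedy scheduler: given an hourly forecast of grid load, a deferrable job (such as a batch training run that can be paused) is placed in the hours when the grid is least stressed. This is a minimal sketch under the assumption that the job is interruptible and that a reliable forecast exists. The function name and the forecast values are hypothetical, and real schedulers would weigh many more factors.

```python
from typing import List, Sequence


def shift_load(grid_load_mw: Sequence[float], hours_needed: int) -> List[int]:
    """Pick the hours with the lowest forecast grid load for a deferrable job.

    Returns the chosen hour indices in chronological order. Ties go to the
    earlier hour because Python's sort is stable.
    """
    if not 0 <= hours_needed <= len(grid_load_mw):
        raise ValueError("hours_needed must be between 0 and the forecast length")
    # Rank hour indices from least to most loaded, then keep the best ones.
    ranked = sorted(range(len(grid_load_mw)), key=lambda h: grid_load_mw[h])
    return sorted(ranked[:hours_needed])


if __name__ == "__main__":
    # Hypothetical 8-hour forecast of regional grid load, in megawatts.
    forecast = [70, 65, 60, 58, 62, 75, 90, 95]
    print("Run the job during hours:", shift_load(forecast, 3))
```

The design choice here is deliberately naive: it treats every hour independently, which suits jobs that can stop and resume, while a job that must run without interruption would need a sliding-window search instead.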

Fortunately, data centers usually run well below full capacity, specifically to accommodate future growth. This headroom will prove useful as facilities absorb unexpected traffic spikes and rapid scaling needs without requiring new construction.

5. Geographically and Politically Responsive Siting

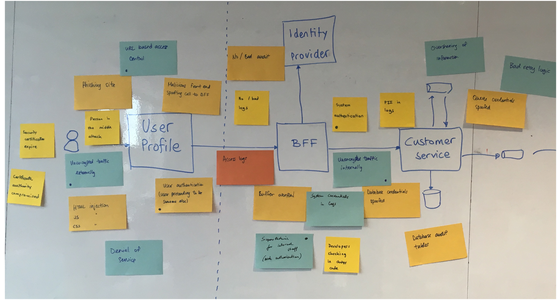

Future data center locations will be chosen based on climate efficiency, grid access, and political and zoning policies, but also on the availability of an AI-skilled workforce. Data centers aren’t just server rooms: they’re among the most complex IT infrastructure projects in existence, requiring seamless power, cooling, high-speed networking, and top-tier security.

Building them involves a wide range of experts, from engineers to logistics teams, coordinating everything from semiconductors to industrial HVAC systems. Data centers will thus drive up demand for engineers specializing in high-performance networking, thermal management, power redundancy, and advanced cooling.

Looking to the future

It’s clear that the recent surge in demand for infrastructure to power GPUs and high-performance computing is being driven primarily by AI. Training massive models like OpenAI’s GPT-4 or Google’s Gemini requires immense computational resources, consuming GPU cycles at an astonishing rate. These training runs often last weeks and involve thousands of specialized chips, drawing heavily on power and cooling infrastructure.

But the story doesn’t end there: even once a model is trained, running it in real time to generate responses, make predictions, or process user inputs (so-called AI inference) adds a new layer of energy demand. While not as intense as training, inference must happen at scale and with low latency, which means it places a steady, ongoing load on cloud infrastructure.

However, here’s a nuance that’s frequently glossed over in the hype: AI workloads don’t scale in a straightforward, linear fashion. Doubling the number of GPUs or increasing the size of a model will not always lead to proportionally better results. Experience has shown that as models grow, performance gains may taper off or new challenges may emerge, such as brittleness, hallucination, or the need for more careful fine-tuning.
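One textbook way to see why doubling hardware does not double results is Amdahl's law, which caps the speedup of a workload by the share of it that cannot be parallelized. The sketch below is an illustration swapped in for this purpose, not a model of any specific AI training run: it covers only throughput on a fixed workload, not model quality, and the 95% parallel fraction is an assumed figure.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Theoretical speedup from Amdahl's law: 1 / ((1 - p) + p / n).

    parallel_fraction (p) is the share of the work that can be spread
    across workers; the remainder runs serially no matter how many
    workers (for example, GPUs) are added.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


if __name__ == "__main__":
    p = 0.95  # assumed: 95% of the workload parallelizes cleanly
    previous = None
    for gpus in (8, 16, 32, 64):
        speedup = amdahl_speedup(p, gpus)
        note = "" if previous is None else f" ({speedup / previous:.2f}x vs. previous row)"
        print(f"{gpus:>3} GPUs: {speedup:5.2f}x speedup{note}")
        previous = speedup
```

Under this assumption, each doubling of GPUs yields a smaller relative gain than the last, and the speedup can never exceed 20x however many GPUs are added, because the 5% serial portion remains.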

In short

The current AI boom is real, but it may not be boundless. Understanding the limits of scale and the nonlinear nature of progress is crucial for policymakers, investors, and businesses alike as they plan for data center demand shaped by AI’s exponential growth.

The data center industry therefore stands at a pivotal crossroads. Far from buckling under the weight of AI tools and cloud-driven demand, however, it’s adapting at speed through smarter design, greener power, and more efficient hardware.

From modular builds in repurposed buildings to AI-optimized cooling systems and co-location with power plants, the future of data infrastructure will be leaner, more distributed, and strategically sited. As data becomes the world’s most valuable resource, the facilities that store, process, and protect it are becoming smarter, greener, and more essential than ever.


This article was produced as part of TechRadarPro's Expert Insights channel where we feature the best and brightest minds in the technology industry today. The views expressed here are those of the author and are not necessarily those of TechRadarPro or Future plc. If you are interested in contributing find out more here: https://www.techradar.com/news/submit-your-story-to-techradar-pro
