Building Production-Ready Custom AI Agents for Enterprise Workflows with Monitoring, Orchestration, and Scalability

The post Building Production-Ready Custom AI Agents for Enterprise Workflows with Monitoring, Orchestration, and Scalability appeared first on MarkTechPost.

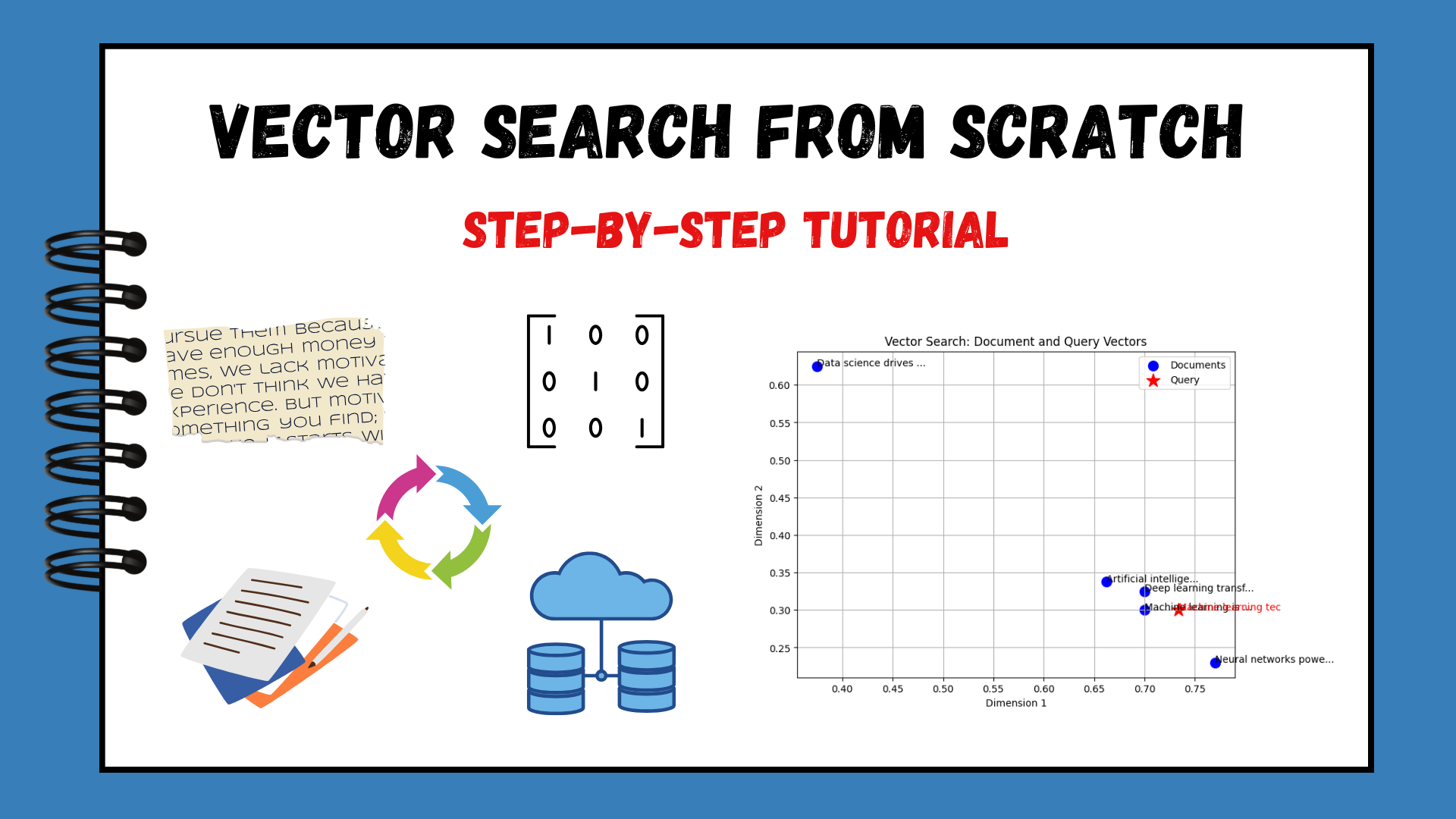

In this tutorial, we walk you through the design and implementation of a custom agent framework built on PyTorch and key Python tooling, ranging from web intelligence and data science modules to advanced code generators. We’ll learn how to wrap core functionalities in monitored CustomTool classes, orchestrate multiple agents with tailored system prompts, and define end-to-end workflows that automate tasks like competitive website analysis and data-processing pipelines. Along the way, we demonstrate real-world examples, complete with retry logic, logging, and performance metrics, so you can confidently deploy and scale these agents within your organization’s existing infrastructure.

    !pip install -q torch transformers datasets pillow requests beautifulsoup4 pandas numpy scikit-learn openai

    import os, json, asyncio, threading, time
    import torch, pandas as pd, numpy as np
    from PIL import Image
    import requests
    from io import BytesIO, StringIO
    from concurrent.futures import ThreadPoolExecutor
    from functools import wraps, lru_cache
    from typing import Dict, List, Optional, Any, Callable, Union
    import logging
    from dataclasses import dataclass
    import inspect

    logging.basicConfig(level=logging.INFO)
    logger = logging.getLogger(__name__)

    API_TIMEOUT = 15
    MAX_RETRIES = 3

We begin by installing and importing all the core libraries, including PyTorch and Transformers, as well as data handling libraries such as pandas and NumPy, and utilities like BeautifulSoup for web scraping and scikit-learn for machine learning. We configure a standardized logging setup to capture information and error messages, and define global constants for API timeouts and retry limits, ensuring our tools behave predictably in production.
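The retry limit defined above can be put to work with a small decorator. The following is a minimal sketch, not the tutorial's own implementation: `with_retries`, `base_delay`, the injectable `sleep` parameter, and `flaky_fetch` are hypothetical names introduced here for illustration, and exponential backoff is an assumed policy.

```python
import functools
import logging
import time

logger = logging.getLogger(__name__)

MAX_RETRIES = 3  # same value as the constant defined in the setup code


def with_retries(max_retries=MAX_RETRIES, base_delay=0.5, sleep=time.sleep):
    """Hypothetical helper: retry a function with exponential backoff."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_retries + 1):
                try:
                    return func(*args, **kwargs)
                except Exception as exc:
                    if attempt == max_retries:
                        raise  # out of attempts: surface the last error
                    delay = base_delay * 2 ** (attempt - 1)
                    logger.warning("Attempt %d/%d failed (%s); retrying in %.1fs",
                                   attempt, max_retries, exc, delay)
                    sleep(delay)
        return wrapper
    return decorator


# Usage: a stand-in for a flaky network call that fails twice, then succeeds.
attempts = {"count": 0}


@with_retries(sleep=lambda _: None)  # skip real sleeping in this demo
def flaky_fetch():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"


result = flaky_fetch()
```

In a real tool, the wrapped function would typically make the HTTP call itself, for example `requests.get(url, timeout=API_TIMEOUT)`, so that each attempt is bounded by the timeout constant.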

    @dataclass
    class ToolResult:
        """Standardized tool result structure"""
        success: bool
        data: Any
        error: Optional[str] = None
        execution_time: float = 0.0
        metadata: Optional[Dict[str, Any]] = None

    class CustomTool:
        """Base class for custom tools"""

        def __init__(self, name: str, description: str, func: Callable):
            self.name = name
            self.description = description
            self.func = func
            self.calls = 0
            self.avg_execution_time = 0.0
            self.error_rate = 0.0

        def execute(self, *args, **kwargs) -> ToolResult:
            """Execute tool with monitoring"""
            start_time = time.time()
            self.calls += 1

            try:
                result = self.func(*args, **kwargs)
                execution_time = time.time() - start_time
                # Running mean of execution time over all calls so far
                self.avg_execution_time = ((self.avg_execution_time * (self.calls - 1)) + execution_time) / self.calls
                # A success contributes 0 to the running error rate; without this
                # update the rate would never decrease after a failure
                self.error_rate = (self.error_rate * (self.calls - 1)) / self.calls
                return ToolResult(
                    success=True,
                    data=result,
                    execution_time=execution_time,
                    metadata={'tool_name': self.name, 'call_count': self.calls}
                )
            except Exception as e:
                execution_time = time.time() - start_time
                # Failed calls count toward the average runtime as well
                self.avg_execution_time = ((self.avg_execution_time * (self.calls - 1)) + execution_time) / self.calls
                # A failure contributes 1 to the running error rate
                self.error_rate = (self.error_rate * (self.calls - 1) + 1) / self.calls
                logger.error(f"Tool {self.name} failed: {str(e)}")
                return ToolResult(
                    success=False,
                    data=None,
                    error=str(e),
                    execution_time=execution_time,
                    metadata={'tool_name': self.name, 'call_count': self.calls}
                )

We define a ToolResult dataclass to encapsulate every execution’s outcome: whether it succeeded, how long it took, any returned data, and error details if it failed. Our CustomTool base class then wraps individual functions with a unified execute method that tracks call counts, measures execution time, maintains a running average runtime and error rate, and logs any errors. By standardizing tool results and performance metrics this way, we ensure consistency and observability across all our custom utilities.
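The average-runtime update inside execute is an incremental mean: it folds each new measurement into the previous average without storing the full history. The sketch below illustrates that arithmetic on made-up numbers. `update_running_mean` is a hypothetical helper, the timings and outcomes are invented sample values, and treating every call as contributing 0 (success) or 1 (failure) to the error rate is an assumption of this sketch.

```python
def update_running_mean(mean, count, value):
    """Return the mean of `count` values, given the mean of the
    first `count - 1` values and the newest value."""
    return (mean * (count - 1) + value) / count


# Made-up sample data: three calls with their durations and outcomes.
timings = [0.20, 0.40, 0.60]       # seconds per call
outcomes = [True, False, True]     # True = success, False = failure

avg_time = 0.0
error_rate = 0.0
for n, (elapsed, ok) in enumerate(zip(timings, outcomes), start=1):
    avg_time = update_running_mean(avg_time, n, elapsed)
    # Each call contributes 0.0 on success and 1.0 on failure.
    error_rate = update_running_mean(error_rate, n, 0.0 if ok else 1.0)

# The incremental results agree with the batch computations.
batch_avg = sum(timings) / len(timings)
batch_error_rate = outcomes.count(False) / len(outcomes)
```

Because only the previous mean and the call count are kept, the per-tool bookkeeping stays constant in memory no matter how many calls a tool serves.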

    import re

    class CustomAgent:
        """Custom agent implementation with tool management"""

        def __init__(self, name: str, system_prompt: str = "", max_iterations: int = 5):
            self.name = name
            self.system_prompt = system_prompt
            self.max_iterations = max_iterations
            self.tools = {}
            self.conversation_history = []
            self.performance_metrics = {}

        def add_tool(self, tool: CustomTool):
            """Add a tool to the agent"""
            self.tools[tool.name] = tool

        def run(self, task: str) -> Dict[str, Any]:
            """Execute a task using available tools"""
            logger.info(f"Agent {self.name} executing task: {task}")
            task_lower = task.lower()
            results = []

            # 'analyze' appears in both the web and data keyword lists, so the web
            # branch is taken only when the task actually contains a URL; otherwise
            # data tasks such as "analyze this data" would never reach their branch
            urls = re.findall(r'https?://[^\s]+', task)

            if urls and any(keyword in task_lower for keyword in ['analyze', 'website', 'url', 'web']):
                if 'advanced_web_intelligence' in self.tools:
                    result = self.tools['advanced_web_intelligence'].execute(urls[0])
                    results.append(result)

            elif any(keyword in task_lower for keyword in ['data', 'analyze', 'stats', 'csv']):
                if 'advanced_data_science_toolkit' in self.tools:
                    # Only inline CSV with this exact header is recognized
                    if 'name,age,salary' in task:
                        data_start = task.find('name,age,salary')
                        data_part = task[data_start:]
                        result = self.tools['advanced_data_science_toolkit'].execute(data_part, 'stats')
                        results.append(result)

            elif any(keyword in task_lower for keyword in ['generate', 'code', 'api', 'client']):
                if 'advanced_code_generator' in self.tools:
                    result = self.tools['advanced_code_generator'].execute(task)
                    results.append(result)

            return {
                'agent': self.name,
                'task': task,
                'results': [r.data if r.success else {'error': r.error} for r in results],
                'execution_summary': {
                    'tools_used': len(results),
                    'success_rate': sum(1 for r in results if r.success) / len(results) if results else 0,
                    'total_time': sum(r.execution_time for r in results)
                }
            }

We encapsulate our AI logic in a CustomAgent class that holds a set of tools, a system prompt, and a conversation history, then routes each incoming task to a tool based on simple keyword matching. In the run() method, we log the task, select the appropriate tool (web intelligence, data analysis, or code generation), execute it, and aggregate the results into a standardized response that includes the success rate and total execution time. The web branch uses the first URL found in the task, and the data branch expects inline CSV that starts with a name,age,salary header. This design lets us extend agents by adding new tools and keeps our orchestration both transparent and measurable.
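As the number of tools grows, the keyword checks can be moved out of run() and into data. The following is a minimal sketch of that idea under stated assumptions, not the tutorial's own routing: `ROUTES` and `route` are hypothetical names, and the keyword lists are illustrative rather than copied from the agent. Rules are tried in order and the first match wins.

```python
from typing import Container, List, Optional, Tuple

# Hypothetical routing table: (tool name, trigger keywords), checked in order.
ROUTES: List[Tuple[str, List[str]]] = [
    ("advanced_web_intelligence", ["website", "url", "web", "http"]),
    ("advanced_data_science_toolkit", ["data", "stats", "csv"]),
    ("advanced_code_generator", ["generate", "code", "api", "client"]),
]


def route(task: str, available_tools: Container[str]) -> Optional[str]:
    """Return the name of the first registered tool whose keywords
    occur in the task, or None if no rule matches."""
    task_lower = task.lower()
    for tool_name, keywords in ROUTES:
        if tool_name in available_tools and any(k in task_lower for k in keywords):
            return tool_name
    return None


# Usage with a stand-in for the agent's tool registry.
registered = {
    "advanced_web_intelligence",
    "advanced_data_science_toolkit",
    "advanced_code_generator",
}

web_choice = route("Analyze the website https://example.com", registered)
data_choice = route("Compute stats for this csv", registered)
code_choice = route("Generate a Python API client", registered)
no_choice = route("Say hello", registered)
```

With this layout, supporting a new tool means appending one entry to the table. Matching is by plain substring, so short keywords can match inside longer words; a production router would likely need word-boundary matching or a more robust intent classifier.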
