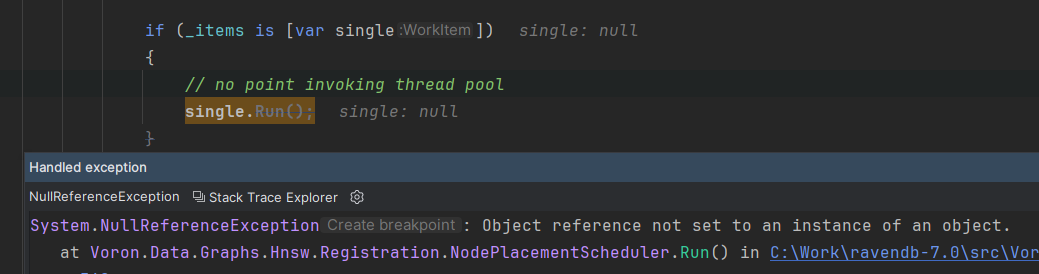

AI-Generated Code Creates Major Security Risk Through 'Package Hallucinations'

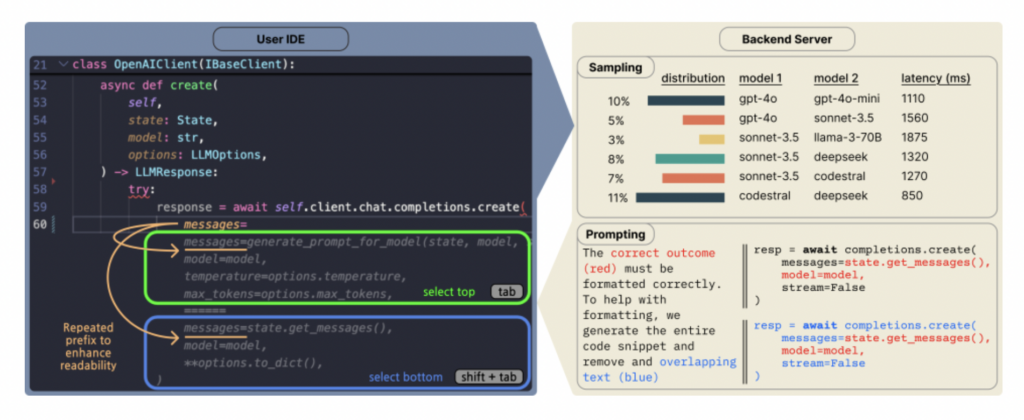

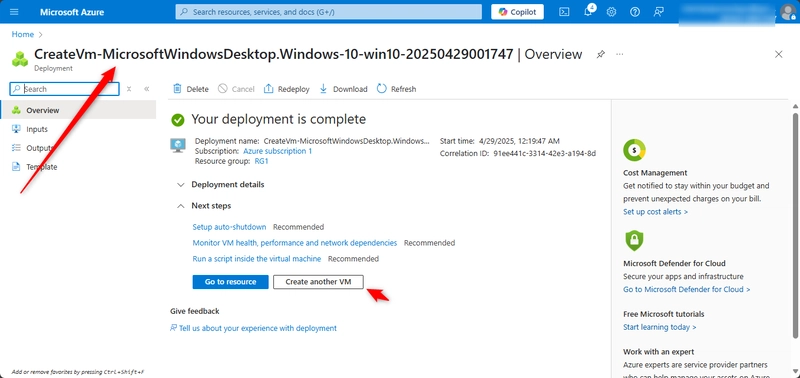

A new study [PDF] reveals AI-generated code frequently references non-existent third-party libraries, creating opportunities for supply-chain attacks. Researchers analyzed 576,000 code samples from 16 popular large language models and found 19.7% of package dependencies -- 440,445 in total -- were "hallucinated." These non-existent dependencies exacerbate dependency confusion attacks, where malicious packages with identical names to legitimate ones can infiltrate software. Open source models hallucinated at nearly 22%, compared to 5% for commercial models. "Once the attacker publishes a package under the hallucinated name, containing some malicious code, they rely on the model suggesting that name to unsuspecting users," said lead researcher Joseph Spracklen. Alarmingly, 43% of hallucinations repeated across multiple queries, making them predictable targets. Read more of this story at Slashdot.
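One way to blunt this kind of attack is to refuse to install any dependency that has not already been vetted, instead of trusting a name because a model suggested it. The sketch below is a minimal illustration of that idea and is not taken from the study: `KNOWN_PACKAGES`, `find_unvetted`, and the package name `fastjsonx` are hypothetical, and a real allowlist would more likely come from a lockfile or an internal registry mirror.

```python
import re

# Hypothetical allowlist of packages a team has already reviewed.
KNOWN_PACKAGES = {"requests", "numpy", "flask"}


def normalize(name: str) -> str:
    """Normalize a package name in the style of PEP 503:
    lowercase, with runs of '-', '_' and '.' collapsed to a single '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def find_unvetted(requirements: list[str], known: set[str]) -> list[str]:
    """Return the names in a requirements-style list that are not on the allowlist."""
    known_norm = {normalize(k) for k in known}
    unvetted = []
    for line in requirements:
        # Drop trailing comments and surrounding whitespace.
        line = line.split("#", 1)[0].strip()
        if not line:
            continue
        # Keep only the name: cut at the first version specifier, extra, or marker.
        name = re.split(r"[\s<>=!~;\[]", line, maxsplit=1)[0]
        if normalize(name) not in known_norm:
            unvetted.append(name)
    return unvetted


if __name__ == "__main__":
    # "fastjsonx" is a made-up name standing in for a hallucinated dependency.
    suggested = ["requests>=2.0", "Flask", "fastjsonx==1.2  # suggested by model", "numpy"]
    print(find_unvetted(suggested, KNOWN_PACKAGES))
```

A name flagged this way is not necessarily malicious or non-existent; it only means a person should check the registry entry, its maintainers, and its history before installing it.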

Read more of this story at Slashdot.

![[The AI Show Episode 145]: OpenAI Releases o3 and o4-mini, AI Is Causing “Quiet Layoffs,” Executive Order on Youth AI Education & GPT-4o’s Controversial Update](https://www.marketingaiinstitute.com/hubfs/ep%20145%20cover.png)

_NicoElNino_Alamy.jpg?width=1280&auto=webp&quality=80&disable=upscale#)

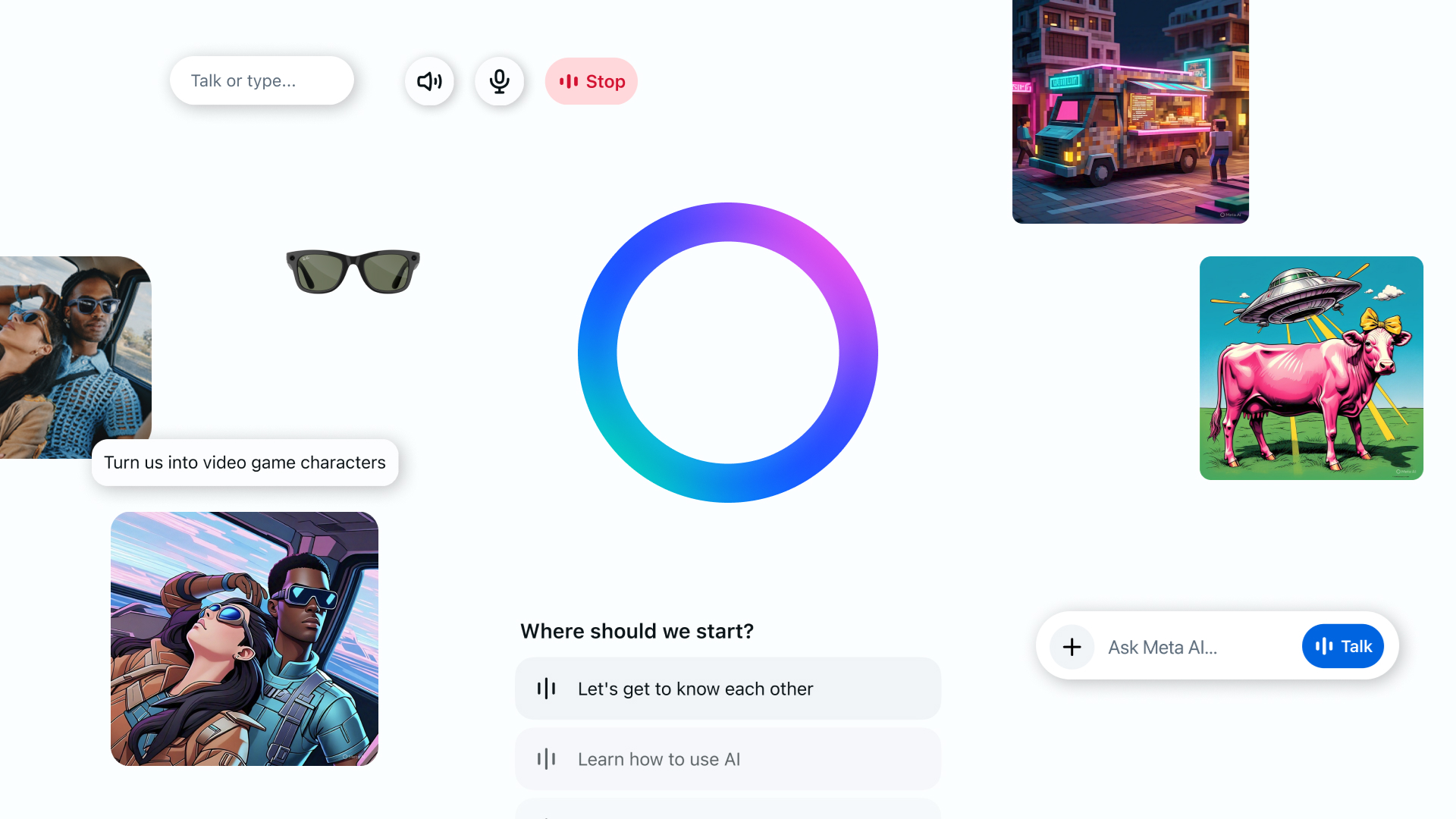

![Standalone Meta AI App Released for iPhone [Download]](https://www.iclarified.com/images/news/97157/97157/97157-640.jpg)