When AI Bug Hunters Mess with Curl: A Maintainer’s Funny, Frustrating Story

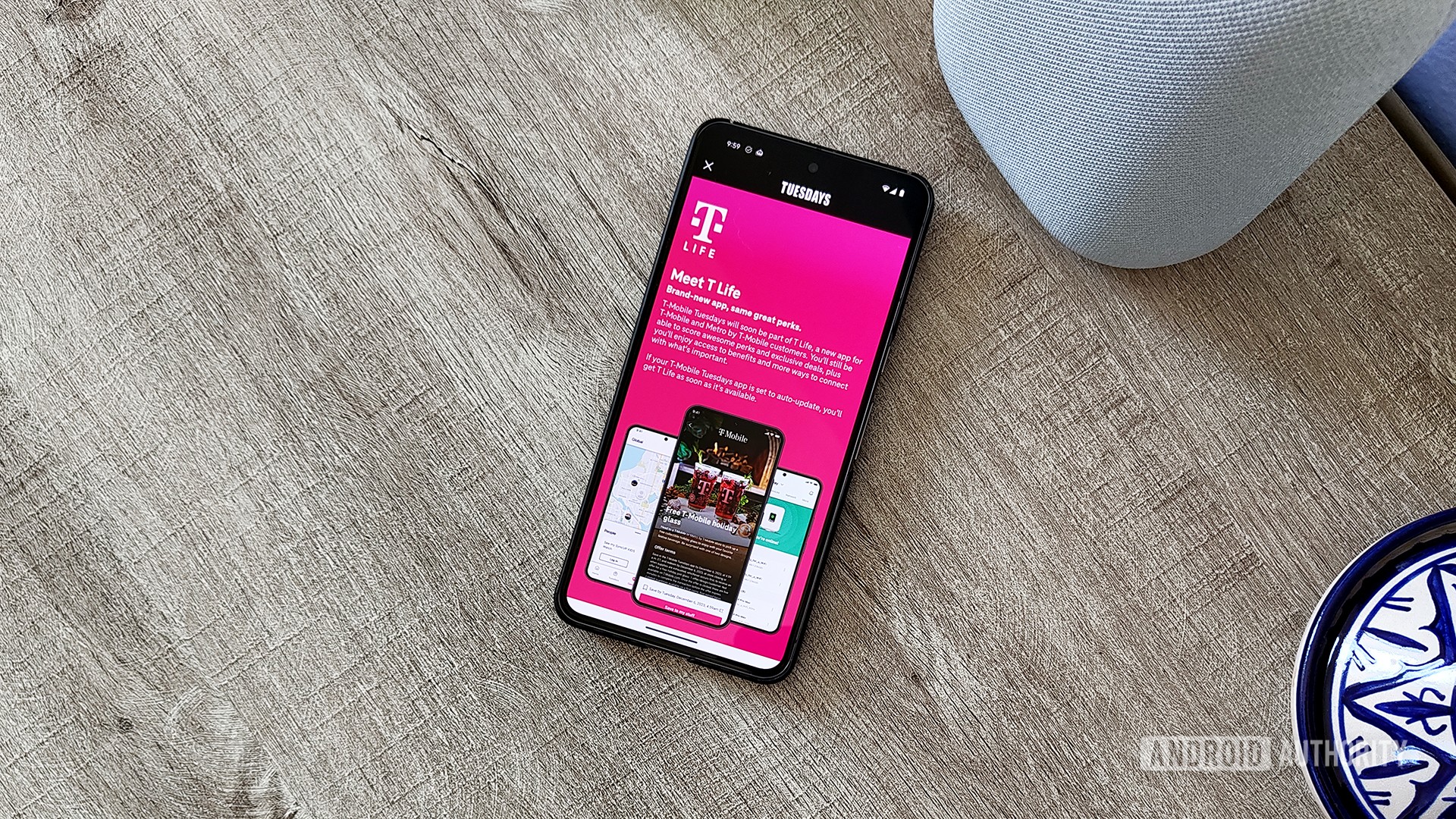

Picture this: you’re Daniel Stenberg, the guy who’s been keeping curl (that handy little tool that moves data around in tons of apps) working like a charm for years. You’re used to the usual chaos of running an open-source project, including the oddball bug reports that pop up. But lately, things have gotten weird. In his blog post "The I in LLM Stands for Intelligence," Stenberg spills the tea on a problem that’s equal parts hilarious and annoying: people using AI tools like ChatGPT or Bard (now Gemini) to crank out bug reports that sound real but are totally made up. It’s not the superhero AI moment you’d expect; it’s more like a comedy of errors.

Bug Hunting Goes High-Tech (Sort Of)

Bug bounties are awesome: companies pay people to find weak spots in their software, and curl’s bounty program has drawn a mix of pros and dreamers hoping to strike it rich. Then came these big AI language tools, hyped up for writing stories or helping with code super fast. Sounds like a perfect match for finding bugs, right? Well, not exactly. Stenberg’s been getting reports where folks toss curl’s code into an AI, hit “go,” and send whatever comes out to HackerOne, hoping for a paycheck. Problem is, a lot of these “bugs” don’t even exist.

One time, a guy admitted he used Bard, and the “flaw” it found was pure nonsense. The reports look fancy—full of techy words and fixes—but they’re fake. Sometimes people mix in their own words or use the AI to tidy up bad English, which Stenberg doesn’t mind. But when it’s just lazy AI junk? That’s when he’s ready to bang his head on the desk.

Why Fake Bugs Are a Big Deal

For Stenberg, these AI-made flops aren’t just a laugh—they’re a pain. When a security report lands, you’ve got to stop everything and check it out. If it’s real, you fix it and save the day. But if it’s garbage, you’ve just wasted time you could’ve spent making curl better. “If the report turned out to be crap,” he says, “we didn’t make things safer, and we lost time.” And with more people jumping on the AI train, the stack of silly reports keeps growing.

You can sometimes spot the AI stuff: it’s got that too-perfect, robotic vibe and falls apart when you ask questions. One report claimed there was a big error in curl’s code, and Stenberg spent 19 minutes digging into it, only to find nothing wrong. The guy’s follow-ups were a mess. Another time, a Bard report got called out quickly when the submitter fessed up. It’s like the AI’s playing a prank, and Stenberg’s stuck dealing with the fallout.

“Smart” AI? More Like Sneaky

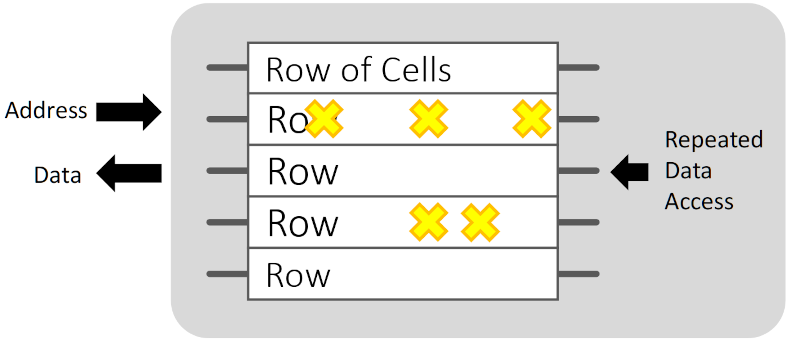

Stenberg’s blog title is a total jab, and you can feel his smirk. These AI tools are great at copying what they’ve seen—think of them as super-talented copycats—but they’re not actually smart. They’ll whip up a story that sounds good, but they don’t really get what’s going on with code or the real world. He says it’s like pairing a tool that’s already bad at spotting real issues with one that’s perfect for making up tales. To wannabe bug hunters, it’s a goldmine: let the AI do the work, cash in. To Stenberg, it’s a giant “ugh.”

How to Fix the Mess?

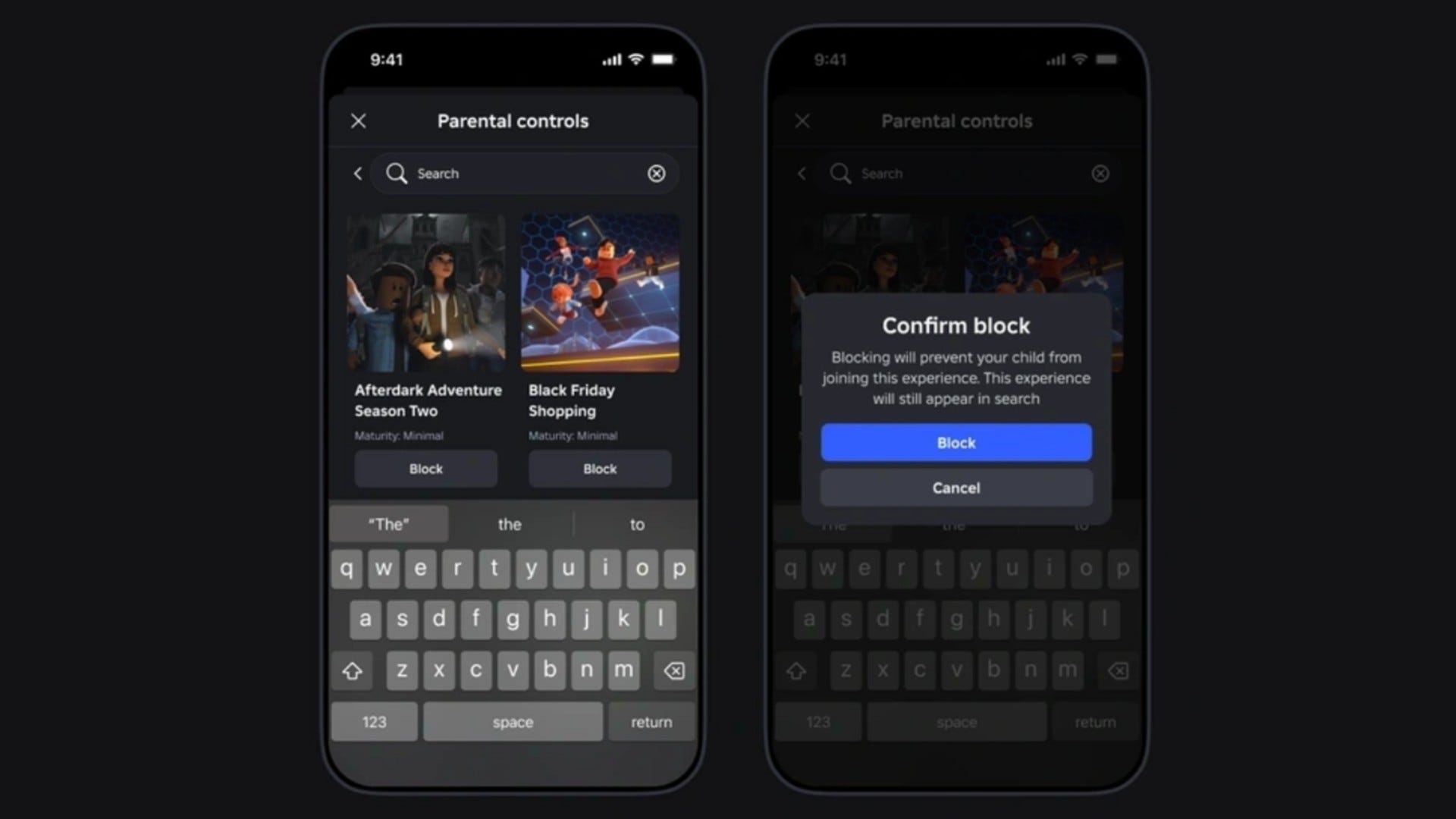

Stenberg’s not just complaining—he’s tossing around ideas. HackerOne doesn’t make it simple to ditch the repeat offenders (though he later found a “block” button), so he’s stuck sorting through the junk. He thinks maybe people will get better at spotting AI clues—like the overly neat writing or the way it dodges tough questions. Someone suggested charging a tiny fee to submit reports, giving it back if it’s not AI trash, but Stenberg’s not sure. He doesn’t want to scare off the good helpers just to dodge the slackers. For now, it’s like junk mail—you deal with it and move on.

Why This Matters to Everyone

This isn’t just about curl—it’s a sneak peek at what happens when cool tech meets people looking for shortcuts. Stenberg’s story is funny in a “you’ve got to be kidding” way, but it’s also a heads-up. People working on free projects—or really anyone using tech—are facing the same thing: AI can be a buddy or a troublemaker, depending on who’s in charge. “I bet we’ll see more AI-made nonsense,” he says, and you can almost hear him sigh. The “I” in LLM might mean intelligence, but right now, it’s dishing out more silliness than smarts.
