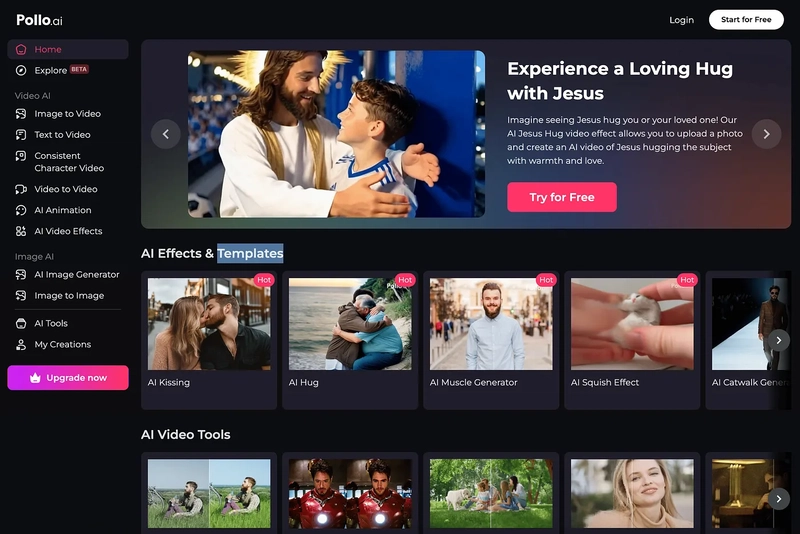

“Nudify” deepfakes stored unprotected online

A generative AI nudify service has been found storing explicit deepfakes in an unprotected cloud database.

Yesterday, we told you about how millions of pictures from specialized dating apps had been stored online without any kind of password protection.

Now it’s the turn of an AI “nudify” service.

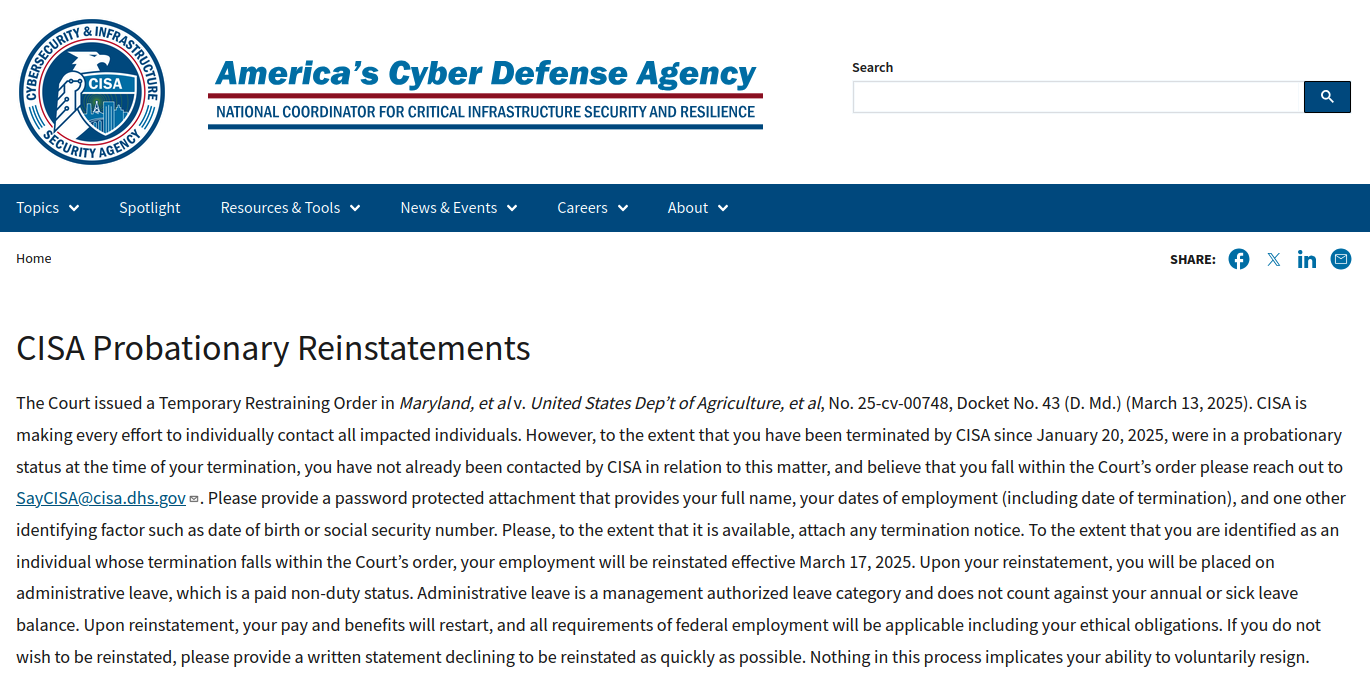

A researcher, famous for finding unprotected cloud storage buckets, has uncovered an unprotected AWS bucket belonging to the nudify service.

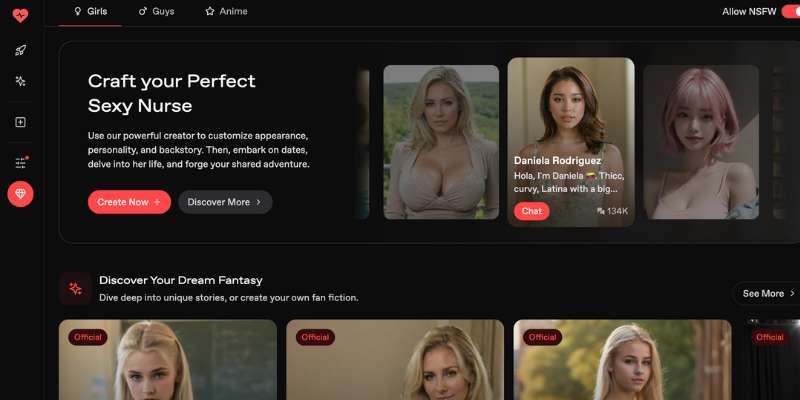

The rising popularity of these nudify services has apparently prompted a number of companies with little or no security awareness to hop on the money train. Millions of people use these services to turn normal pictures into nude images, and it only takes a few minutes.

South Korean AI company GenNomis by AI-NOMIS, or somebody acting on its behalf, stored 93,485 images and JSON files with a total size of 47.8 GB in a publicly exposed database that was neither password-protected nor encrypted.

Looking at the service, GenNomis is an AI-powered image generation platform that allows users to transform text descriptions into images, create AI personas, turn images to videos, face-swap images, remove backgrounds, etc., and all that without restrictions. It also provides a marketplace, where users can buy and sell these images as “artwork.”

The researcher saw numerous pornographic images, including what appeared to be disturbing AI-generated portrayals of very young people. Even though the GenNomis guidelines prohibit explicit images of children and any other illegal activities, the researcher found many of them. That doesn’t mean they were available to buy on the platform, but they were at least created.

Some of the deepfakes are hard to distinguish from real images and, as such, pose serious privacy, ethical, and legal risks, not to mention the humiliation for the people whose images, or parts of them, were used without consent. Sadly, there are many examples where young people have taken their own lives over sextortion attempts.

The researcher contacted the company about what he had found. He told The Register:

“They took it down immediately with no reply.”

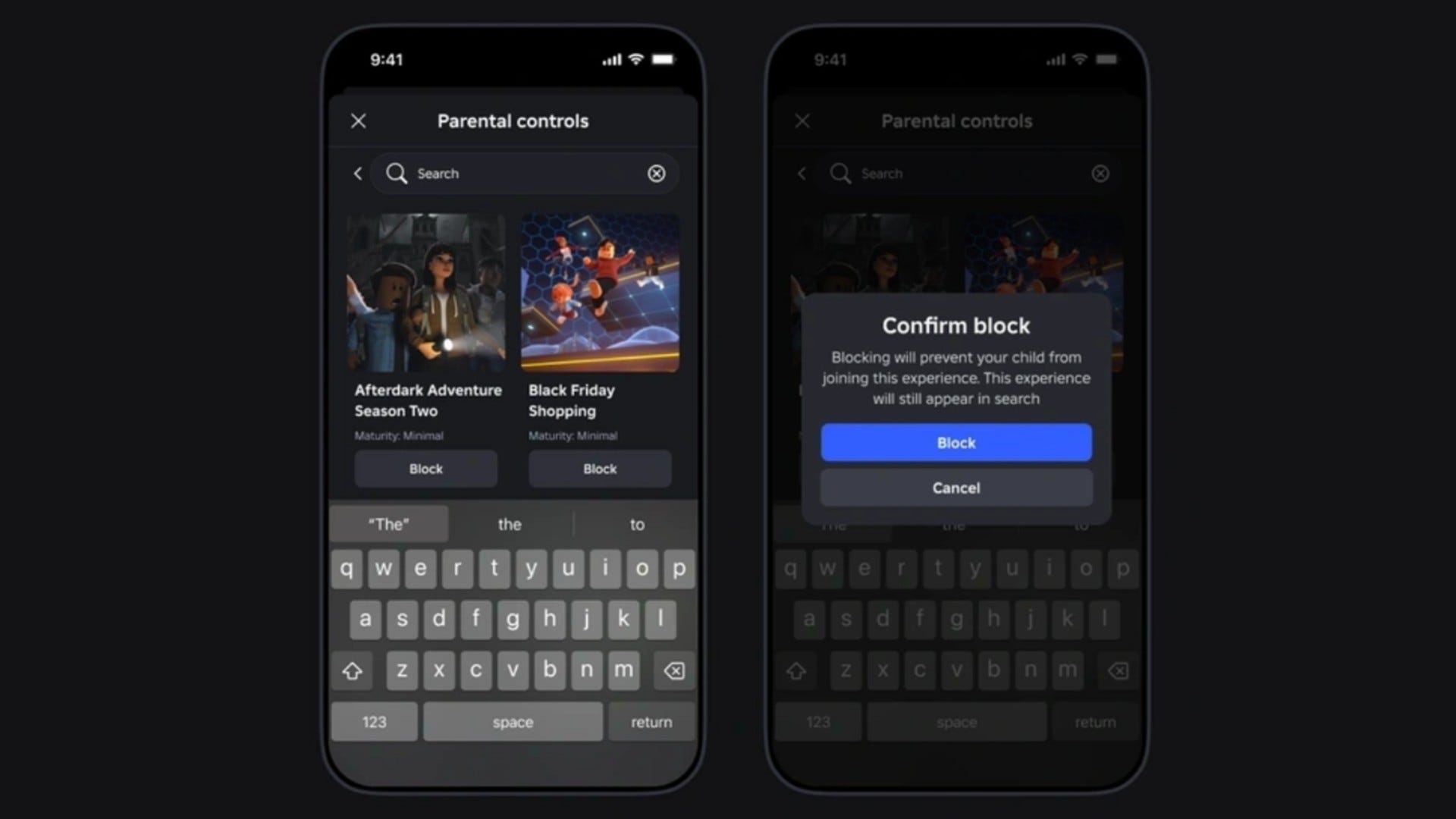

Keep your children safe from nudify services

We’ve seen many cases where social media and other platforms have used the content of their users to train their AI. Some people have a tendency to shrug it off because they don’t see the dangers, but let us explain the possible problems.

In this case, it’s at the extreme end of what the content could be used for.

- Deepfakes: Users of this generative AI could have used the nudify service on publicly available pictures to create explicit deepfakes without consent. AI-generated content, like deepfakes, can be used to spread misinformation, damage your reputation or privacy, or defraud people you know.

- Metadata: Users often forget that the images they upload to social media also contain metadata, such as where the photo was taken. This information could potentially be sold to third parties or used in ways the photographer didn’t intend.

- Intellectual property: Never upload anything you didn’t create or own. Artists and photographers may feel their work is being exploited without proper compensation or attribution.

- Bias: AI models trained on biased datasets can perpetuate and amplify societal biases.

- Facial recognition: Although facial recognition is not the hot topic it once was, it still exists. Actions or statements attributed to images of you (real or not) may be linked to your identity.

- Memory: Once a picture is online, it is almost impossible to get it completely removed. It may continue to exist in caches, backups, and snapshots.
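To make the metadata point above more concrete, here is a minimal Python sketch of removing EXIF data (which is where a photo’s GPS location is typically stored) from a JPEG before sharing it. This is an illustration under stated assumptions, not a complete privacy tool: the function name `strip_exif` is hypothetical, it only handles plain JPEG files, it only drops APP1 segments that carry the EXIF header, and it does not touch other metadata such as XMP.

```python
import struct

# EXIF data lives in a JPEG APP1 segment whose payload starts with this header.
EXIF_HEADER = b"Exif\x00\x00"


def strip_exif(jpeg: bytes) -> bytes:
    """Return a copy of the JPEG bytes with EXIF (APP1) segments removed.

    Hypothetical helper for illustration. Assumes a well-formed JPEG in which
    every segment before the image data has a two-byte length field.
    """
    if jpeg[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing start-of-image marker")

    out = bytearray(b"\xff\xd8")
    pos = 2
    while pos + 4 <= len(jpeg):
        if jpeg[pos] != 0xFF:
            raise ValueError("malformed JPEG: expected a segment marker")
        marker = jpeg[pos + 1]

        # Start of scan: everything after this is compressed image data,
        # so copy the remainder unchanged.
        if marker == 0xDA:
            out += jpeg[pos:]
            return bytes(out)

        # The length field is big-endian and counts itself (2 bytes)
        # plus the payload, but not the 2-byte marker.
        (length,) = struct.unpack(">H", jpeg[pos + 2:pos + 4])
        segment = jpeg[pos:pos + 2 + length]
        payload = segment[4:]

        is_exif = marker == 0xE1 and payload.startswith(EXIF_HEADER)
        if not is_exif:
            out += segment
        pos += 2 + length

    # No scan marker found: keep whatever trailing bytes remain.
    out += jpeg[pos:]
    return bytes(out)
```

Reading a file as bytes, passing it through `strip_exif`, and writing the result to a new file would be the typical usage. Some platforms strip this metadata on upload, but not all do, so removing it yourself is the safer assumption.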

If you want to continue using social media platforms, that is obviously your choice, but consider the above when uploading pictures of yourself, your loved ones, or even complete strangers.

We don’t just report on threats—we remove them

Cybersecurity risks should never spread beyond a headline. Keep threats off your devices by downloading Malwarebytes today.
