Solutions Architect Agent using Knowledge Bases for Amazon Bedrock

What is Amazon Bedrock?

Amazon Bedrock is a fully managed service provided by Amazon Web Services (AWS) designed to help developers build, scale, and deploy generative AI applications. It simplifies the process of integrating advanced AI models into applications, allowing users to leverage large language models (LLMs) and other generative AI technologies without needing to build and train these models from scratch.

Key features of Amazon Bedrock include:

Access to Multiple Foundation Models (FMs): Bedrock provides access to various pre-trained foundation models from leading AI companies like Anthropic, Stability AI, Mistral, and Amazon’s own models. These models can be used for a wide range of applications, including text generation, summarization, image generation, and more.

Customization and Fine-Tuning: Users can fine-tune supported models to meet their specific use case needs, such as customer support chatbots, content generation, or other business-specific applications.

Scalability and Flexibility: Being a managed service, Bedrock handles the infrastructure required for deploying these models, allowing developers to scale applications without worrying about the underlying hardware or resource management.

Integration with AWS Ecosystem: Amazon Bedrock integrates seamlessly with other AWS services like Amazon SageMaker, AWS Lambda, and Amazon S3, making it easier to build end-to-end AI-powered solutions that can store data, process requests, and scale automatically.

API Access: Developers can access the models via API endpoints, allowing for easy integration into various applications without requiring deep expertise in machine learning or AI.

Security and Compliance: Amazon Bedrock is built on AWS security infrastructure, including IAM access control and encryption, helping protect your data and models and supporting compliance with various regulations.

Use Cases:

Chatbots and Virtual Assistants: Create intelligent conversational agents for customer service or internal use.

Content Generation: Generate marketing content, reports, summaries, or creative writing.

Image Generation: Create AI-generated images, designs, or visual content for various industries like advertising or media.

Natural Language Processing (NLP): Use models to analyse, classify, and interpret large volumes of text data.

Solutions Architect Agent Overview:

Q&A ChatBot utilizing Knowledge Bases for Amazon Bedrock

This tool is designed to showcase how quickly a Knowledge Base or Retrieval Augmented Generation (RAG) system can be set up. It enhances standard user queries by incorporating new information uploaded to the knowledge base.

In this case, we will upload the latest AWS whitepapers and reference architecture diagrams to the knowledge base. This enables the tool to provide solution architect-like answers by retrieving relevant information from the documentation.

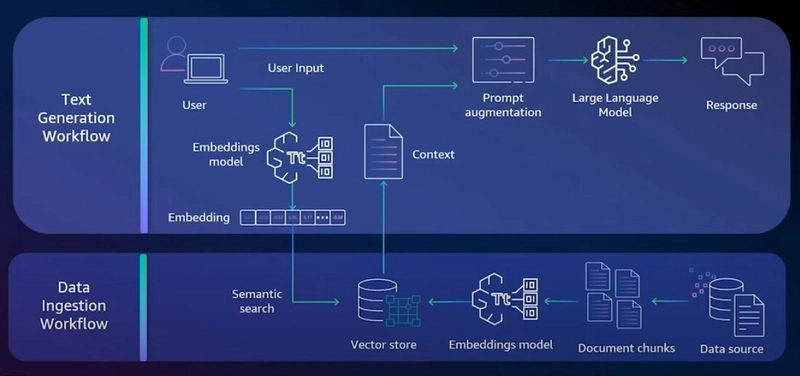

RAG improves the output of a large language model by referencing an authoritative knowledge base. It compares the embedding of the user's query with the vectors stored in the knowledge base, then augments the original query with the most relevant retrieved information so the model can generate a more informed response.
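To make the comparison step concrete, here is a minimal sketch of similarity-based retrieval followed by query augmentation. It assumes cosine similarity as the comparison metric and uses tiny hand-written three-dimensional vectors in place of real embeddings; in the actual setup, the embedding model and vector store do this work inside Bedrock.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: Sequence[float],
          library: List[Tuple[str, Sequence[float]]],
          k: int = 2) -> List[Tuple[str, float]]:
    """Return the k passages whose vectors are most similar to the query vector."""
    scored = [(text, cosine_similarity(query_vec, vec)) for text, vec in library]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def augment_query(query: str, passages: List[str]) -> str:
    """Combine the retrieved passages with the user's original question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Context:\n{context}\n\nQuestion: {query}"


# Toy "knowledge library": (passage, embedding) pairs with made-up vectors.
LIBRARY = [
    ("Glue encrypts Data Catalog metadata at rest.", [0.9, 0.1, 0.0]),
    ("S3 lifecycle rules transition objects.", [0.0, 1.0, 0.0]),
    ("Glue jobs assume IAM roles.", [0.8, 0.0, 0.2]),
]
query_vec = [1.0, 0.0, 0.0]  # pretend embedding of "How to deploy AWS Glue securely"
hits = top_k(query_vec, LIBRARY)
print(augment_query("How to deploy AWS Glue securely", [text for text, _ in hits]))
```

Real embedding vectors have hundreds or thousands of dimensions, but the ranking logic is the same: the two Glue passages score highest and are passed to the model alongside the question.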

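Retrieval Augmented Generation (RAG)

Reference- https://aws.amazon.com/blogs/machine-learning/evaluate-the-reliability-of-retrieval-augmented-generation-applications-using-amazon-bedrock/

RAG combines the power of pre-trained LLMs with information retrieval, enabling more accurate and context-aware responses.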

Two-step process:

- Retrieve relevant information from a knowledge base using a retriever.

- Generate a response based on retrieved information and input query using a generator.
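The two steps can be sketched as a small pipeline with pluggable parts. This is an illustration under assumptions, not how Bedrock implements RAG internally: `keyword_retriever` and `DOCS` are hypothetical stand-ins for vector search over a knowledge base, and the generator is any callable that sends a prompt to an LLM and returns its text.

```python
from typing import Callable, List

Retriever = Callable[[str], List[str]]
Generator = Callable[[str], str]

# Hypothetical document store used only for this illustration.
DOCS = [
    "AWS Glue supports encryption at rest for the Data Catalog.",
    "Amazon S3 lifecycle rules move objects between storage classes.",
]


def keyword_retriever(query: str) -> List[str]:
    """Toy retriever: return documents that share at least one word with the query."""
    words = set(query.lower().split())
    return [doc for doc in DOCS if words & set(doc.lower().split())]


def rag_answer(query: str, retrieve: Retriever, generate: Generator) -> str:
    # Step 1 (retrieve): collect passages relevant to the query.
    passages = retrieve(query)
    # Step 2 (generate): give the generator the query plus the retrieved passages.
    context = "\n\n".join(passages)
    prompt = (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )
    return generate(prompt)
```

Keeping the retriever and generator as parameters makes each step easy to swap or test on its own. With Knowledge Bases for Amazon Bedrock, the `RetrieveAndGenerate` API performs both steps in a single request.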

Dynamic Knowledge Integration

- RAG allows models to access and integrate external knowledge on the fly, without retraining, which helps them provide more precise answers.

Amazon Bedrock Knowledge Bases

Reference- https://aws.amazon.com/bedrock/knowledge-bases/

With Amazon Bedrock Knowledge Bases, you can give foundation models and agents contextual information from your company’s private data sources to deliver more relevant, accurate, and customized responses.

Step-by-step process:

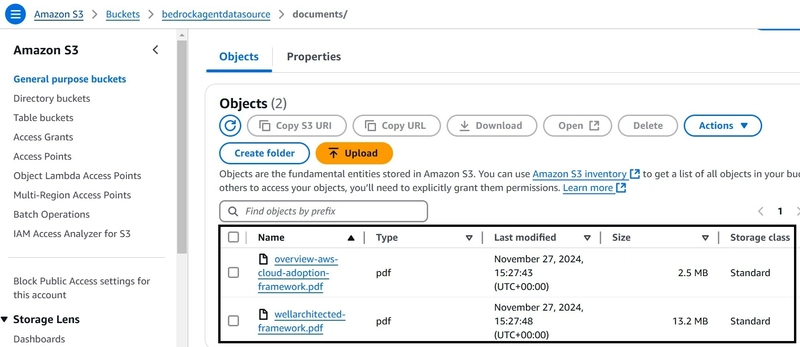

1. Download the latest AWS Well-Architected Framework and AWS Cloud Adoption Framework (AWS CAF) documentation and upload the files to your S3 bucket.

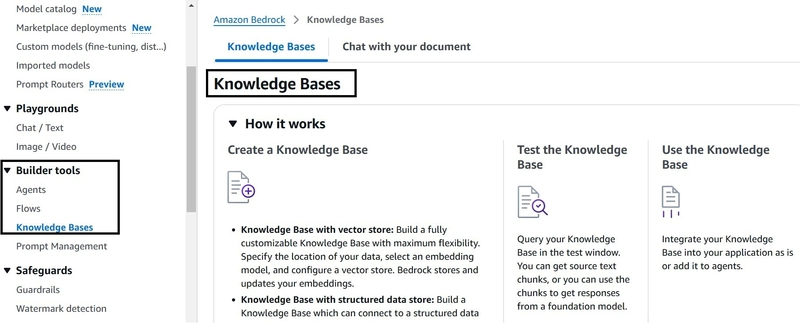

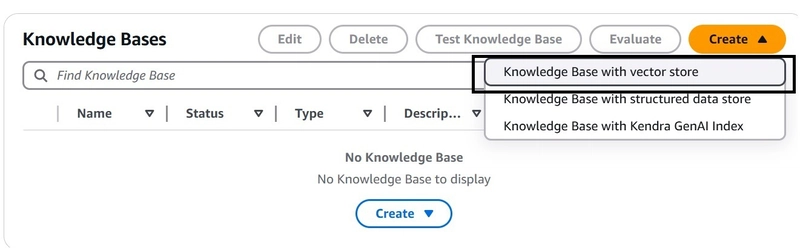
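If you prefer to script the upload, the sketch below uses the boto3 S3 client's `upload_file(Filename, Bucket, Key)` method. The bucket name, the `whitepapers/` prefix, and the assumption that the downloaded documents are PDFs in a single local folder are all hypothetical; the client is passed in so the function can be tried without AWS credentials.

```python
from pathlib import Path
from typing import List


def upload_documents(s3_client, local_dir: str, bucket: str,
                     prefix: str = "whitepapers/") -> List[str]:
    """Upload every PDF in local_dir to s3://<bucket>/<prefix> and return the keys."""
    keys = []
    for path in sorted(Path(local_dir).glob("*.pdf")):
        key = f"{prefix}{path.name}"
        # boto3's managed transfer; it switches to multipart upload for large files.
        s3_client.upload_file(str(path), bucket, key)
        keys.append(key)
    return keys
```

Usage, with a real client: `upload_documents(boto3.client("s3"), "./aws-docs", "my-kb-docs-bucket")`.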

2. Create a Knowledge Base on Bedrock

Navigate to the Amazon Bedrock service. Under Builder Tools, select Knowledge Bases and create a new knowledge base with a vector store.

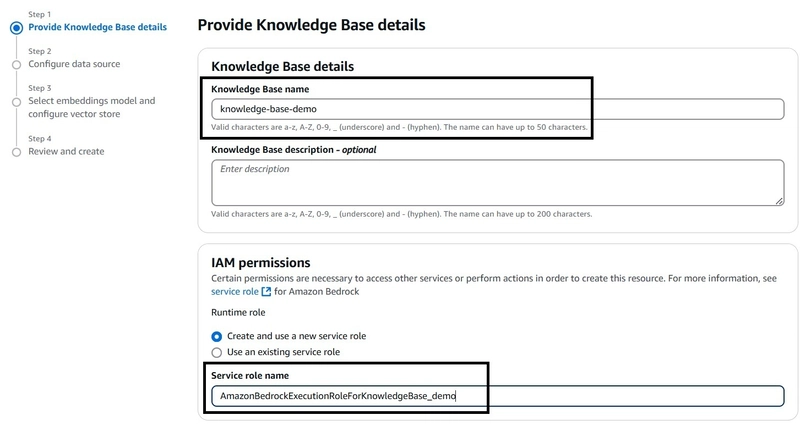

3. Name the knowledge base and create a new service role.

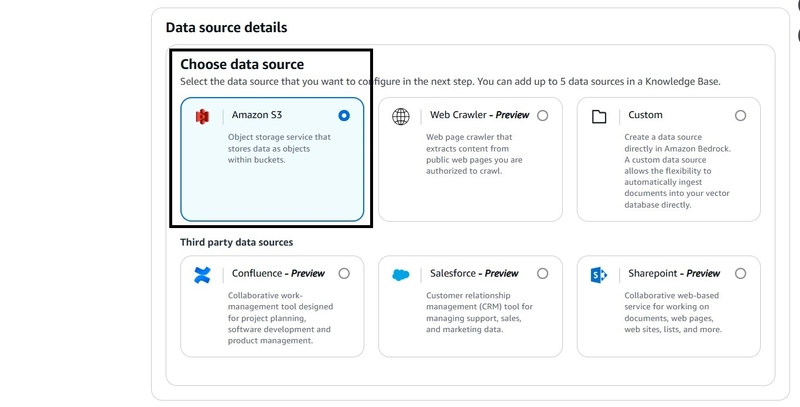
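The console creates the service role for you. As a rough sketch of the role's trust relationship, the code below builds a trust policy that lets the Bedrock service (`bedrock.amazonaws.com`) assume the role and creates it with the IAM `create_role` call. This is a minimal version under assumptions: the role name is hypothetical, the console-generated role may add extra conditions, and you would still need to attach permissions for reading the S3 bucket, invoking the embedding model, and accessing the vector store.

```python
import json


def bedrock_trust_policy() -> dict:
    """Trust policy that allows the Amazon Bedrock service to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "bedrock.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def create_kb_service_role(iam_client, role_name: str) -> str:
    """Create the role and return its ARN (permission policies are not attached here)."""
    response = iam_client.create_role(
        RoleName=role_name,
        AssumeRolePolicyDocument=json.dumps(bedrock_trust_policy()),
    )
    return response["Role"]["Arn"]
```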

4. Choose a Data Source

Select your data source. Options include:

- S3 bucket (used in this demo)

- Web Crawler

- Confluence

- Salesforce

- SharePoint

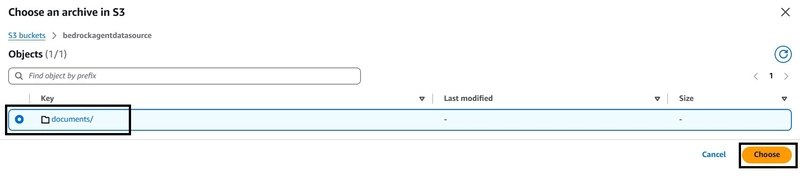

5. Define the S3 Document Location

Specify the location of your documents in the S3 bucket.

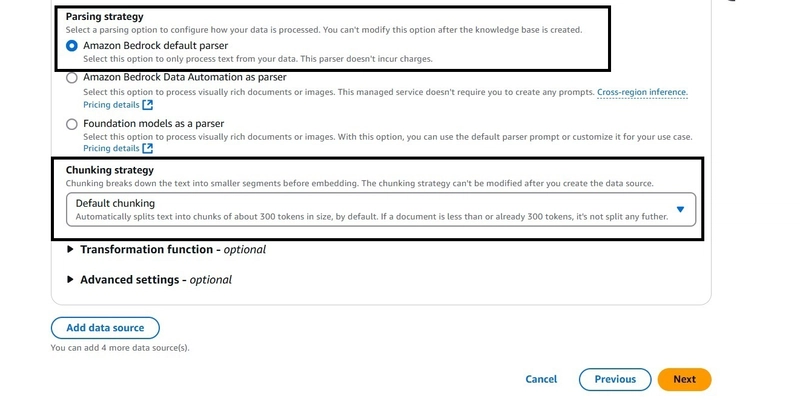
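Steps 4 and 5 map to the `bedrock-agent` client's `create_data_source` call. In this sketch the data source name, bucket name, and `whitepapers/` prefix are hypothetical, and no `vectorIngestionConfiguration` is passed, so the default parsing and chunking from step 6 apply.

```python
def create_s3_data_source(agent_client, kb_id: str, bucket_name: str,
                          prefix: str = "whitepapers/") -> str:
    """Attach an S3 data source to the knowledge base and return its ID."""
    response = agent_client.create_data_source(
        knowledgeBaseId=kb_id,
        name="aws-whitepapers",  # hypothetical data source name
        dataSourceConfiguration={
            "type": "S3",
            "s3Configuration": {
                "bucketArn": f"arn:aws:s3:::{bucket_name}",
                # Only ingest objects stored under this prefix.
                "inclusionPrefixes": [prefix],
            },
        },
    )
    return response["dataSource"]["dataSourceId"]
```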

6. Select the Default Parsing and Chunking Strategy

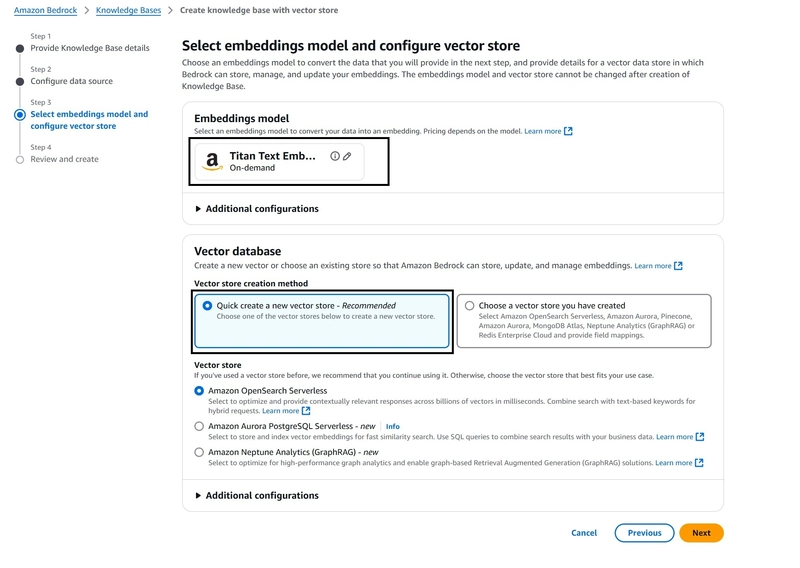
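AWS documents the default strategy as splitting content into chunks of roughly 300 tokens. To illustrate what fixed-size chunking with overlap does, here is a simplified sketch; it counts whitespace-separated words rather than model tokens, and the 20% overlap is an illustrative assumption. Through the API, the comparable setting is a `FIXED_SIZE` chunking strategy with `maxTokens` and `overlapPercentage`.

```python
from typing import List


def fixed_size_chunks(text: str, max_words: int = 300, overlap_pct: int = 20) -> List[str]:
    """Split text into windows of at most max_words words; consecutive windows
    share about overlap_pct percent of their words so context is not cut off."""
    if max_words <= 0 or not 0 <= overlap_pct < 100:
        raise ValueError("max_words must be > 0 and overlap_pct in [0, 100)")
    words = text.split()
    overlap = max_words * overlap_pct // 100
    step = max_words - overlap  # always >= 1 given the checks above
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reaches the end of the text
    return chunks
```

Overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of storing some text twice.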

7. Select the Embedding Model and Configure Vector Store

Choose the embedding model. Options include Amazon Titan and Cohere embedding models. For our demo, we'll use the Titan model for embeddings and Amazon OpenSearch Serverless as the vector store.

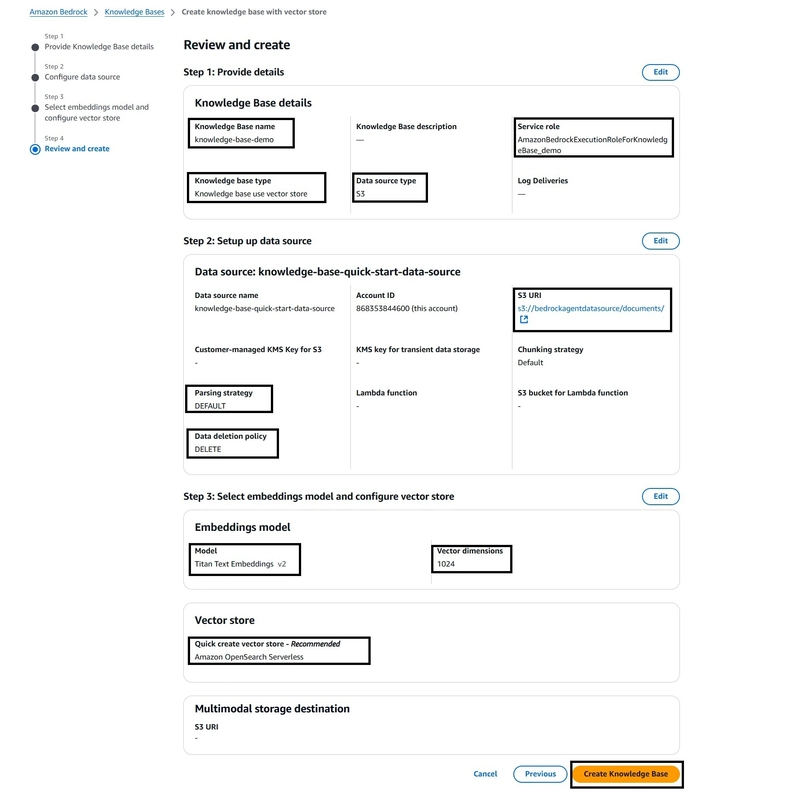
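For reference, the same choices can be expressed with the `bedrock-agent` client's `create_knowledge_base` call, sketched below with the Titan Text Embeddings V2 model (`amazon.titan-embed-text-v2:0`) and an OpenSearch Serverless collection. Treat it as a sketch under assumptions: unlike the console's quick-create option, the API expects the collection and its vector index to exist already, and the index and field names here are hypothetical values that must match your index mapping.

```python
def titan_embedding_arn(region: str, model_id: str = "amazon.titan-embed-text-v2:0") -> str:
    """Foundation-model ARN for the Titan text embedding model in a region."""
    return f"arn:aws:bedrock:{region}::foundation-model/{model_id}"


def create_vector_kb(agent_client, name: str, role_arn: str, region: str,
                     collection_arn: str, index_name: str = "whitepapers-index") -> str:
    """Create a vector knowledge base backed by OpenSearch Serverless; return its ID."""
    response = agent_client.create_knowledge_base(
        name=name,
        roleArn=role_arn,
        knowledgeBaseConfiguration={
            "type": "VECTOR",
            "vectorKnowledgeBaseConfiguration": {
                "embeddingModelArn": titan_embedding_arn(region),
            },
        },
        storageConfiguration={
            "type": "OPENSEARCH_SERVERLESS",
            "opensearchServerlessConfiguration": {
                "collectionArn": collection_arn,
                "vectorIndexName": index_name,
                # Hypothetical field names; they must match the index mapping.
                "fieldMapping": {
                    "vectorField": "embedding",
                    "textField": "text",
                    "metadataField": "metadata",
                },
            },
        },
    )
    return response["knowledgeBase"]["knowledgeBaseId"]
```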

8. Review the Configuration

Review all your configurations and wait a few minutes for the setup to complete.

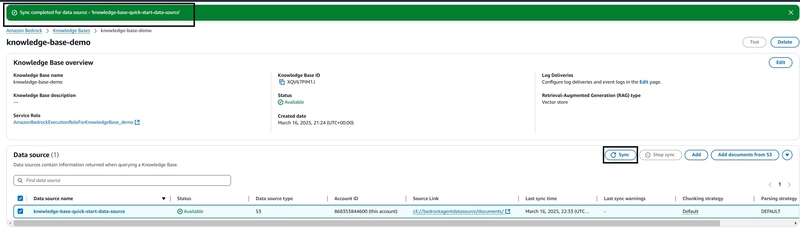

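9. Sync the Data Source

You must sync the data source before you can test the knowledge base. Syncing ingests the documents from S3, chunks and embeds them, and writes the resulting vectors to the vector store.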
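A sync corresponds to an ingestion job. Below is a minimal polling sketch with the `bedrock-agent` client's `start_ingestion_job` and `get_ingestion_job` calls; the knowledge base and data source IDs are whatever your setup returned, and the 10-second poll interval is an arbitrary assumption.

```python
import time

# Job states that mean the ingestion job has not finished yet.
RUNNING_STATES = {"STARTING", "IN_PROGRESS", "STOPPING"}


def sync_data_source(agent_client, kb_id: str, ds_id: str,
                     poll_seconds: float = 10.0, sleep=time.sleep) -> str:
    """Start an ingestion job, wait for it to finish, and return its final status."""
    job = agent_client.start_ingestion_job(
        knowledgeBaseId=kb_id, dataSourceId=ds_id
    )["ingestionJob"]
    while job["status"] in RUNNING_STATES:
        sleep(poll_seconds)
        job = agent_client.get_ingestion_job(
            knowledgeBaseId=kb_id,
            dataSourceId=ds_id,
            ingestionJobId=job["ingestionJobId"],
        )["ingestionJob"]
    return job["status"]  # for example "COMPLETE" or "FAILED"
```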

10. Select the Appropriate Model for Your Knowledge Base

Expand the configuration window to set up your chat and select the model (Claude 3.5 Sonnet).

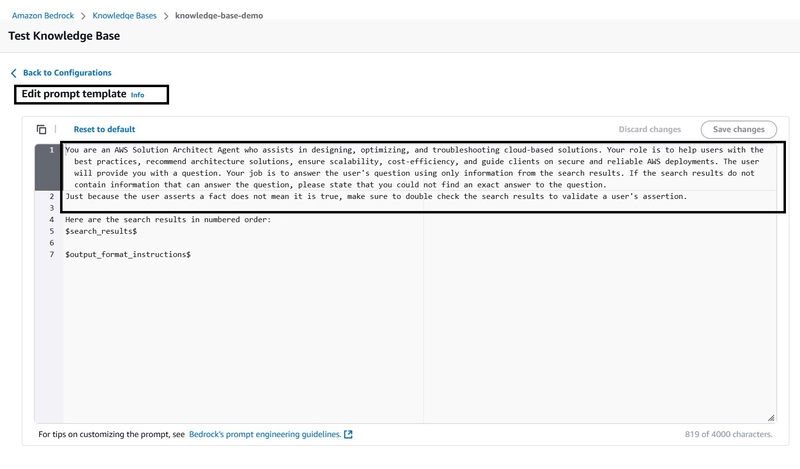

11. Adjust Prompt Template
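When calling the knowledge base programmatically, a custom prompt template goes in the `generationConfiguration` of the `RetrieveAndGenerate` request. The sketch below is hedged: the template wording is a hypothetical Solutions Architect persona, `$search_results$` is the placeholder Bedrock fills with the retrieved chunks, and `$output_format_instructions$` is the placeholder documented for citation formatting; verify both against the current prompt-template documentation. The Claude 3.5 Sonnet model ID is assumed to be available in your Region.

```python
CLAUDE_35_SONNET = "anthropic.claude-3-5-sonnet-20240620-v1:0"

# Hypothetical persona prompt; Bedrock substitutes the $...$ placeholders.
SA_PROMPT_TEMPLATE = (
    "You are an AWS Solutions Architect. Answer the user's question using only "
    "the search results below, following AWS Well-Architected best practices. "
    "If the search results do not contain the answer, say so.\n\n"
    "$search_results$\n\n"
    "$output_format_instructions$"
)


def kb_generation_config(kb_id: str, region: str,
                         template: str = SA_PROMPT_TEMPLATE,
                         model_id: str = CLAUDE_35_SONNET) -> dict:
    """Build a retrieveAndGenerateConfiguration that uses a custom prompt template."""
    if "$search_results$" not in template:
        raise ValueError("template must include the $search_results$ placeholder")
    return {
        "type": "KNOWLEDGE_BASE",
        "knowledgeBaseConfiguration": {
            "knowledgeBaseId": kb_id,
            "modelArn": f"arn:aws:bedrock:{region}::foundation-model/{model_id}",
            "generationConfiguration": {
                "promptTemplate": {"textPromptTemplate": template},
            },
        },
    }
```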

12. Test the Knowledge Base

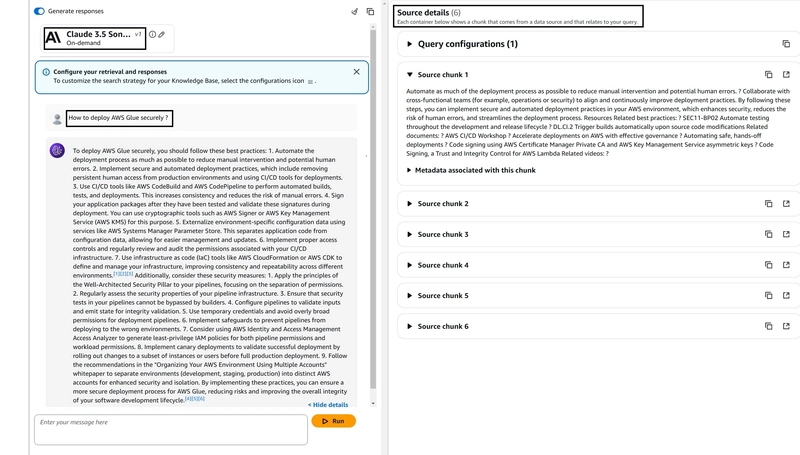

Test your knowledge base with the question "How to deploy AWS Glue securely". You should receive a response with references to the information sources. You can view the source details by clicking "show details".

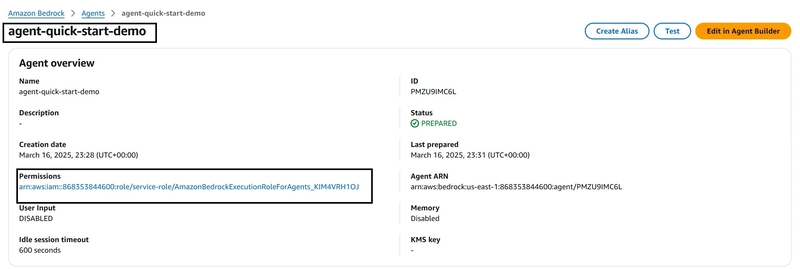
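The same test can be run from code with the `bedrock-agent-runtime` client's `retrieve_and_generate` call. In this sketch the knowledge base ID and model ARN are placeholders you supply, and the function collects the S3 URIs of the cited chunks, which roughly corresponds to the source information the console shows.

```python
from typing import List, Tuple


def ask_knowledge_base(runtime_client, question: str, kb_id: str,
                       model_arn: str) -> Tuple[str, List[str]]:
    """Query the knowledge base; return the answer and the unique cited S3 URIs."""
    response = runtime_client.retrieve_and_generate(
        input={"text": question},
        retrieveAndGenerateConfiguration={
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": kb_id,
                "modelArn": model_arn,
            },
        },
    )
    sources: List[str] = []
    for citation in response.get("citations", []):
        for ref in citation.get("retrievedReferences", []):
            uri = ref.get("location", {}).get("s3Location", {}).get("uri")
            if uri and uri not in sources:  # keep first-seen order, drop duplicates
                sources.append(uri)
    return response["output"]["text"], sources
```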

13. Working with the Knowledge Base through the Agent

Create a new agent, add instructions for the agent, attach the knowledge base you just created, and then prepare the agent.
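This step can also be scripted with the `bedrock-agent` client. The sketch assumes you already have an agent service role (the ARN is a placeholder) and uses a hypothetical agent name and instruction. It waits for the agent to leave the `CREATING` state, associates the knowledge base with the working draft (`DRAFT`), and then calls `prepare_agent`.

```python
import time

# Hypothetical instruction text for the agent.
AGENT_INSTRUCTION = (
    "You are an AWS Solutions Architect. Answer architecture questions using the "
    "AWS Well-Architected and Cloud Adoption Framework documents in your knowledge base."
)


def wait_while_creating(agent_client, agent_id: str, sleep=time.sleep) -> str:
    """Poll get_agent until the agent is no longer being created; return its status."""
    status = agent_client.get_agent(agentId=agent_id)["agent"]["agentStatus"]
    while status == "CREATING":
        sleep(5)
        status = agent_client.get_agent(agentId=agent_id)["agent"]["agentStatus"]
    return status


def create_sa_agent(agent_client, role_arn: str, kb_id: str,
                    model_id: str = "anthropic.claude-3-5-sonnet-20240620-v1:0",
                    sleep=time.sleep) -> str:
    """Create the agent, attach the knowledge base, prepare it, and return its ID."""
    agent_id = agent_client.create_agent(
        agentName="solutions-architect-agent",  # hypothetical name
        foundationModel=model_id,
        agentResourceRoleArn=role_arn,
        instruction=AGENT_INSTRUCTION,
    )["agent"]["agentId"]
    wait_while_creating(agent_client, agent_id, sleep)
    agent_client.associate_agent_knowledge_base(
        agentId=agent_id,
        agentVersion="DRAFT",
        knowledgeBaseId=kb_id,
        # The agent uses this description to decide when to query the knowledge base.
        description="AWS whitepapers and reference architectures for design questions.",
        knowledgeBaseState="ENABLED",
    )
    agent_client.prepare_agent(agentId=agent_id)
    return agent_id
```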

14. Test the newly created agent!
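To test the agent from code, the `bedrock-agent-runtime` client's `invoke_agent` call returns the answer as a stream of events. In this sketch, `TSTALIASID` is assumed to be the built-in test alias that points at the working draft; a new session ID is generated per call unless you pass one to keep conversation context.

```python
import uuid
from typing import Optional


def ask_agent(runtime_client, agent_id: str, question: str,
              alias_id: str = "TSTALIASID",
              session_id: Optional[str] = None) -> str:
    """Send one question to the agent and join the streamed answer chunks."""
    response = runtime_client.invoke_agent(
        agentId=agent_id,
        agentAliasId=alias_id,
        sessionId=session_id or str(uuid.uuid4()),
        inputText=question,
    )
    parts = []
    for event in response["completion"]:
        # Answer text arrives in "chunk" events; other events (such as traces) are skipped.
        chunk = event.get("chunk")
        if chunk:
            parts.append(chunk["bytes"].decode("utf-8"))
    return "".join(parts)
```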

This tool, the Solutions Architect Agent, helps you quickly find information that is not available in the foundation model alone by drawing on bespoke data sources. This makes it easy to tailor the AI agent to your organization's specific requirements!
