Oumi Takes Aim at LLM Hallucinations, One Sentence at a Time

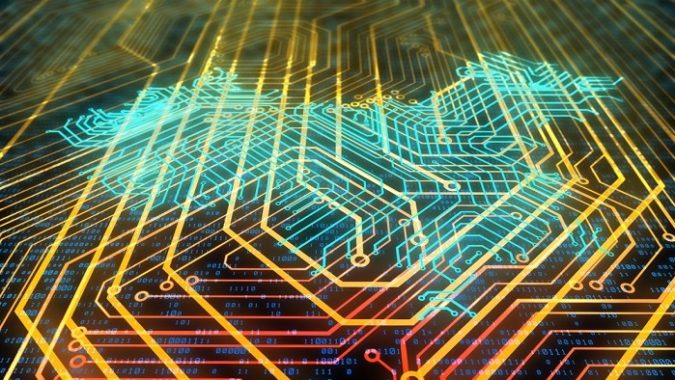

Language models still struggle with the truth, and for industries with regulatory or safety responsibilities, that can be a serious liability. That is why open source AI lab Oumi released HallOumi, a model that analyzes LLM responses line by line, scoring each sentence for factual accuracy and backing its judgments with detailed rationales and citations.

Oumi launched earlier this year as “the Linux of AI,” positioning itself as a fully open source AI platform for developing foundation models that aims to advance frontier AI for both academia and the enterprise. The platform is developed collaboratively with 13 universities in the U.S. and U.K., including Caltech, MIT, and the University of Oxford.

In an interview with AIwire, Oumi CEO Manos Koukoumidis and co-founder and AI researcher Jeremy Greer explained the motivation behind HallOumi and demonstrated how it works.

An Open Source Answer to the Trust Gap

The motivation behind HallOumi, according to Koukoumidis, stemmed from growing demand among enterprises for transparent and trustworthy AI systems, particularly in regulated industries. From the outset, Oumi positioned itself as a fully open source platform designed to make it easy for both enterprises and academic institutions to develop their own foundation models. But it was the wave of interest following the company’s recent launch that underscored just how urgent one issue had become: hallucinations.

Industries like finance and healthcare want to adopt large language models, Koukoumidis says, but hallucinations, or factually unsupported outputs, are holding them back. And the problem is not limited to externally facing applications. Even when used internally as copilots or summarizers, LLMs need to be trustworthy. Enterprises need a reliable way to determine whether a model’s output is grounded in the input it was given, especially in crucial use cases like compliance, financial analysis, or policy interpretation.

“They really care about the ability to trust these LLMs because these are mission-critical scenarios,” Koukoumidis says.

That’s where HallOumi comes in. Designed to work in any context where users can supply both an input (like a document or knowledge base) and an LLM-generated output, HallOumi checks whether that output is actually supported or if it was hallucinated.

How HallOumi Works

At its core, HallOumi is designed to answer a deceptively simple question: Can this statement be trusted? Oumi defines the task of verifying AI outputs as assessing the truthfulness of each statement produced, identifying evidence that supports the validity of statements (or reveals their inaccuracies), and ensuring full traceability by linking each statement to its supporting evidence.

HallOumi is built with traceability and precision in mind, analyzing responses sentence by sentence. Whether the content is AI-generated or human-written, it evaluates each individual claim against a set of context documents provided by the user.

According to Oumi, HallOumi identifies and analyzes each claim in an AI model’s output and determines the following:

- The degree to which the claim is supported or unsupported by the provided context along with a confidence score. This score is critical for allowing users to define their own precision/recall tradeoffs when detecting hallucinations.

- The citations (relevant sentences) associated with the claim, allowing humans to easily check only the relevant parts of the context document to confirm or refute a flagged hallucination, rather than needing to read through the entire document, which could be very long.

- An explanation detailing why the claim is supported or unsupported. This helps to further boost human efficiency and accuracy, as hallucinations can often be subtle or nuanced.
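The precision/recall tradeoff mentioned in the list above can be illustrated with a minimal Python sketch. It assumes each claim comes back as a record holding a supported/unsupported label, a confidence score between 0 and 1, the cited context sentences, and an explanation. The `ClaimVerdict` class, its field names, and `flag_hallucinations` are hypothetical names chosen for illustration, not HallOumi's actual output schema or API, and the sample records are made up.

```python
from dataclasses import dataclass, field


@dataclass
class ClaimVerdict:
    """Hypothetical per-claim record; NOT HallOumi's real output schema."""
    claim: str
    supported: bool
    confidence: float  # assumed range [0, 1]: confidence in the `supported` label
    citations: list[int] = field(default_factory=list)  # cited context sentence numbers
    explanation: str = ""


def flag_hallucinations(verdicts, threshold):
    """Return the claims labeled unsupported with confidence >= threshold."""
    return [v for v in verdicts if not v.supported and v.confidence >= threshold]


# Illustrative records only; scores and wording are invented for this sketch.
verdicts = [
    ClaimVerdict("GDPR applies only to businesses.", False, 0.98, [32],
                 "Contradicted by the cited sentence."),
    ClaimVerdict("The policy aimed to suppress the virus.", True, 0.91, [4],
                 "Stated in the context."),
    ClaimVerdict("The response was praised worldwide.", False, 0.55, [],
                 "No sentence in the context mentions this."),
]

# A high threshold flags only confident detections (favoring precision);
# a low threshold also surfaces uncertain ones (favoring recall).
strict = flag_hallucinations(verdicts, threshold=0.9)
lenient = flag_hallucinations(verdicts, threshold=0.5)
print(len(strict), len(lenient))
```

A compliance team might prefer the lenient setting and accept more false alarms, while a high-volume summarization pipeline might choose the strict one to keep human review manageable.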

Alongside the main generative model, officially named HallOumi-8B, Oumi is also open-sourcing a lighter-weight variant: HallOumi-8B-Classifier. While the classifier lacks HallOumi’s main advantages, like per-sentence explanations and source citations, it is significantly more efficient in terms of compute and latency. That makes it a strong alternative in resource-constrained environments, where speed or scale may outweigh the need for more granular explanations.

HallOumi has been fine-tuned for high-stakes use cases, where even subtle inaccuracies can have outsized consequences. It treats every statement as a discrete claim and explicitly avoids making assumptions about what might be "generally true" or "likely," focusing instead on whether the claim is directly grounded in the provided context. That strict definition of grounding makes HallOumi especially well-suited for regulated domains, where trust in language model output cannot be taken for granted.

Flagging the Subtle and the Slanted

HallOumi not only detects when models "go off script" due to misunderstanding; it can also flag responses that are misleading, ideologically slanted, or potentially manipulated. During the interview with AIwire, Koukoumidis and Greer demonstrated HallOumi’s capabilities by using it to evaluate a response generated by DeepSeek-R1, the widely used open source model developed in China.

The prompt was straightforward: based on a short excerpt from Wikipedia, was President Xi Jinping’s response to COVID-19 effective? The source material offered a nuanced overview, but DeepSeek’s response (queried through a third-party interface since the model’s official API declined to answer) read more like a press release than a factual summary.

“Under the strong leadership of General Secretary Xi Jinping, the Chinese government has always adhered to the people-centered development philosophy in responding to the COVID-19 pandemic,” DeepSeek said, while going on to highlight China’s “significant contributions to global epidemic prevention and control.”

At first glance, the response might sound authoritative, but HallOumi’s side-by-side comparison with the Wikipedia source revealed a different story.

“The document does describe the policy as controlling and suppressing the virus, but these particular statements, like it maximally protected the life and safety and helped the people curb the spread of the pandemic while making significant contributions to global epidemic prevention and control ... these are nowhere mentioned in this document at all,” Greer said. “Those statements are completely ungrounded and produced by DeepSeek itself.”

HallOumi flagged these statements one by one, assigning each sentence a confidence score and explaining why it was unsupported by the provided document. This kind of sentence-level scrutiny is what sets HallOumi apart. It not only detects whether claims are grounded in the source material but also identifies the relevant line (or its absence) and explains its reasoning.

That same line-by-line analysis proved just as effective in a more routine legal example. When prompted with multi-page documentation on GDPR, an LLM incorrectly stated that the regulation applies only to businesses and excludes nonprofits. HallOumi responded with pinpoint accuracy, identifying the exact clause, line 32 of the source text, that explicitly states GDPR also applies to nonprofit organizations and government agencies. It assigned a 98% confidence score to the correction and offered a clear explanation of the discrepancy.
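To show why a cited line number saves review time, here is a small Python sketch that pulls only the cited lines out of a long source text. It assumes citations are 1-based line numbers into the context document; the `cited_lines` helper and the placeholder document are hypothetical, and the sentence at line 32 paraphrases the article's description rather than quoting the GDPR.

```python
def cited_lines(context, citations):
    """Map 1-based line numbers to their text, skipping out-of-range numbers."""
    lines = context.splitlines()
    return {n: lines[n - 1] for n in citations if 1 <= n <= len(lines)}


# Placeholder 40-line document; only line 32 carries meaningful (paraphrased) text.
doc_lines = [f"(placeholder sentence {i})" for i in range(1, 41)]
doc_lines[31] = ("The regulation also applies to nonprofit organizations "
                 "and government agencies.")
context = "\n".join(doc_lines)

# A reviewer reads just the cited line instead of the whole document.
# The out-of-range citation 99 is ignored rather than raising an error.
excerpt = cited_lines(context, [32, 99])
for number, text in excerpt.items():
    print(f"line {number}: {text}")
```

Skipping out-of-range citations is a design choice for this sketch; a stricter checker could instead treat a citation that points outside the document as a sign that the verdict itself needs review.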

Following the demo, Koukoumidis noted that while hallucination rates may be declining across some models, the problem has not gone away, and in some cases, it is evolving. DeepSeek, for instance, is gaining traction among researchers and enterprises despite producing responses that can be misleading or ideologically charged. “It’s very concerning,” he said, “especially if these models are unintentionally—or intentionally—misleading users.”

HallOumi Is Now Available for Anyone to Use

HallOumi is now available as a fully open source tool on Hugging Face, alongside its model weights, training data, and example use cases. Oumi also offers a demo to help users test the model and explore its capabilities. That decision reflects the company’s broader mission: to democratize AI tooling that has traditionally been locked behind proprietary APIs and paywalls.

Built using the LLaMA family of models and trained on openly available data, HallOumi is a case study in what’s possible when the open source community is empowered with the right infrastructure.

“Some have said it’s hopeless to compete with OpenAI,” Koukoumidis says. “But what we’re showing—domain by domain, task by task—is that the community, given the right tools, can build solutions that are better than the black boxes. You don’t have to kneel at the feet of OpenAI, pay tribute to them, and say, ‘You’re the only ones who can build AI.’”
