Zero-Shot Prompting: The Cleanest Trick in Prompt Engineering

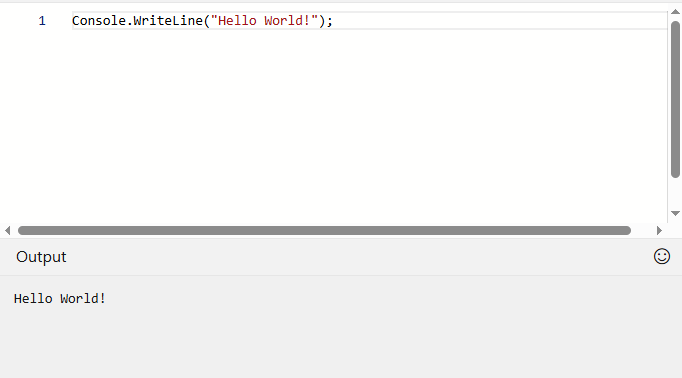


“One prompt to rule them all, one prompt to guide them, one prompt to shape them all and in the context bind them — in the land of tokens where the models lie.”

— G(andalf)PT-4o

Somewhere between over-engineering your prompts and throwing spaghetti at GPT, there’s a sweet spot — and it’s called zero-shot prompting.

It’s the prompt engineering equivalent of walking up to a whiteboard, writing a single sentence, and getting a full-blown answer without having to explain anything further. No examples. No hand-holding. Just clarity.

But how?

Let’s break it down — without sounding like an instruction manual.

What Even Is Zero-Shot Prompting?

It’s simple, really. You ask the model to do something directly — and hope it gets the gist.

Example:

Translate the following sentence into French: "I forgot my umbrella."

There’s no preamble, no task-specific fine-tuning, and no examples of English-to-French translation. Yet most modern LLMs will nail it. That’s zero-shot.
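To make the "no examples" part concrete, here is a minimal sketch of what a zero-shot request looks like as a chat payload, next to a few-shot version of the same task for contrast. It assumes the common role/content message shape used by chat-style LLM APIs such as OpenAI's Chat Completions. The helper names `zero_shot_messages` and `few_shot_messages` and the "Good morning." example pair are hypothetical, made up for illustration. No model is actually called here; the sketch only builds the message lists you would send.

```python
from typing import Dict, List, Sequence, Tuple

Message = Dict[str, str]  # assumed chat message shape: {"role": ..., "content": ...}


def zero_shot_messages(instruction: str, text: str) -> List[Message]:
    """Hypothetical helper: a zero-shot payload is just the instruction plus the input."""
    return [{"role": "user", "content": f'{instruction}: "{text}"'}]


def few_shot_messages(
    instruction: str, examples: Sequence[Tuple[str, str]], text: str
) -> List[Message]:
    """Hypothetical helper, for contrast: the same task preceded by worked examples."""
    messages: List[Message] = []
    for source, target in examples:
        # Each example is shown as a user request followed by the "ideal" answer.
        messages.append({"role": "user", "content": f'{instruction}: "{source}"'})
        messages.append({"role": "assistant", "content": target})
    # The real request comes last, after all the examples.
    messages.append({"role": "user", "content": f'{instruction}: "{text}"'})
    return messages


if __name__ == "__main__":
    task = "Translate the following sentence into French"
    print(zero_shot_messages(task, "I forgot my umbrella."))
    # [{'role': 'user', 'content': 'Translate the following sentence into French: "I forgot my umbrella."'}]
    print(len(few_shot_messages(task, [("Good morning.", "Bonjour.")], "I forgot my umbrella.")))
    # 3
```

The zero-shot payload is a single message; everything the model needs to "get the gist" has to come from the instruction itself and from what it absorbed during training.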

The magic? These models have already seen enough training data to “understand” what translating means — or at least fake it really well.

It’s like asking a very smart intern to improvise a task they’ve never explicitly done — but have read about thousands of times.
