First Open Large Language Model for Kazakh Language Achieves State-of-the-Art Performance

This is a Plain English Papers summary of a research paper called First Open Large Language Model for Kazakh Language Achieves State-of-the-Art Performance. If you like this kind of analysis, you should join AImodels.fyi or follow us on Twitter.

Overview

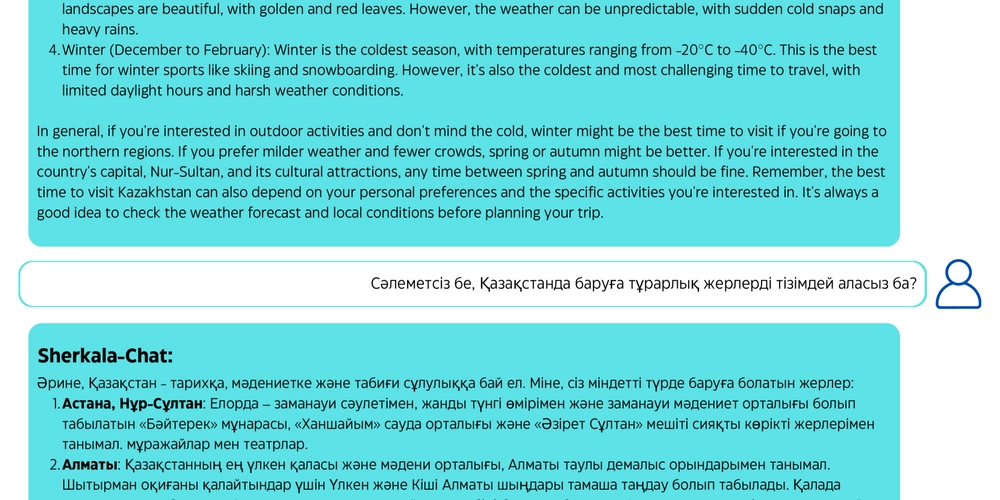

- Llama-3.1-Sherkala-8B-Chat is a language model specifically designed for Kazakh

- Built on Meta's Llama-3.1-8B foundation model through continued pretraining

- Trained on 19.5B tokens of high-quality Kazakh text data

- Features instruction tuning using a Kazakh-specific dataset

- Outperforms other models on Kazakh language tasks

- Released under an open license for research and commercial use

Plain English Explanation

The researchers created a new language model called Llama-3.1-Sherkala-8B-Chat that can understand and generate text in Kazakh, a language spoken by around 20 million people worldwide. Instead of building a model from scratch, they took Meta's existing Llama-3.1-8B model and co...
