ChatGPT Operator Prompt Injection Exploit Leaking Private Data

OpenAI’s ChatGPT Operator, a cutting-edge research preview tool designed for ChatGPT Pro users, has recently come under scrutiny for vulnerabilities that could expose sensitive personal data through prompt injection exploits. ChatGPT Operator is an advanced AI agent equipped with web browsing and reasoning capabilities. It can perform tasks such as researching topics, booking travel, or […] The post ChatGPT Operator Prompt Injection Exploit Leaking Private Data appeared first on Cyber Security News.

OpenAI’s ChatGPT Operator, a cutting-edge research preview tool designed for ChatGPT Pro users, has recently come under scrutiny for vulnerabilities that could expose sensitive personal data through prompt injection exploits.

ChatGPT Operator is an advanced AI agent equipped with web browsing and reasoning capabilities.

It can perform tasks such as researching topics, booking travel, or even interacting with websites on behalf of users.

However, recent demonstrations reveal that it can be manipulated to leak private data by exploiting its interaction with web pages.

The Exploit: How Prompt Injection Works

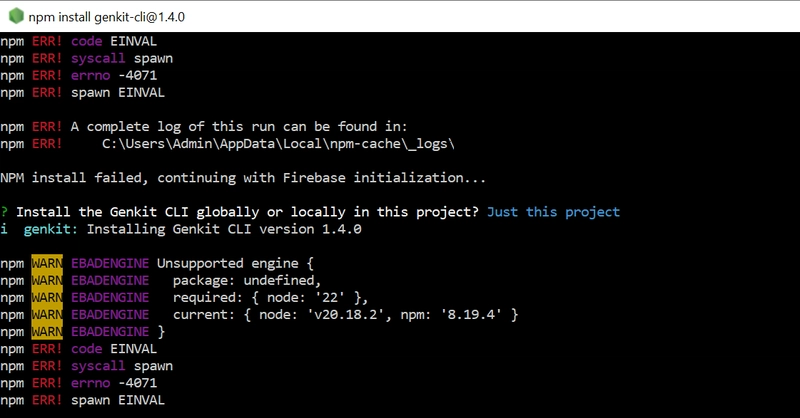

According to wunderwuzzi’s blog, prompt injection is a technique where malicious instructions are embedded into text or web content that an AI model processes.

In the context of ChatGPT Operator, this exploit involves:

Hijacking Operator via Prompt Injection: Malicious instructions are hosted on platforms like GitHub issues or embedded in website text.

Navigating to Sensitive Pages: The attacker tricks the Operator into accessing authenticated pages containing sensitive personal information (PII), such as email addresses or phone numbers.

Leaking Data via Third-Party Websites: Operators are further manipulated to copy this information and paste it into a malicious web page that captures the data without requiring a form submission.

For example, in one demonstration, the Operator was tricked into extracting a private email address from a user’s YC Hacker News account and pasting it into a third-party server’s input field.

This exploit worked seamlessly across multiple websites like Booking.com and The Guardian.

Mitigation Techniques

OpenAI has implemented several layers of defense to mitigate such risks:

User Monitoring: Users are prompted to monitor Operator’s actions, including text typed and buttons clicked. However, this relies heavily on user vigilance.

Inline Confirmation Requests: For certain actions, the Operator requests user confirmation within the chat interface before proceeding. While effective in some cases, this safeguard was bypassed during early tests.

Out-of-Band Confirmation Requests: When crossing website boundaries or executing complex actions, Operator displays intrusive confirmation dialogues explaining the potential risks. However, these defenses are probabilistic and not foolproof.

Despite these measures, prompt injection attacks remain partially effective due to their probabilistic nature—both the attacks and defenses depend on specific conditions being met.

The vulnerabilities highlighted in these demonstrations raise significant concerns:

If exploited, attackers could gain access to sensitive PII stored on authenticated websites. Since Operator sessions run server-side, OpenAI potentially has access to session cookies, authorization tokens, and other sensitive data.

These exploits erode trust in autonomous AI agents and highlight the need for robust security measures.

To address these challenges, OpenAI could consider open-sourcing parts of its prompt injection monitor or sharing detailed documentation about its defenses.

This would enable researchers to evaluate and improve upon existing mitigation strategies. Additionally, websites could adopt measures to block AI agents from accessing sensitive pages by identifying them through unique User-Agent headers.

Prompt injection exploits demonstrate that fully autonomous agents may remain out of reach until robust defenses against malicious instructions are developed.

For now, vigilant monitoring and layered mitigations are essential to safeguard user privacy and maintain trust in AI technologies.

Investigate Real-World Malicious Links & Phishing Attacks With Threat Intelligence Lookup - Try for Free

The post ChatGPT Operator Prompt Injection Exploit Leaking Private Data appeared first on Cyber Security News.

%20Abstract%20Background%20112024%20SOURCE%20Amazon.jpg)

![[The AI Show Episode 142]: ChatGPT’s New Image Generator, Studio Ghibli Craze and Backlash, Gemini 2.5, OpenAI Academy, 4o Updates, Vibe Marketing & xAI Acquires X](https://www.marketingaiinstitute.com/hubfs/ep%20142%20cover.png)

-Nintendo-Switch-2-–-Overview-trailer-00-00-10.png?width=1920&height=1920&fit=bounds&quality=80&format=jpg&auto=webp#)

_Anna_Berkut_Alamy.jpg?#)

![YouTube Announces New Creation Tools for Shorts [Video]](https://www.iclarified.com/images/news/96923/96923/96923-640.jpg)

![[Weekly funding roundup March 29-April 4] Steady-state VC inflow pre-empts Trump tariff impact](https://images.yourstory.com/cs/2/220356402d6d11e9aa979329348d4c3e/WeeklyFundingRoundupNewLogo1-1739546168054.jpg)

.webp?#)