New Memory-Based Neural Network Activation Cuts Computing Costs by 30%

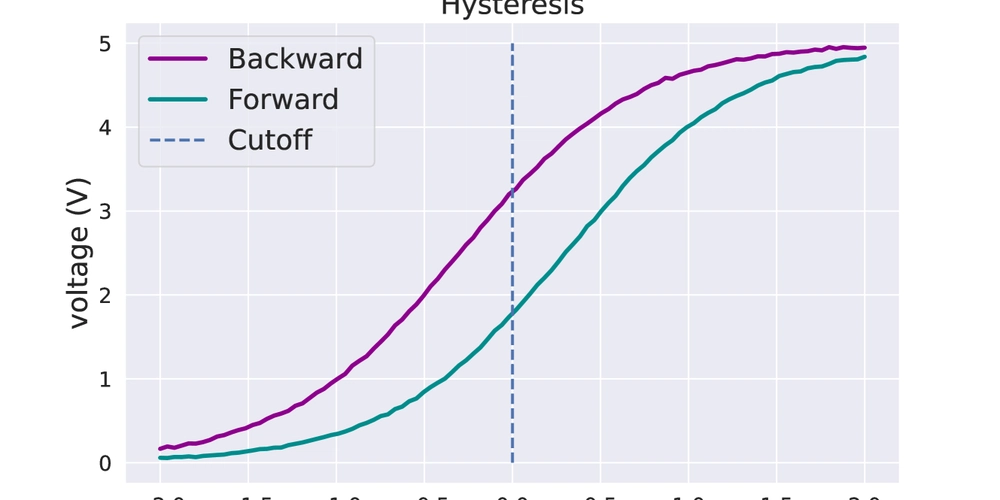

This is a Plain English Papers summary of a research paper called New Memory-Based Neural Network Activation Cuts Computing Costs by 30%. If you like this kind of analysis, you should join AImodels.fyi or follow us on Twitter.

Overview

- Introduces HeLU (Hysteresis Linear Unit), a new activation function for neural networks

- Achieves better inference efficiency compared to ReLU

- Shows improved performance on computer vision tasks

- Reduces computational costs while maintaining accuracy

- Demonstrates compatibility with existing neural network architectures

Plain English Explanation

Think of a neural network as a chain of mathematical operations that helps a computer recognize patterns. At each step, the network must decide what information to pass forward; this is the job of an activation function. The new [Hysteresis Linear Unit (HeLU)](https://aimodels.fy...
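
To make the idea of "deciding what to pass forward" concrete, here is a minimal sketch of ReLU, the baseline activation that the overview compares HeLU against. It is only an illustration: the input values are made up, and the helper name `apply_activation` is hypothetical. It does not implement HeLU, whose definition is not given in this summary.

```python
def relu(x: float) -> float:
    """Pass positive values through unchanged; replace everything else with 0."""
    return x if x > 0.0 else 0.0


def apply_activation(values: list[float]) -> list[float]:
    """Apply ReLU element-wise to one layer's pre-activation values."""
    return [relu(v) for v in values]


# Hypothetical pre-activation values for a single layer (illustrative only).
pre_activations = [-2.0, -0.5, 0.0, 0.5, 3.0]
outputs = apply_activation(pre_activations)
print(outputs)  # [0.0, 0.0, 0.0, 0.5, 3.0]
```

Negative and zero inputs are blocked, while positive inputs pass through unchanged. This gating is the "decision" an activation function makes at each step.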
