Build a RAG Chatbot with LangChain, Milvus, GPT-4o mini, and text-embedding-3-large


Retrieval-Augmented Generation (RAG) improves GenAI applications, especially conversational ones, by combining pre-trained large language models (LLMs) like OpenAI's GPT with external knowledge sources stored in vector databases such as Milvus and Zilliz Cloud. Retrieving relevant documents at query time and passing them to the model as context allows for more accurate, contextually relevant, and up-to-date responses.

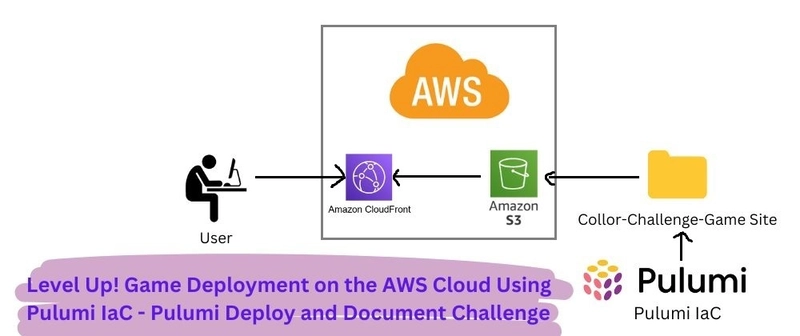
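To make the retrieve-then-generate idea concrete, here is a minimal sketch that uses only the Python standard library. The three-dimensional vectors, the `toy_corpus` texts, and the helper names are made up for illustration; in the real pipeline the vectors come from an embedding model and the search runs inside the vector database.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical (text, embedding) pairs standing in for an indexed knowledge base.
toy_corpus = [
    ("Milvus stores and searches vector embeddings.", [0.9, 0.1, 0.0]),
    ("LangChain orchestrates LLM pipelines.", [0.1, 0.9, 0.0]),
    ("Bananas are rich in potassium.", [0.0, 0.1, 0.9]),
]


def retrieve_top_k(query_vector, corpus, k=2):
    """Return the k texts whose vectors are most similar to the query."""
    ranked = sorted(
        corpus,
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


def build_prompt(question, context_texts):
    """Augment the question with retrieved context before it goes to the LLM."""
    context = "\n".join(context_texts)
    return f"Context:\n{context}\n\nQuestion: {question}"


# Made-up query embedding that points roughly toward the Milvus text.
top = retrieve_top_k([0.8, 0.2, 0.0], toy_corpus, k=2)
prompt_text = build_prompt("What does Milvus do?", top)
print(prompt_text)
```

The unrelated text about bananas never reaches the prompt, which is the point of retrieval: the model only sees context that is close to the question in embedding space.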

This tutorial shows you how to build a simple RAG chatbot in Python using the following components:

- LangChain: An open-source framework that helps you orchestrate the interaction between LLMs, vector stores, embedding models, and other components, making it easier to assemble a RAG pipeline.

- Milvus: An open-source vector database optimized to store, index, and search large-scale vector embeddings efficiently, well suited to use cases like RAG, semantic search, and recommender systems.

- GPT-4o mini: A compact, high-performance large language model developed by OpenAI.

- text-embedding-3-large: OpenAI's text embedding model, which generates embeddings with 3072 dimensions by default and is designed for tasks like semantic search and similarity matching.

By the end of this tutorial, you’ll have a functional chatbot capable of answering questions based on a custom knowledge base.

Note: This tutorial uses proprietary OpenAI models, so make sure you have an OpenAI API key ready before you start.

Step 1: Install and Set Up LangChain

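Install LangChain and related modules. The `beautifulsoup4` package provides the `bs4` module that the document loader in Step 5 relies on:

    %pip install --quiet --upgrade langchain-text-splitters langchain-community langgraph beautifulsoup4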

Step 2: Install and Set Up GPT-4o mini

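Install the OpenAI integration package:

    pip install -qU langchain-openai

Then provide your API key and create the chat model:

    import getpass
    import os

    # Prompt for the key only if it is not already set in the environment
    if not os.environ.get("OPENAI_API_KEY"):
        os.environ["OPENAI_API_KEY"] = getpass.getpass("Enter API key for OpenAI: ")

    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(model="gpt-4o-mini")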
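Steps 2 and 3 both read the same `OPENAI_API_KEY` variable, so the prompt-if-missing logic can be factored into one helper. The sketch below is one way to do that; `ensure_api_key`, `fake_prompt`, and the `DEMO_API_KEY` variable are hypothetical names used so the example runs without user input and without touching your real key.

```python
import os


def ensure_api_key(name, ask):
    """Return environment variable `name`, asking for a value only if it is unset.

    `ask` is any callable that takes a prompt string and returns the key,
    for example getpass.getpass.
    """
    if not os.environ.get(name):
        os.environ[name] = ask(f"Enter API key for {name}: ")
    return os.environ[name]


# Stand-in for getpass.getpass that records how often it was called.
calls = []


def fake_prompt(message):
    calls.append(message)
    return "sk-demo"


os.environ.pop("DEMO_API_KEY", None)  # start from a clean state
first = ensure_api_key("DEMO_API_KEY", fake_prompt)
second = ensure_api_key("DEMO_API_KEY", fake_prompt)  # already set, no second prompt
print(first, second, len(calls))
```

In the tutorial itself you would call `ensure_api_key("OPENAI_API_KEY", getpass.getpass)` once, before creating the chat model or the embedding model.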

Step 3: Install and Set Up text-embedding-3-large

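This step uses the same `langchain-openai` package and API key as Step 2. The install command and key prompt are repeated so that the step can run on its own:

    pip install -qU langchain-openai

Then create the embedding model:

    import getpass
    import os

    # Prompt for the key only if it is not already set in the environment
    if not os.environ.get("OPENAI_API_KEY"):
        os.environ["OPENAI_API_KEY"] = getpass.getpass("Enter API key for OpenAI: ")

    from langchain_openai import OpenAIEmbeddings

    embeddings = OpenAIEmbeddings(model="text-embedding-3-large")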

Step 4: Install and Set Up Milvus

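Install the Milvus integration package:

    pip install -qU langchain-milvus

Then create a vector store backed by a local database file:

    from langchain_milvus import Milvus

    vector_store = Milvus(
        connection_args={"uri": "./milvus.db"},  # local file, served by Milvus Lite
        embedding_function=embeddings,           # embedding model from Step 3
        auto_id=True,                            # let Milvus generate primary keys
        drop_old=True,                           # drop any existing collection with the same name
    )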

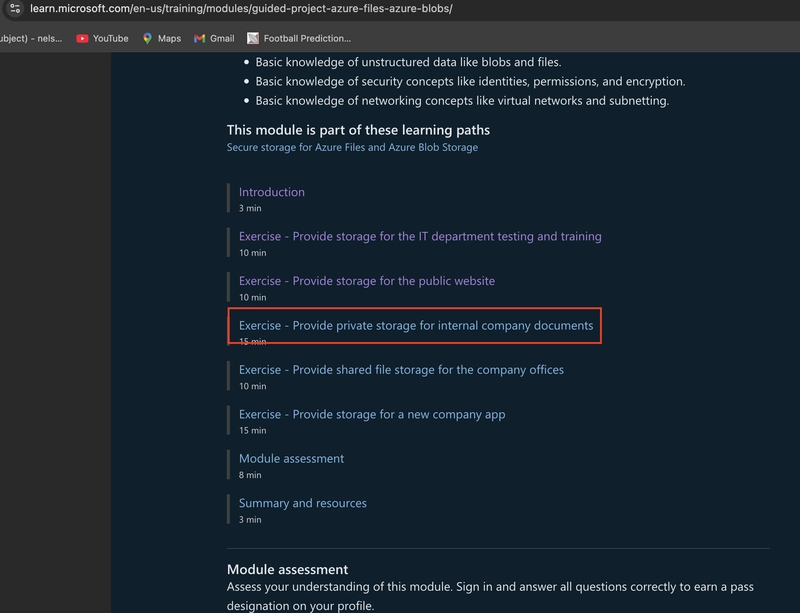
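The `auto_id` and `drop_old` flags are easy to gloss over. As a rough mental model, `auto_id=True` lets the store assign primary keys, and `drop_old=True` discards any existing collection of the same name so that each run starts empty instead of indexing the same chunks twice. The toy class below mimics only that behaviour in memory; `ToyVectorStore` and `_collections` are hypothetical names, and none of this reflects how Milvus is implemented.

```python
import itertools

# Module-level dict standing in for collections persisted in a database file.
_collections = {}


class ToyVectorStore:
    """In-memory stand-in that illustrates auto_id and drop_old only."""

    def __init__(self, collection_name, auto_id=True, drop_old=False):
        if drop_old:
            # Discard any existing collection with this name and start empty.
            _collections.pop(collection_name, None)
        self.rows = _collections.setdefault(collection_name, {})
        self.auto_id = auto_id
        self._next_id = itertools.count(len(self.rows))

    def add(self, vector, text, row_id=None):
        if self.auto_id:
            row_id = next(self._next_id)  # the store assigns the primary key
        elif row_id is None:
            raise ValueError("row_id is required when auto_id is False")
        self.rows[row_id] = (vector, text)
        return row_id


store = ToyVectorStore("docs", drop_old=True)
first_id = store.add([0.1, 0.2], "first chunk")
second_id = store.add([0.3, 0.4], "second chunk")

reopened = ToyVectorStore("docs", drop_old=False)  # sees the two existing rows
rows_after_reopen = len(reopened.rows)

fresh = ToyVectorStore("docs", drop_old=True)  # starts from scratch
rows_after_drop = len(fresh.rows)

print(first_id, second_id, rows_after_reopen, rows_after_drop)
```

For a tutorial that you rerun often, starting empty is convenient. For a knowledge base you want to keep between runs, you would likely set `drop_old=False`.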

Step 5: Build a RAG Chatbot

Now that all the components are set up, let's build a simple chatbot. We'll use the Milvus overview page as a private knowledge base. You can replace it with your own dataset to customize your RAG chatbot.

    import bs4
    from langchain import hub
    from langchain_community.document_loaders import WebBaseLoader
    from langchain_core.documents import Document
    from langchain_text_splitters import RecursiveCharacterTextSplitter
    from langgraph.graph import START, StateGraph
    from typing_extensions import List, TypedDict

    # Load the Milvus overview page, keeping only the main article content
    loader = WebBaseLoader(
        web_paths=("https://milvus.io/docs/overview.md",),
        bs_kwargs=dict(
            parse_only=bs4.SoupStrainer(
                class_="doc-style doc-post-content"
            )
        ),
    )
    docs = loader.load()

    # Split the page into chunks of up to 1000 characters with a 200-character overlap
    text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=200)
    all_splits = text_splitter.split_documents(docs)

    # Index chunks: embed each one and store it in Milvus
    _ = vector_store.add_documents(documents=all_splits)

    # Define prompt for question-answering
    prompt = hub.pull("rlm/rag-prompt")


    # Define state for application
    class State(TypedDict):
        question: str
        context: List[Document]
        answer: str


    # Define application steps
    def retrieve(state: State):
        # Find the chunks most similar to the question
        retrieved_docs = vector_store.similarity_search(state["question"])
        return {"context": retrieved_docs}


    def generate(state: State):
        # Join the retrieved chunks and pass them to the LLM along with the question
        docs_content = "\n\n".join(doc.page_content for doc in state["context"])
        messages = prompt.invoke({"question": state["question"], "context": docs_content})
        response = llm.invoke(messages)
        return {"answer": response.content}


    # Compile application: START -> retrieve -> generate
    graph_builder = StateGraph(State).add_sequence([retrieve, generate])
    graph_builder.add_edge(START, "retrieve")
    graph = graph_builder.compile()
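The splitter above is configured with `chunk_size=1000` and `chunk_overlap=200`. To show what the overlap does, here is a simplified sketch that cuts fixed-width character windows. It is not how `RecursiveCharacterTextSplitter` works internally: that class prefers to split on separators such as paragraph and line breaks and treats `chunk_size` as an upper bound. The function name `split_with_overlap` is made up for this example.

```python
def split_with_overlap(text, chunk_size, chunk_overlap):
    """Cut text into fixed-width windows; neighbours share `chunk_overlap` characters."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks


sample = "abcdefghijklmnopqrstuvwxyz"
chunks = split_with_overlap(sample, chunk_size=10, chunk_overlap=4)
print(chunks)
# → ['abcdefghij', 'ghijklmnop', 'mnopqrstuv', 'stuvwxyz']
```

Each chunk repeats the last four characters of the previous one. In the tutorial's setting, that repetition means a sentence that straddles a chunk boundary is still likely to appear whole in at least one chunk.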

Test the Chatbot

You've built your own chatbot. Let's ask it a question.

    response = graph.invoke({"question": "What data types does Milvus support?"})
    print(response["answer"])

Example Output

Milvus supports various data types including sparse vectors, binary vectors, JSON, and arrays. Additionally, it handles common numerical and character types, making it versatile for different data modeling needs. This allows users to manage unstructured or multi-modal data efficiently.
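Each step in the graph returns only the keys it changes (`context` from `retrieve`, `answer` from `generate`), and those partial updates are merged into the shared state. The sketch below imitates that merge for a linear sequence using plain dictionaries. LangGraph does considerably more than this, and `run_sequence`, `toy_retrieve`, and `toy_generate` are hypothetical stand-ins that make no network calls.

```python
def run_sequence(initial_state, steps):
    """Run steps in order; each returns a partial update merged into the state."""
    state = dict(initial_state)
    for step in steps:
        state.update(step(state))
    return state


def toy_retrieve(state):
    # Stand-in for a vector search: returns a fixed, made-up context.
    return {"context": ["Milvus is a vector database."]}


def toy_generate(state):
    # Stand-in for the LLM call: reports how many context snippets it saw.
    count = len(state["context"])
    return {"answer": f"Answered {state['question']!r} using {count} snippet(s)."}


final_state = run_sequence({"question": "What is Milvus?"}, [toy_retrieve, toy_generate])
print(final_state["answer"])
# → Answered 'What is Milvus?' using 1 snippet(s).
```

This is why `graph.invoke` in the tutorial returns a dictionary containing `question`, `context`, and `answer` together, and why the example reads `response["answer"]`.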

What Have You Learned?

You've learned how to build a complete RAG pipeline, implementing vector similarity search with Milvus and contextual response generation with GPT-4o mini. Along the way, you've seen how to chunk documents for retrieval and manage application state with LangGraph, as well as practical details like setting up API authentication and configuring a vector store. This tutorial covered only the basics. You can extend this foundation by using different document sources, embedding models, or LLMs to create more sophisticated AI applications.
