4o Image Gen - Diffusion/Transformer Cross-over Trend?

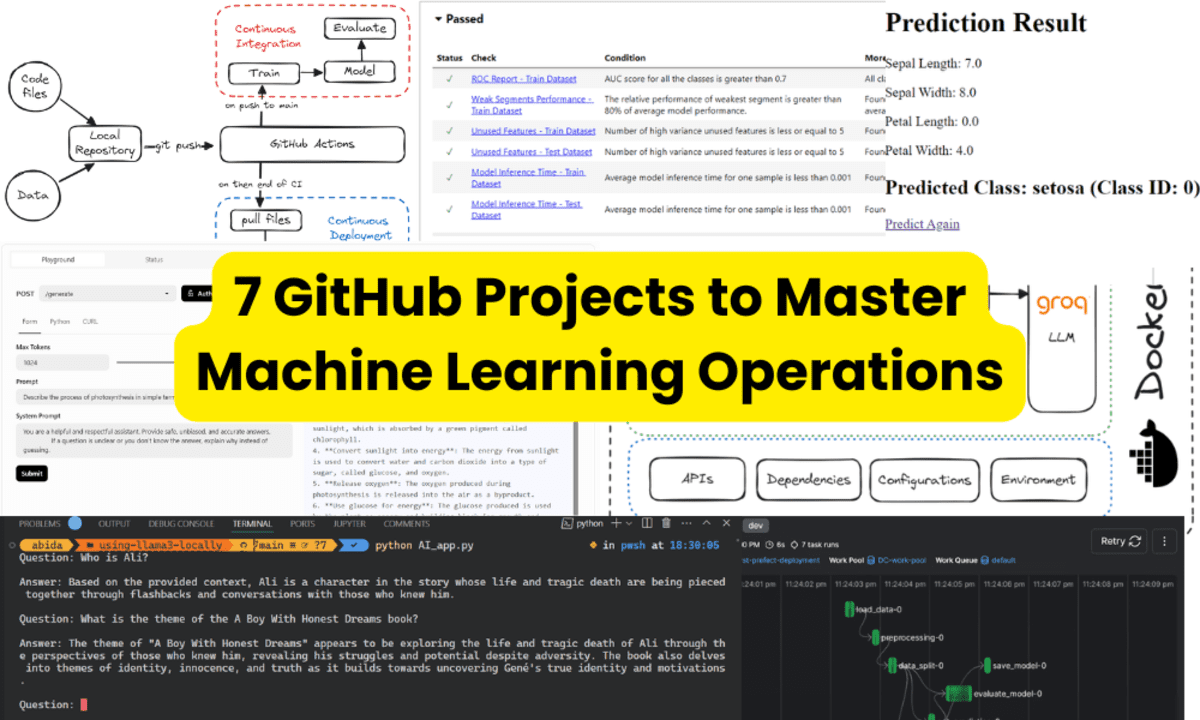


In March we saw 2 major releases (Google, OpenAI) of image generation tools that are very different from what we're used to:

- Continuity - you can now keep working with the generated picture and iterate on it (e.g., it's possible to keep the same character across frames).

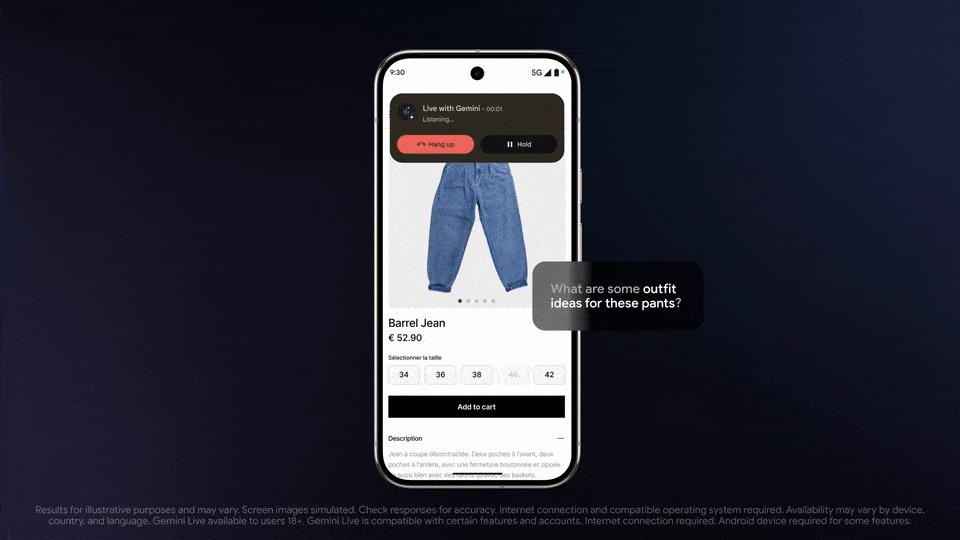

- Edits with prompts - nothing like the previous workarounds with in-painting, you can now ask to repaint/re-color an object or modify something.

- Much better text/font rendering - no more hallucinated text in non-existent languages.

- Instruction following - remember the "no elephant in the picture" meme? It's now fixed (as well as plenty of other quirks) :)

Previously, image gen was the domain of diffusion models. That's how Midjourney, Flux, DALL-E, and Stable Diffusion work. With them, every prompt is a one-off generation, i.e. there's no concept of the dialog or context that we are used to in chatbots. Chatbots that generated images called external diffusion models to create the pictures. As for the chatbots/LLMs themselves, those were dominated by decoder-only autoregressive transformer models.

To recap, since the Gen AI revolution unfolded in 2021-2022, we've seen 2 different technological concepts, each with its own distinct area:

- Transformer models dominated LLMs. They are based on next-token prediction, producing a sequence of tokens one at a time.

- Diffusion models reigned in image gen. They learn by adding noise to images and removing it again, and they generate by denoising the whole image, all pixels at once.
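To make the contrast concrete, here is a minimal toy sketch of the two generation loops. Nothing in it is a real model: `toy_next_token`, `toy_denoise`, and `TARGET` are hypothetical stand-ins for trained networks, chosen only so the control flow is visible. The point is the shape of the loop: one token per step versus every pixel on every step.

```python
import random


def autoregressive_generate(next_token, prompt, n_new):
    """Produce tokens one at a time; each step sees everything generated so far."""
    tokens = list(prompt)
    for _ in range(n_new):
        tokens.append(next_token(tokens))
    return tokens


def diffusion_generate(denoise_step, n_pixels, n_steps, rng):
    """Start from pure noise and refine all pixels together on every step."""
    image = [rng.gauss(0.0, 1.0) for _ in range(n_pixels)]
    for step in range(n_steps):
        image = denoise_step(image, step, n_steps)
    return image


# --- Hypothetical stand-ins for trained models (assumptions, not real APIs) ---

def toy_next_token(tokens):
    # "Predicts" the next digit after the last one.
    return (tokens[-1] + 1) % 10


TARGET = [0.2, 0.8, 0.5, 0.1]  # the "clean image" the toy denoiser pulls toward


def toy_denoise(image, step, n_steps):
    # Moves every pixel halfway toward the target in a single pass.
    return [x + 0.5 * (t - x) for x, t in zip(image, TARGET)]


print(autoregressive_generate(toy_next_token, [7], 5))  # [7, 8, 9, 0, 1, 2]
print(diffusion_generate(toy_denoise, len(TARGET), 20, random.Random(0)))
```

In the first loop the output grows by one element per model call; in the second the output has its full size from the start and only gets cleaner.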

In the March releases of Gemini 2.0 Flash and 4o image gen, both companies say they now do native image generation. OpenAI also stated that their model is autoregressive. While not many details have been shared, it's reasonable to assume that the new techniques build upon transformer models, which we've already seen repurposed for image inputs in multi-modal LLMs.

If we go 1 month back, to February 2025, there were 2 noteworthy releases:

- Inception Labs introduced their Mercury Coder Small LLM, which demonstrates performance similar to GPT-4o (i.e. how smart it is) at 10x the speed (generating 800 tokens/second).

- A team from China introduced LLaDA - an open-source 8B model rivalling Llama 3 8B.

What's so special about these models? They apply diffusion, the same concept previously used for image generation.

In other words, these 2 models have brought diffusion (apparently with some tweaks and clever modifications) into language modeling. And they did it while matching small LLMs' performance and promising new capabilities, such as much faster generation!
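One intuition for why diffusion-style text generation could be faster is the number of model calls. The sketch below is a toy illustration of that idea only, not how Mercury or LLaDA actually work: it assumes a masking-style scheme where the sequence starts fully masked and several positions get filled in on each step. `toy_predict_all` and `SENTENCE` are hypothetical stand-ins for a trained model, and positions are committed at random here, whereas a real system would presumably use some smarter rule.

```python
import math
import random

MASK = "_"


def diffusion_text_generate(predict_all, length, n_steps, rng):
    """Start fully masked; each step predicts all positions and commits a share of them."""
    tokens = [MASK] * length
    calls = 0
    for step in range(n_steps):
        masked = [i for i, t in enumerate(tokens) if t == MASK]
        if not masked:
            break
        guesses = predict_all(tokens)  # one model call covers every position
        calls += 1
        # Spread the remaining masked positions evenly over the remaining steps.
        k = math.ceil(len(masked) / (n_steps - step))
        for i in rng.sample(masked, k):
            tokens[i] = guesses[i]
    return tokens, calls


# --- Hypothetical stand-in for a trained model (an assumption, not a real API) ---

SENTENCE = "the quick brown fox jumps over the dog".split()


def toy_predict_all(tokens):
    # Always "knows" the right word for every position.
    return list(SENTENCE)


tokens, calls = diffusion_text_generate(
    toy_predict_all, len(SENTENCE), n_steps=4, rng=random.Random(0)
)
print(" ".join(tokens))
print(f"model calls: {calls} (a one-token-at-a-time loop would need {len(SENTENCE)})")
```

Here 8 tokens come out of 4 model calls instead of 8. Whether that translates into real-world speedups depends on how expensive each call is and how many steps are needed for good quality.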

... and in March we saw the opposite move, LLMs stepping on the turf of image gen. Let's see how this cross-over unfolds!

P.S.

I was very excited about diffusion models back in February - applying diffusion to LLMs and building chat models, an area where transformers were uncontested, seemed like something very new and unconventional. The other major architectural concept applied to chatbots was the Mamba architecture. Yet it doesn't seem that Mamba models have gained any traction compared to their transformer counterparts. Hoping for the best, and for attention to shift away from transformers towards exploring newer LLM architectures.
