"Unlocking Neural Networks: The Power of Requirements-Based Testing"

In the rapidly evolving landscape of artificial intelligence, neural networks stand as a beacon of innovation, yet they often come shrouded in complexity and uncertainty. Have you ever felt overwhelmed by the intricacies of ensuring that these powerful models meet their intended requirements? You're not alone. Many developers and engineers grapple with the challenge of validating neural networks to guarantee they perform accurately and reliably in real-world applications. Enter Requirements-Based Testing—a transformative approach that can unlock the full potential of your AI systems while mitigating risks associated with deployment failures. In this blog post, we will delve into what Requirements-Based Testing entails, explore its myriad benefits for AI development, and address common challenges faced during implementation along with practical solutions. By adopting best practices tailored for effective testing, you'll be equipped to navigate the complexities of neural network validation confidently. So if you're ready to elevate your understanding and application of testing methodologies within AI frameworks, join us on this enlightening journey toward mastering Requirements-Based Testing—your key to unlocking robust neural network performance!

Understanding Neural Networks

Neural networks, particularly deep neural networks (DNNs), are pivotal in machine learning and artificial intelligence. Their architecture is loosely inspired by the brain's interconnected neurons, which lets them learn patterns from large datasets. The rbt4dnn method emphasizes the necessity of rigorous testing for DNNs to ensure reliability and safety in critical applications. By employing structured natural language requirements, this approach generates test suites that align with system specifications. This methodology not only addresses the challenges of formalizing functional requirements but also leverages generative models for effective fault detection. Evaluating these networks against diverse inputs is crucial because it exposes weaknesses in robustness and accuracy that a narrow test set would miss.

Key Components of Testing Neural Networks

The effectiveness of testing methodologies like rbt4dnn lies in their ability to produce realistic test inputs through semantic feature spaces and glossary terms tailored for specific datasets such as MNIST or CelebA-HQ. Additionally, fine-tuning pre-trained models can improve performance while keeping energy use in check, a growing concern within telecommunications, where neural circuit policies can significantly reduce computational overhead without sacrificing accuracy. These advancements underscore a broader trend towards integrating sophisticated testing frameworks into machine learning workflows, ultimately enhancing both functionality and user trust in AI systems.

What is Requirements-Based Testing?

Requirements-Based Testing (RBT) is a systematic approach to software testing that ensures the developed system meets its specified requirements. In the context of deep neural networks (DNNs), RBT focuses on generating test suites derived from structured natural language requirements, enhancing reliability and safety in critical applications. The rbt4dnn method exemplifies this by utilizing generative models to create diverse test inputs aligned with functional specifications, addressing gaps in traditional testing methodologies for machine learning systems.
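To make the idea concrete, here is a minimal sketch of a requirement expressed as a precondition on the input and a postcondition on the model's output. It is not the rbt4dnn implementation: the `Requirement` class, the `toy_classifier`, and the feature names `has_top_bar` and `has_loop` are all hypothetical, and the hand-written feature dictionaries stand in for inputs that a generative model would produce.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Features = Dict[str, float]  # hypothetical semantic features of one input


@dataclass
class Requirement:
    """A requirement as a precondition on inputs and a postcondition on outputs."""
    name: str
    precondition: Callable[[Features], bool]
    postcondition: Callable[[str], bool]


def toy_classifier(x: Features) -> str:
    """Hypothetical stand-in for a DNN; its threshold is deliberately too strict."""
    return "7" if x["has_top_bar"] > 0.7 and x["has_loop"] < 0.5 else "0"


def run_requirement(req: Requirement,
                    model: Callable[[Features], str],
                    inputs: List[Features]) -> List[Features]:
    """Return inputs that satisfy the precondition but violate the postcondition."""
    failures = []
    for x in inputs:
        if req.precondition(x) and not req.postcondition(model(x)):
            failures.append(x)
    return failures


req = Requirement(
    name="A digit with a top bar and no loop shall be labelled 7",
    precondition=lambda x: x["has_top_bar"] > 0.5 and x["has_loop"] < 0.5,
    postcondition=lambda label: label == "7",
)

# Hand-written stand-ins for generated test inputs.
inputs = [
    {"has_top_bar": 0.9, "has_loop": 0.1},
    {"has_top_bar": 0.6, "has_loop": 0.1},
    {"has_top_bar": 0.2, "has_loop": 0.8},
]

failures = run_requirement(req, toy_classifier, inputs)
print(f"{len(failures)} failing input(s) for: {req.name}")
```

Inputs that do not meet the precondition are skipped rather than counted as passes, so a reported failure always points back to a specific requirement.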

Key Components of RBT

The process involves defining feature-based functional requirements specific to DNN classifiers, which are crucial for accurate predictions based on semantic features. By employing glossary terms and scene graphs, RBT facilitates realistic input generation that reflects real-world scenarios. This not only aids in fault detection but also improves overall model performance through rigorous evaluation against various datasets like MNIST and CelebA-HQ. The emphasis on formalizing these requirements highlights the importance of thorough testing protocols tailored to machine learning components, ultimately ensuring enhanced reliability across applications such as autonomous driving systems and image synthesis models.
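One way to picture the role of glossary terms is as a lookup from requirement vocabulary to phrases that can condition a generative model. The sketch below is an illustration under that assumption only: the `GLOSSARY` entries, the prompt wording, and the `precondition_to_prompt` helper are invented for this example and are not taken from rbt4dnn.

```python
from typing import Dict, List

# Hypothetical glossary: requirement terms mapped to descriptive phrases.
GLOSSARY: Dict[str, str] = {
    "wearing_eyeglasses": "wearing eyeglasses",
    "smiling": "smiling",
    "black_hair": "with black hair",
}


def precondition_to_prompt(terms: List[str]) -> str:
    """Turn the glossary terms of a requirement's precondition into a text prompt."""
    unknown = [t for t in terms if t not in GLOSSARY]
    if unknown:
        # Refuse to guess: every term must be defined in the glossary first.
        raise KeyError(f"terms missing from glossary: {unknown}")
    return "a photo of a person " + ", ".join(GLOSSARY[t] for t in terms)


prompt = precondition_to_prompt(["wearing_eyeglasses", "smiling"])
print(prompt)
```

Rejecting unknown terms keeps the requirement vocabulary and the generated inputs in sync, which matters when requirements evolve during development.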

Benefits of Requirements-Based Testing in AI

Requirements-based testing (RBT) offers significant advantages for artificial intelligence, particularly in the context of deep neural networks (DNNs). By aligning test suites with structured natural language requirements, RBT enhances the reliability and safety of AI systems. This method ensures that DNNs are rigorously evaluated against predefined functional specifications, which is crucial given their complexity and potential impact on critical applications like autonomous driving.

Enhanced Fault Detection

One key benefit of RBT is its ability to improve fault detection within neural networks. Utilizing generative models to create diverse test inputs based on semantic feature spaces allows for a more comprehensive assessment of model performance. The rbt4dnn methodology showcases how realistic scenarios can be generated using glossary terms and scene graphs, leading to better identification of faults during testing phases.

Improved Reliability and Safety

Moreover, by focusing on formalized requirements tailored for machine learning classifiers, RBT facilitates accurate predictions while minimizing risks associated with erroneous outputs. This systematic approach not only strengthens system robustness but also fosters trust among users by ensuring that AI technologies operate reliably under various conditions. As ongoing research continues to refine these methodologies, the integration of RBT into AI development will likely become increasingly vital for achieving optimal performance standards across industries.

Common Challenges and Solutions

Testing deep neural networks (DNNs) presents unique challenges, particularly in aligning testing methodologies with functional requirements. One significant challenge is the difficulty in formalizing these requirements for systems that incorporate machine learning components. The rbt4dnn method addresses this by utilizing structured natural language to generate test suites tailored to specific DNN functionalities, ensuring comprehensive coverage of potential faults.

Another common issue lies in generating realistic and diverse test inputs necessary for effective fault detection. By employing generative models alongside glossary terms and scene graphs, the rbt4dnn approach enhances input variability, which is crucial for evaluating model performance across different datasets like MNIST or CelebA-HQ. This strategy not only improves accuracy but also aids in refining system requirements based on identified failures during testing phases.

Addressing Fault Detection Limitations

The integration of Neural Circuit Policies (NCPs) into energy-efficient designs further exemplifies innovative solutions within this domain. NCPs reduce energy consumption relative to traditional models such as LSTMs while maintaining computational efficiency. As researchers continue exploring sparsely structured architectures like Liquid Time-Constant Networks (LTCs), they are also improving robustness against noisy data, an essential factor when deploying DNNs in real-world applications where reliability is paramount.

By focusing on these challenges and their corresponding solutions, stakeholders can enhance the safety and effectiveness of AI systems through rigorous testing aligned with established requirements.

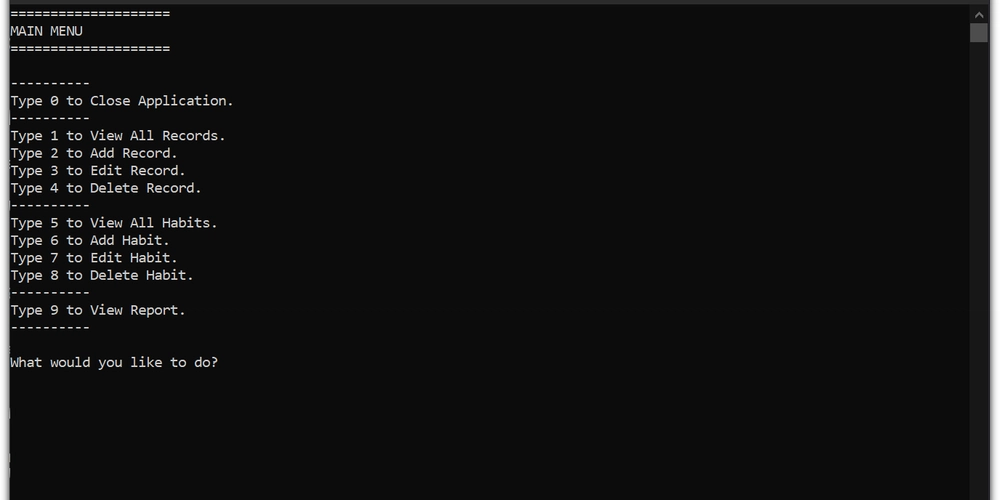

Best Practices for Effective Testing

Effective testing of deep neural networks (DNNs) is crucial to ensure their reliability and safety, especially in critical systems. One best practice involves utilizing the rbt4dnn method, which generates test suites based on structured natural language requirements. This approach ensures that tests are aligned with system specifications, enhancing fault detection capabilities. Incorporating generative models can also produce diverse and realistic test inputs, improving overall testing efficacy.

Key Strategies for Implementation

- Define Clear Requirements: Establishing well-defined functional requirements is essential for generating accurate test cases that reflect real-world scenarios.

- Utilize Generative Models: Employ generative models to create varied datasets that mimic potential operational conditions of DNNs, thereby increasing robustness against unforeseen failures.

- Iterate on Test Suites: Regularly refine and update your test suites based on feedback from previous tests or new insights gained during development phases.

By adhering to these practices, organizations can significantly enhance the effectiveness of their testing processes while ensuring compliance with safety standards in machine learning applications.
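The "iterate on test suites" practice can be sketched as a small loop: run a batch of cases, keep the failures as regression cases, then re-run them after the model changes. Everything here is a hypothetical illustration; `model_v1`, `model_v2`, and the one-number inputs are toy stand-ins for a real DNN and real generated inputs.

```python
from typing import Callable, List, Tuple

Case = Tuple[float, str]  # (input value, expected label), a toy test case


def run_suite(model: Callable[[float], str], suite: List[Case]) -> List[Case]:
    """Return the cases whose predicted label differs from the expected one."""
    return [case for case in suite if model(case[0]) != case[1]]


def merge_regressions(suite: List[Case], failures: List[Case]) -> List[Case]:
    """Append failing cases that are not already in the suite, preserving order."""
    seen = set(suite)
    merged = list(suite)
    for case in failures:
        if case not in seen:
            merged.append(case)
            seen.add(case)
    return merged


def model_v1(x: float) -> str:
    """Toy model whose decision threshold is too strict."""
    return "pos" if x > 0.7 else "neg"


def model_v2(x: float) -> str:
    """Toy model after a fix that matches the requirement (x > 0.5 means pos)."""
    return "pos" if x > 0.5 else "neg"


regression_suite: List[Case] = [(0.9, "pos")]
new_batch: List[Case] = [(0.9, "pos"), (0.6, "pos"), (0.2, "neg")]

failures_v1 = run_suite(model_v1, new_batch)
regression_suite = merge_regressions(regression_suite, failures_v1)
failures_v2 = run_suite(model_v2, regression_suite)

print(f"v1 failures: {failures_v1}, v2 failures on regression suite: {failures_v2}")
```

Keeping failed cases in a growing regression suite means each fix is checked against every fault found so far, not only the most recent batch.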

Future Trends in Neural Network Testing

The landscape of neural network testing is evolving, driven by the increasing complexity and criticality of deep learning systems. One prominent trend is the adoption of requirements-based testing methodologies, such as rbt4dnn, which leverage structured natural language to generate comprehensive test suites tailored to specific system requirements. This approach addresses a significant gap in traditional testing practices by ensuring that tests align closely with functional specifications, thereby enhancing reliability and safety.

Generative Models and Fault Detection

Generative models are becoming essential tools for creating diverse and realistic test inputs. By utilizing techniques like scene graphs and glossary terms, these models can produce varied datasets that effectively challenge neural networks during evaluation. Furthermore, advancements in fault detection mechanisms within logic circuits highlight the potential for generative approaches to identify weaknesses early in development cycles.

As machine learning continues to integrate into critical applications—such as autonomous driving—the emphasis on robust testing frameworks will only intensify. Ongoing research aims at refining these methods further while exploring their implications across various domains including telecommunications and smart contracts within blockchain technology. The future promises enhanced accuracy through continuous optimization of both generative models and formal requirement specifications.

In conclusion, the exploration of requirements-based testing in neural networks reveals its critical role in enhancing AI system reliability and performance. By understanding the intricate workings of neural networks and integrating a structured approach to testing based on specific requirements, developers can significantly mitigate risks associated with AI deployment. The benefits are manifold: improved accuracy, increased transparency, and better alignment between user expectations and actual outcomes. However, challenges such as data bias and model complexity must be addressed through innovative solutions like automated testing frameworks and continuous integration practices. Adopting best practices keeps testing thorough and effective, and keeping pace with future trends in technology will further refine these processes. Ultimately, embracing requirements-based testing not only strengthens neural network applications but also fosters trust in AI systems across various industries.

FAQs on "Unlocking Neural Networks: The Power of Requirements-Based Testing"

1. What are neural networks and how do they function?

Neural networks are computational models inspired by the human brain, designed to recognize patterns in data. They consist of interconnected nodes (neurons) organized in layers, where each connection has an associated weight that adjusts as learning occurs. By processing input data through these layers, neural networks can perform tasks such as classification, regression, and more.
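The layered processing described above can be sketched as a tiny forward pass in plain Python. The weights below are fixed, made-up numbers chosen for illustration; in a real network they would be learned from data, and a numerical library would normally replace the hand-written loops.

```python
import math
from typing import List


def dense(inputs: List[float], weights: List[List[float]], biases: List[float]) -> List[float]:
    """One fully connected layer: a weighted sum of the inputs plus a bias per neuron."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values: List[float]) -> List[float]:
    """Non-linearity that zeroes out negative activations."""
    return [max(0.0, v) for v in values]


def softmax(values: List[float]) -> List[float]:
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(values)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up weights for a 2-input, 2-hidden-neuron, 2-class network.
W1 = [[1.0, -1.0], [0.5, 0.5]]
B1 = [0.0, 0.0]
W2 = [[1.0, 0.0], [0.0, 1.0]]
B2 = [0.0, 0.0]


def forward(x: List[float]) -> List[float]:
    """Pass an input through the hidden layer and the output layer."""
    hidden = relu(dense(x, W1, B1))
    return softmax(dense(hidden, W2, B2))


probs = forward([2.0, 1.0])
predicted_class = probs.index(max(probs))
print(probs, predicted_class)
```

Training adjusts the entries of `W1`, `B1`, `W2`, and `B2` so that the predicted class matches the labels in the data; the forward pass itself stays the same.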

2. What is requirements-based testing in the context of AI?

Requirements-based testing is a software testing approach that ensures a system meets specified requirements before deployment. In AI and neural networks, this involves validating that the model's outputs align with predefined criteria or performance metrics based on user needs or business objectives.

3. What are some benefits of using requirements-based testing for neural networks?

The key benefits include improved accuracy and reliability of AI systems, enhanced compliance with regulatory standards, better alignment with user expectations, early detection of defects during development phases, and increased confidence among stakeholders regarding system performance.

4. What common challenges might arise when implementing requirements-based testing for neural networks?

Common challenges include defining clear and measurable requirements due to the complexity of AI models; managing evolving specifications throughout development; ensuring comprehensive test coverage across various scenarios; handling large datasets effectively; and addressing potential biases within training data.

5. How can organizations adopt best practices for effective testing of neural networks?

Organizations should start by establishing clear functional and non-functional requirements for their models. Implementing automated testing frameworks can streamline processes, while maintaining thorough documentation helps track changes over time. Regularly reviewing test cases against real-world scenarios will also ensure relevance as technology evolves.
