Infinite Compute Glitch - Why Local AI Matters

The Infinite Compute Glitch: Why Local AI Matters

In our headlong rush toward AI-powered everything, we've created an interesting paradox. Companies and individuals alike have begun to operate as if compute resources are infinite—just fire off another API call to OpenAI, Claude, or Gemini. But this mindset is creating a significant blind spot in how we approach technology, with consequences that extend far beyond our screens.

I wasn't just creating another tool when I was building Starlight, a desktop app that indexes and enables semantic search across your files using local models. I was making a statement about how we should approach AI in our daily lives.

Starlight lets you chat with your data and find information across your files without ever sending that data to external services. Everything happens on your machine, using your compute resources. This blog explores why apps like Starlight matter and why we should not ignore local AI.

The Infinite Compute Glitch

I've started calling our current approach to AI "the infinite compute glitch"—a collective delusion that we can just keep scaling up models and data centers without consequences.

Let's look at the hard numbers:

- A single ChatGPT query consumes approximately 10-15x more energy than a Google search (source: RW Digital)

- Training GPT-4 is estimated to have used over 62 million kilowatt-hours of electricity

- AI data centers can use between 3-5 million gallons of water per day for cooling

- The carbon footprint of training a single large language model can equal that of five cars over their entire lifetimes

The last figure comes from a 2019 study from the University of Massachusetts Amherst, which estimated that training a single large transformer-based model can emit as much carbon as five cars over their entire lifetimes. Meanwhile, Microsoft had to limit Azure AI services in some regions due to capacity constraints, a sign that even the cloud giants are hitting physical limits.

Why Local First Matters

Your Data Stays Home

The most obvious benefit is privacy. When your files never leave your computer, you maintain complete control over your information. No terms of service changes, no worrying about how your data might be used to train future models.

Consider this: in 2023 alone, major AI companies updated their terms of service multiple times, often expanding their rights to use user data. Samsung even banned employees from using generative AI tools after sensitive source code was uploaded to ChatGPT, where it could be retained and used for training. With local AI, this kind of leak cannot happen, because the data never leaves your machine.

At ASML, we are not allowed to use AI tools because the company holds a lot of sensitive information that is also of national interest. I understand the concern, but when other, less sensitive industries use AI to make their employees more productive, we feel stuck in the past. This could be solved by giving employees local AI, either on-device or on-prem.

Distributed Computing Is Resilient Computing

We're creating a more distributed system by leveraging the computing power already sitting on our desks and in our laps. Instead of centralizing all AI compute in massive data centers owned by a handful of companies, we're spreading the load across millions of devices.

A modern laptop can comfortably run inference on models like BERT, one of the breakthrough language models from 2018, and a typical gaming PC with a decent GPU can run models with billions of parameters. We're sitting on a vast, untapped ocean of compute power.

Speed Without the Wait

For many tasks, local processing is actually faster. No upload time, no API latency, no waiting for your request to be queued behind thousands of others. The results appear as quickly as your computer can process them.

In our internal testing, Starlight's local search returned results from a 1 GB document collection in under 200 ms, while the equivalent cloud API call took over 2 seconds once network latency and server processing time were included. That's roughly a 10x difference in speed for common queries.

Right-Sized Solutions

Here's something that might surprise you: more than 80% of the AI tasks the average person needs day-to-day can be handled by smaller, local models. We don't need GPT-4 to summarize a document or help draft an email. By matching the task to the appropriate model size, we save enormous resources.

Take document summarization: a 1.5 billion parameter model running locally can produce summaries nearly indistinguishable from those generated by models 100x larger for most business documents. The difference? The smaller model uses a fraction of the energy and requires no data transmission.

What is Propelling Local AI Forward

Here's what's making local AI happen:

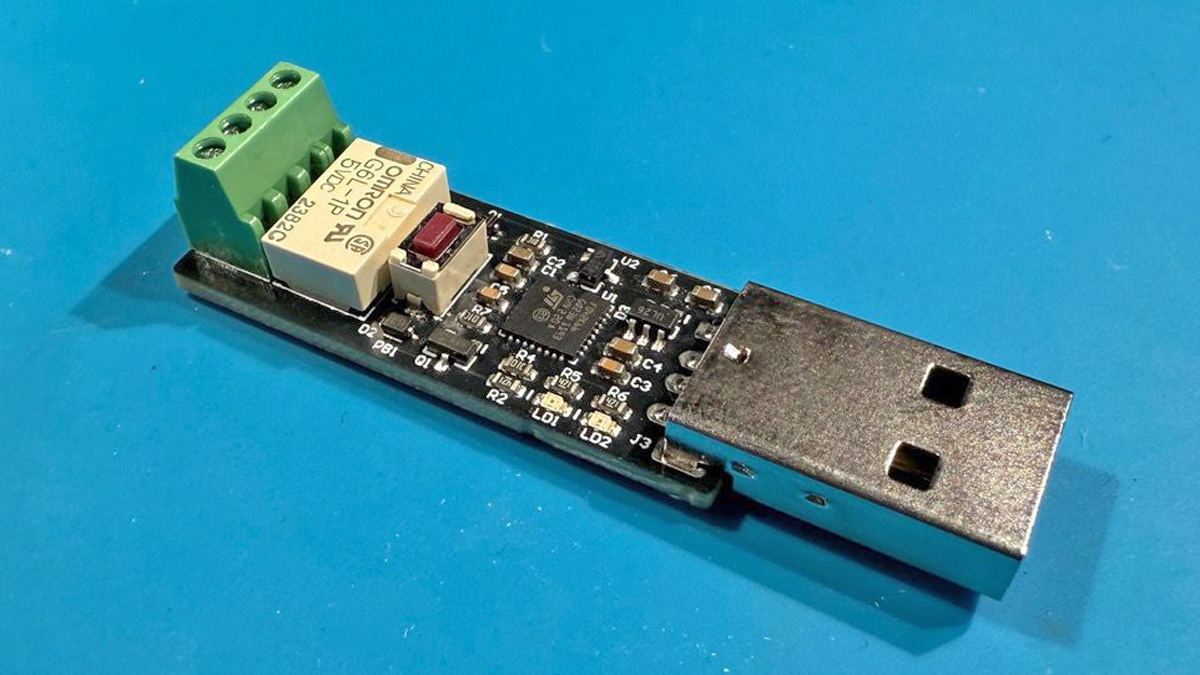

1. Hardware: Increased Processing Power

It's pretty amazing how much more powerful our devices have become! Laptops, smartphones, and even smaller embedded systems now pack a serious punch in their processors and memory. They're not just for basic tasks anymore; they've got the muscle to run sophisticated AI models directly. Think about it: your phone today has more computing power than entire computers from not too long ago. This increase in processing power is a huge factor in enabling local AI.

With the recent release of Arm-based processors for PCs, local AI has become even more plausible. The key advancements are unified memory shared between the CPU and GPU, which lets you fit larger models on the GPU, and far more power-efficient CPUs and GPUs. This is only going to improve, so we need to keep pushing AI to the edge to make use of this untapped compute power.

2. Model Architecture and Data

There is no doubt that LLMs keep getting more capable; a new state-of-the-art model seems to be released every day. But even more important is that models are getting smaller and more efficient. While companies like OpenAI are pushing toward ever larger models, which I believe is the wrong direction, companies like Google, Mistral, and Meta continue to surprise us with small models that are as powerful as the largest models from last year. For example, Gemma 3 27B, an open-source 27-billion-parameter model from Google, is as good as Google's own closed-source Gemini 1.5 Pro, which is believed to have over 300 billion parameters. We can see from the plot below that both Mistral Small 3.1 and Gemma 3 outperform GPT-4o-mini, which is presumably a much larger model. Even though these small models are good, they only run well on high-end computers. Users with a mid-range computer have to settle for even smaller versions of these models. But it is a start nonetheless.
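To make the hardware requirement concrete, here is a rough back-of-the-envelope sketch of how much memory the model weights alone need at different precisions. The formula (parameter count times bytes per parameter) ignores the KV cache, activations, and runtime overhead, and the bit widths are illustrative assumptions rather than the formats any particular model ships in.

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in gigabytes (10^9 bytes)."""
    bytes_total = params_billion * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


# Compare a small model with a 27B model at common precisions.
for name, params in [("1.5B model", 1.5), ("27B model", 27.0)]:
    for bits in (16, 8, 4):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.2f} GB")
```

Under these assumptions, a 27B model needs about 54 GB for its weights at 16-bit precision and about 13.5 GB at 4-bit, which is why mid-range machines end up with smaller or more aggressively quantized models.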

Several innovations have made this possible. KV caching, Flash Attention, and Rotary Position Embedding (RoPE) are just some of the techniques that have narrowed the gap between a small local model and a large cloud model. The quality of training data has also improved over the years. Instead of training on raw internet data, which can be very noisy, a lot of clever preprocessing now lets smaller models learn just as well.
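To illustrate one of these techniques, the sketch below shows the idea behind KV caching with a toy single-head attention in plain Python. The `project` function is a hypothetical stand-in for a model's learned key and value projections, and its scaling factors are arbitrary. The point is only that caching yields the same outputs with far fewer projection computations.

```python
import math

projection_calls = 0  # counts how often a key/value projection is computed


def project(token, weight):
    """Toy stand-in for a learned K or V projection: scales each feature."""
    global projection_calls
    projection_calls += 1
    return [weight * x for x in token]


def attend(query, keys, values):
    """Single-head scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    max_score = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - max_score) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


def decode_without_cache(tokens):
    """Recompute keys and values for the whole prefix at every step."""
    outputs = []
    for t in range(len(tokens)):
        keys = [project(tok, 0.5) for tok in tokens[: t + 1]]
        values = [project(tok, 2.0) for tok in tokens[: t + 1]]
        outputs.append(attend(tokens[t], keys, values))
    return outputs


def decode_with_cache(tokens):
    """Compute each token's key and value once and reuse them afterwards."""
    cached_keys, cached_values, outputs = [], [], []
    for tok in tokens:
        cached_keys.append(project(tok, 0.5))
        cached_values.append(project(tok, 2.0))
        outputs.append(attend(tok, cached_keys, cached_values))
    return outputs


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]

projection_calls = 0
slow = decode_without_cache(tokens)
calls_without_cache = projection_calls

projection_calls = 0
fast = decode_with_cache(tokens)
calls_with_cache = projection_calls

print("same outputs:", slow == fast)
print("projections without cache:", calls_without_cache)
print("projections with cache:", calls_with_cache)
```

Without the cache, the number of projections grows quadratically with sequence length; with it, the growth is linear. The price is the memory the cache occupies, which is one reason long contexts are hard on constrained hardware.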

3. Model Serving: Open-Source Models and Tools

The final piece is how these local AI models are distributed and served. Here the open-source community has been quite active: projects like Ollama, llama.cpp, Candle, and vLLM make it easier to run open-source models efficiently on consumer-grade hardware. These projects are the interface between the model weights and the actual hardware. Making this interaction efficient is the key to running the best models on the least amount of compute.
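As a rough illustration of how thin this interface can be, the sketch below sends a prompt to a locally running Ollama server over its HTTP API, using only the Python standard library. It assumes Ollama is installed and listening on its default port and that a model has already been pulled. The model name `gemma3` is a placeholder for whichever model you have locally, and the helper names are made up for this example.

```python
import json
import urllib.request

# Ollama's default local endpoint for one-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "gemma3") -> urllib.request.Request:
    """Build the HTTP request; "stream": False asks for a single JSON reply."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "gemma3") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `generate("Summarize this paragraph: ...")` returns the model's text without the prompt ever leaving the machine.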

But just serving these models isn't enough. Tools like ChatGPT offer many more user experiences, such as Canvas, web search, and voice-to-text, which keep people using their services. Even if the best models were available locally, people would still gravitate toward OpenAI, Claude, and others for the user experience. That's where products like Starlight come in. New-age software has to be as local-first as possible and give users the same experience they get on ChatGPT or similar platforms, using their own local AI models.

At this point, it is more about breaking a habit. Let's say I want to summarize a research paper. The default habit people have developed over the last three years is to upload the paper to ChatGPT and ask for a summary. But this is a very easy task for local models. So how can we push software to break this habit and exploit the use cases where local AI can outperform cloud AI? This is a big challenge, one that we at Starlight strive to solve by building features that make you gravitate toward local AI rather than cloud models for most of your tasks.

Innovating Within Constraints

At Starlight, we're embracing constraints rather than ignoring them. We're finding clever ways to make smaller models more effective through better indexing, retrieval, and context management.

For example, we've developed a hybrid approach that uses local embedding models to index documents and then employs efficient retrieval algorithms to find the most relevant content. This reduces the context window needed for generation, allowing even small local models to produce high-quality results on par with much larger cloud models. We are also implementing methods like recursive summarization and graph traversal to compress large amounts of information into the constrained context of local models. And it's not just about context length: even when a local model supports a long context, the hardware often cannot handle it, either because the context does not fit in memory or because the computation takes too long.
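The index-then-retrieve idea can be sketched in a few lines. This is not Starlight's implementation: the `embed` function below is a toy bag-of-words stand-in for a real local embedding model, and the word budget is a crude proxy for a token-based context limit. It only shows the shape of the approach, which is to embed chunks once, rank them against the query, and keep what fits in a small context.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for a local embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(chunks):
    """Embed every chunk once, up front."""
    return [(chunk, embed(chunk)) for chunk in chunks]


def retrieve(index, query, max_words=40):
    """Return the most relevant chunks that fit in a small context budget."""
    q = embed(query)
    scored = [(cosine(q, vec), chunk) for chunk, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    selected, used = [], 0
    for score, chunk in scored:
        words = len(chunk.split())
        if score > 0 and used + words <= max_words:
            selected.append(chunk)
            used += words
    return selected


chunks = [
    "Invoices for 2023 are stored in the finance folder.",
    "The quarterly report covers revenue and operating costs.",
    "Meeting notes: discuss hiring plan for the design team.",
]
index = build_index(chunks)
context = retrieve(index, "Where is the revenue report?", max_words=8)
print(context)
```

Only the selected chunks are passed to the generation model, so the prompt stays small regardless of how large the document collection grows.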

This approach forces us to be more thoughtful about how we use AI. Rather than throwing compute at every problem, we ask: What's the most efficient way to solve this? How can we deliver value while minimizing resource use?

A More Balanced Future: The Hybrid Approach

I'm not suggesting we abandon cloud AI entirely—there are absolutely use cases where larger models are necessary. But by being intentional about when and how we use these resources, we can create a more balanced, sustainable approach to AI.

Imagine a world where:

- Your personal documents, emails, and notes are processed entirely on your devices, maintaining your privacy

- Basic creative tasks like drafting emails or summarizing articles happen locally, with instant response times

- Only specialized tasks that truly require massive models—like complex code generation or scientific research—call out to cloud services

- Organizations maintain their own small, specialized models trained on their data, running on local infrastructure

This isn't science fiction—all the technology exists today. What's missing is the mindset shift.
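A minimal sketch of what such a local-first routing policy might look like is shown below. The task labels, the `Task` type, and the rule ordering are all hypothetical and chosen for illustration. A real product would need a much richer notion of task difficulty.

```python
from dataclasses import dataclass

# Hypothetical labels for tasks that a small local model handles well.
LOCAL_TASKS = {"summarize", "draft_email", "search_notes"}


@dataclass
class Task:
    kind: str
    contains_private_data: bool = False


def choose_backend(task: Task) -> str:
    """Prefer local; use the cloud only for non-private, heavyweight tasks."""
    if task.contains_private_data:
        return "local"  # private data never leaves the device
    if task.kind in LOCAL_TASKS:
        return "local"  # everyday tasks fit a small model
    return "cloud"      # e.g. complex code generation or research


print(choose_backend(Task("summarize")))
print(choose_backend(Task("complex_codegen")))
print(choose_backend(Task("complex_codegen", contains_private_data=True)))
```

The ordering of the checks encodes the priority: privacy comes first, then task fit, and the cloud is the fallback rather than the default.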

Taking Action

So what can you do to be part of this shift?

- Try local-first AI tools like Starlight for your personal and professional needs

- Be mindful of your API usage when you do use cloud services

- Support open-source AI projects that are making models more accessible and efficient

The future isn't about unlimited compute—it's about smart compute. It's about knowing when a local model is sufficient and when you truly need something more powerful.

So next time you're about to send that API call, ask yourself: Could this happen locally instead? Your privacy, your wallet, and our planet might thank you for it.
