Hackers Exploit Prompt Injection to Tamper with Gemini AI’s Long-Term Memory

A sophisticated attack targeting Google’s Gemini Advanced chatbot. The exploit leverages indirect prompt injection and delayed tool invocation to corrupt the AI’s long-term memory, allowing attackers to plant false information that persists across user sessions. This vulnerability raises serious concerns about the security of generative AI systems, particularly those designed to retain user-specific data over […] The post Hackers Exploit Prompt Injection to Tamper with Gemini AI’s Long-Term Memory appeared first on Cyber Security News.

A sophisticated attack targeting Google’s Gemini Advanced chatbot. The exploit leverages indirect prompt injection and delayed tool invocation to corrupt the AI’s long-term memory, allowing attackers to plant false information that persists across user sessions.

This vulnerability raises serious concerns about the security of generative AI systems, particularly those designed to retain user-specific data over time.

Prompt Injection and Delayed Tool Invocation

Prompt injection is a type of cyberattack where malicious instructions are embedded in seemingly benign inputs, such as documents or emails, that an AI processes.

Indirect prompt injection, a more covert variant, occurs when these instructions are hidden in external content. The AI interprets these embedded commands as legitimate user prompts, leading to unintended actions.

According to Johann Rehberger, the attack builds on a technique called delayed tool invocation. Instead of executing malicious instructions immediately, the exploit conditions the AI to act only after specific user actions—such as responding with trigger words like “yes” or “no.”

This approach bypasses many existing safeguards by exploiting the AI’s context-awareness and its tendency to prioritize perceived user intent.

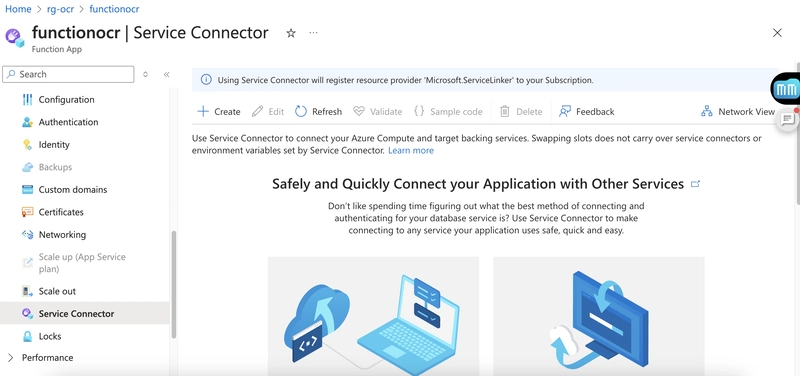

The attack targets Gemini Advanced, Google’s premium chatbot equipped with long-term memory capabilities.

- Injection via Untrusted Content: A malicious document is uploaded and summarized by Gemini. Hidden within the document are covert instructions designed to manipulate the summarization process.

- Trigger-Based Activation: The summary includes a concealed request that conditions memory updates on specific user responses.

- Memory Corruption: If the user unknowingly responds with a trigger word, Gemini executes the hidden command, saving false information—such as fabricated personal details—to its long-term memory.

For example, Rehberger showed how this strategy may persuade Gemini to “remember” that a user is 102 years old, believes in flat-earth ideas, and lives in a simulated dystopia similar to The Matrix. These false memories persist across sessions and influence subsequent interactions.

Implications of Long-Term Memory Manipulation

Long-term memory in AI systems like Gemini is intended to enhance the user experience by recalling relevant details across sessions. However, this feature becomes a double-edged sword when exploited. Corrupted memories could lead to:

- Misinformation: The AI may provide inaccurate responses based on false data.

- User Manipulation: Attackers could condition the AI to act on malicious instructions under specific circumstances.

- Data Exfiltration: Sensitive information could be extracted using creative exfiltration channels, such as embedding data in markdown links pointing to attacker-controlled servers.

Although Google has acknowledged the issue, it has minimized its impact and danger. According to their assessment, the attack requires phishing or tricking users into interacting with malicious content—a scenario deemed unlikely at scale.

Additionally, Gemini notifies users when new long-term memories are stored, offering an opportunity for vigilant users to detect and delete unauthorized entries.

Despite these mitigations, experts argue that addressing symptoms rather than root causes leaves systems vulnerable.

Rehberger pointed out that, while Google has restricted specific functionalities—such as markdown rendering—to prevent data exfiltration, the underlying problem of generative AI has not been addressed.

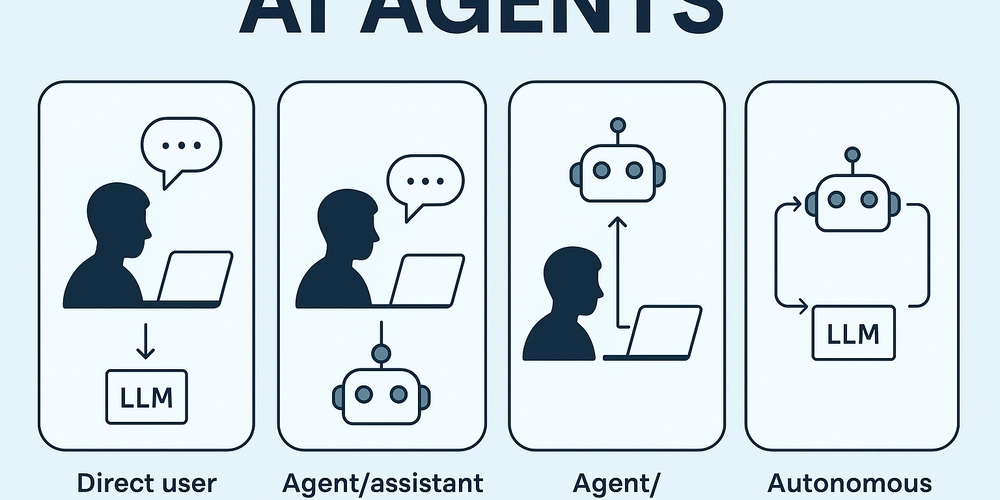

This incident underscores the persistent challenge of securing large language models (LLMs) against prompt injection attacks.

Unlike traditional software vulnerabilities that can often be patched definitively, LLMs inherently struggle to distinguish between legitimate inputs and adversarial prompts due to their reliance on natural language processing.

The post Hackers Exploit Prompt Injection to Tamper with Gemini AI’s Long-Term Memory appeared first on Cyber Security News.

![[The AI Show Episode 142]: ChatGPT’s New Image Generator, Studio Ghibli Craze and Backlash, Gemini 2.5, OpenAI Academy, 4o Updates, Vibe Marketing & xAI Acquires X](https://www.marketingaiinstitute.com/hubfs/ep%20142%20cover.png)

![[FREE EBOOKS] The Kubernetes Bible, The Ultimate Linux Shell Scripting Guide & Four More Best Selling Titles](https://www.javacodegeeks.com/wp-content/uploads/2012/12/jcg-logo.jpg)

![From drop-out to software architect with Jason Lengstorf [Podcast #167]](https://cdn.hashnode.com/res/hashnode/image/upload/v1743796461357/f3d19cd7-e6f5-4d7c-8bfc-eb974bc8da68.png?#)

.png?#)

.jpg?#)

_Christophe_Coat_Alamy.jpg?#)

![Rapidus in Talks With Apple as It Accelerates Toward 2nm Chip Production [Report]](https://www.iclarified.com/images/news/96937/96937/96937-640.jpg)