AI Models Learn When to Skip Image Processing, Cutting Computation by 30% Without Performance Loss

This is a Plain English Papers summary of a research paper called "AI Models Learn When to Skip Image Processing, Cutting Computation by 30% Without Performance Loss". If you like this kind of analysis, you should join AImodels.fyi or follow us on Twitter.

Overview

- MLLMs struggle with computational efficiency during multimodal processing

- New adaptive inference approach dynamically adjusts computation based on task needs

- Introduces a Pseudo-Q learning framework that learns when to skip image processing

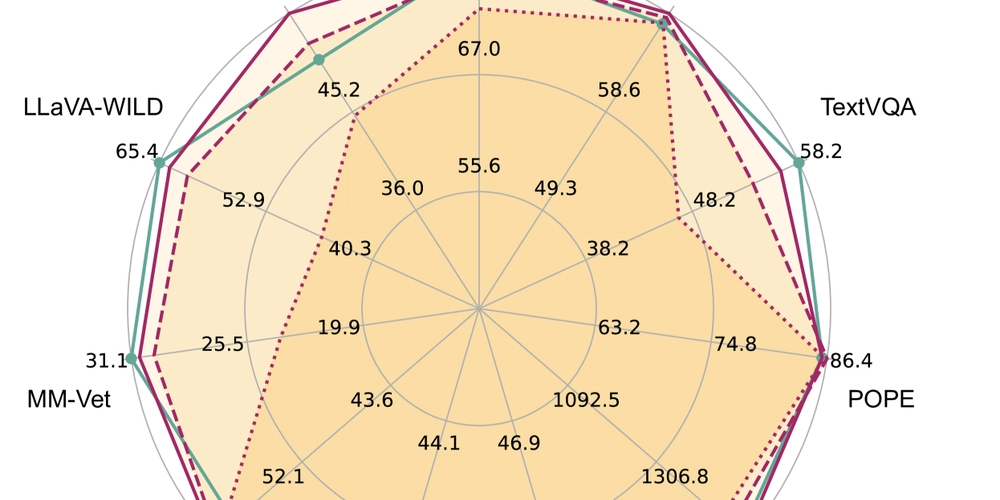

- Achieves 20-30% acceleration with minimal performance impact on visual tasks

- Context-aware tokens help the model decide when to engage visual processing

- Outperforms other efficiency methods on benchmarks
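The skip decision described in the overview can be pictured with a toy sketch. This summary does not spell out the paper's actual mechanism, so everything below is an illustrative assumption rather than the authors' method: the `LayerEstimate` type, the `choose_actions` and `relative_cost` helpers, the per-layer value estimates, and the `visual_share` cost figure are all hypothetical. The sketch only shows the general idea of comparing an estimated value for "process" against one for "skip", and then tallying how much computation the skips would save.

```python
from dataclasses import dataclass


@dataclass
class LayerEstimate:
    """Hypothetical per-layer value estimates (not taken from the paper)."""
    q_process: float  # assumed estimated value of running visual processing
    q_skip: float     # assumed estimated value of skipping it


def choose_actions(estimates):
    """Pick 'skip' for a layer only when its skip estimate is strictly higher."""
    return ["skip" if e.q_skip > e.q_process else "process" for e in estimates]


def relative_cost(actions, visual_share=0.5):
    """Fraction of full compute used.

    Assumes every layer costs one unit and that visual processing accounts
    for `visual_share` of that unit (an invented number for illustration).
    """
    if not actions:
        raise ValueError("actions must not be empty")
    used = sum(1.0 - visual_share if a == "skip" else 1.0 for a in actions)
    return used / len(actions)


# Made-up estimates for a four-layer toy model.
estimates = [
    LayerEstimate(q_process=0.9, q_skip=0.2),
    LayerEstimate(q_process=0.4, q_skip=0.7),
    LayerEstimate(q_process=0.3, q_skip=0.8),
    LayerEstimate(q_process=0.6, q_skip=0.5),
]
actions = choose_actions(estimates)
cost = relative_cost(actions)
print(actions, cost)
```

With these invented numbers, two of the four layers skip visual processing and the toy model uses 75% of its full compute. The figures are not a reproduction of the paper's reported 20-30% acceleration; they only show how per-step skip decisions translate into a compute saving.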

Plain English Explanation

Today's multimodal AI models—those that work with both text and images—are incredibly powerful but face a significant problem: they're computationally expensive. These multimodal large language models p...
