The Misleading "Best AI" Narrative


The AI Benchmarking Trap: Why No Single Test Defines "Better"

In the ongoing race to crown the "best" large language model (LLM), discussions on platforms like YouTube and LinkedIn are rife with grandiose claims, often made without data or context. The reality is that no single benchmark can establish an LLM’s superiority across all tasks. Comparing models without considering their design intent is an apples-to-oranges mistake that leads to misleading conclusions. A conversational AI optimized for natural interaction will behave, and score, differently from a model fine-tuned for logical reasoning or programming. Understanding what each benchmark measures is key to making informed evaluations.

The Many Facets of LLM Performance

Different LLMs excel in different areas, and benchmarks exist to measure these strengths. Some of the most commonly cited include:

MMLU (Massive Multitask Language Understanding) – Assesses broad general knowledge across 57 subjects, from STEM to humanities.

GSM8K (Grade School Math 8K) – Evaluates mathematical reasoning using word problems.

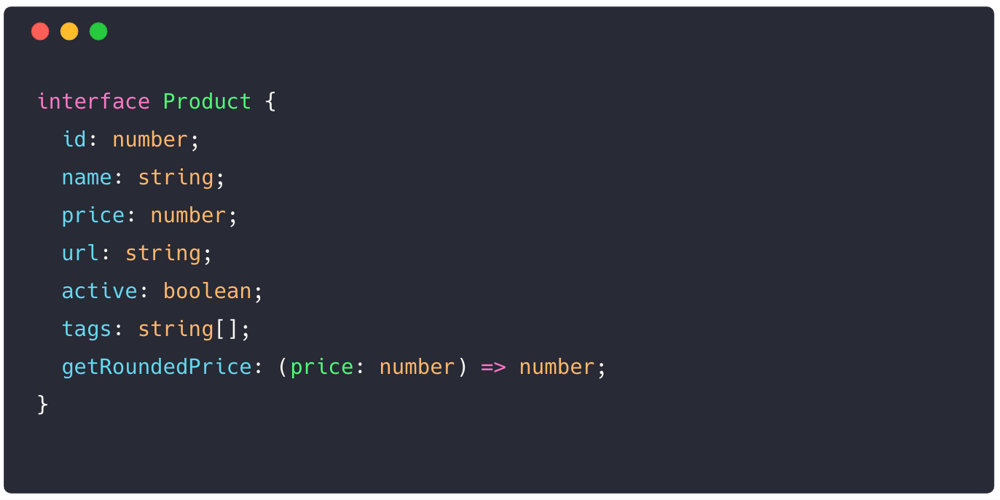

HumanEval – Tests coding ability by checking whether the code a model generates for a set of programming problems passes each problem’s unit tests.

BIG-bench (Beyond the Imitation Game) – Measures creativity, reasoning, and world knowledge across diverse tasks.

HellaSwag – Tests commonsense reasoning by asking models to pick the most plausible continuation of a short scenario.
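To make the HumanEval entry above more concrete: its results are commonly reported as pass@k, the estimated chance that at least one of k sampled solutions passes the unit tests. The sketch below computes the usual unbiased estimate for a single problem, 1 - C(n-c, k) / C(n, k), where n samples were generated and c of them passed. The function name and the example numbers are illustrative assumptions, not taken from any particular evaluation harness.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one problem.

    n: number of solutions sampled from the model
    c: number of those solutions that passed the unit tests
    k: number of attempts the metric allows
    """
    if not (0 <= c <= n) or not (1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k failing samples: every draw of k contains a passing one.
        return 1.0
    # 1 minus the probability that all k drawn samples are failures.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical numbers: 10 samples for a problem, 3 of which passed.
single_attempt = pass_at_k(n=10, c=3, k=1)
five_attempts = pass_at_k(n=10, c=3, k=5)
```

A benchmark score is then the average of this value over all problems, which is why the same model can look quite different at pass@1 and pass@10.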

Beyond these, more specialized tests evaluate truthfulness, conversational fluency, and human preference alignment:

TruthfulQA – Examines how often an AI generates factually correct answers rather than perpetuating common misconceptions.

MT-Bench – Assesses AI assistants' conversational quality and helpfulness in chat-based scenarios.

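AlpacaEval – Uses an LLM-based automatic judge, designed to approximate human preferences, to compare a model’s responses against those of a reference model.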

Arena Benchmarks – Compare LLMs head-to-head, for example by having people vote on which of two anonymous responses they prefer.
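Head-to-head results like those in the last entry have to be turned into a ranking somehow. One common choice for pairwise comparisons is an Elo-style rating, sketched below. Whether a given arena uses exactly this scheme is an assumption here, and the model names, starting ratings, K-factor, and votes are all hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_ratings(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return the new (A, B) ratings after one comparison.

    score_a is 1.0 if A was preferred, 0.0 if B was, and 0.5 for a tie.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    # Whatever A gains, B loses, so the total rating is conserved.
    return rating_a + delta, rating_b - delta


# Hypothetical votes: (first model, second model, score for the first model).
votes = [
    ("chat_model", "math_model", 1.0),
    ("chat_model", "math_model", 1.0),
    ("math_model", "chat_model", 0.5),
]

ratings = {"chat_model": 1000.0, "math_model": 1000.0}
for first, second, score_first in votes:
    ratings[first], ratings[second] = update_ratings(
        ratings[first], ratings[second], score_first
    )
```

Note that the resulting order reflects only the prompts people happened to vote on. A different mix of prompts (say, mostly math questions) could produce a different order from the same two models.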

Why One Benchmark Isn’t Enough

Each benchmark focuses on a different skill set. An AI that dominates GSM8K (math reasoning) may struggle in HellaSwag (commonsense prediction). Likewise, an LLM excelling at HumanEval (code generation) might not perform well in open-ended dialogue.

Consider a model designed primarily for conversational engagement—say, an AI customer service assistant. If we judge it solely on its ability to solve math problems, it might seem "inferior" to a reasoning-heavy model like GPT-4 Turbo. But that doesn’t mean it’s a bad model—it’s just optimized for a different use case.

One of the most common pitfalls in AI discourse is using selective benchmarks to claim an LLM is "better" without specifying in what context. If an LLM outperforms another on MMLU but lags in AlpacaEval, is it truly better overall? The answer depends on the application.

A conversational AI designed for human-like interaction should be evaluated on conversational benchmarks. A research-oriented model, on the other hand, should be assessed on knowledge recall and reasoning-based metrics. Grand claims about AI dominance without specifying the task at hand mislead rather than inform.
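A small sketch of why "better" depends on the application: the same two score sheets produce different winners once each benchmark is weighted by how much it matters for the task. All model names, scores (normalized here to a 0 to 1 range), and weights below are made up for illustration and do not describe any real model.

```python
# Hypothetical, normalized benchmark scores for two imaginary models.
scores = {
    "model_a": {"MMLU": 0.82, "GSM8K": 0.90, "MT-Bench": 0.70},
    "model_b": {"MMLU": 0.78, "GSM8K": 0.70, "MT-Bench": 0.88},
}

# Hypothetical weights expressing what each application cares about.
profiles = {
    "chat_assistant": {"MT-Bench": 0.7, "MMLU": 0.3},
    "math_tutor": {"GSM8K": 0.7, "MMLU": 0.3},
}


def weighted_score(model: str, weights: dict) -> float:
    """Weighted average of a model's scores over the benchmarks in weights."""
    total_weight = sum(weights.values())
    return sum(w * scores[model][bench] for bench, w in weights.items()) / total_weight


def best_model(weights: dict) -> str:
    """Name of the model with the highest weighted score for this profile."""
    return max(scores, key=lambda model: weighted_score(model, weights))


for name, weights in profiles.items():
    print(name, "->", best_model(weights))
```

With these invented numbers, model_b comes out ahead for the chat assistant profile and model_a for the math tutor profile, even though neither score sheet changed. The weights are a judgment call, which is exactly why a claim of "better" needs its context stated.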

A Smarter Way to Compare AI

To truly assess an LLM’s effectiveness, we must:

Use multiple benchmarks rather than cherry-picking results.

Consider task specialization—a chatbot and a math solver have different goals.

Look beyond raw scores and evaluate real-world utility.

Incorporate human feedback, as numbers alone don’t always capture subjective quality.

In the rush to compare LLMs, we must avoid the trap of oversimplification. No single benchmark can define "better," and real-world AI use cases require a nuanced approach.

Before declaring one AI model the winner, let’s first ask: Better at what?

Ben Santora - March 2025

