Building a High-Performance Web Scraper with Python

Introduction

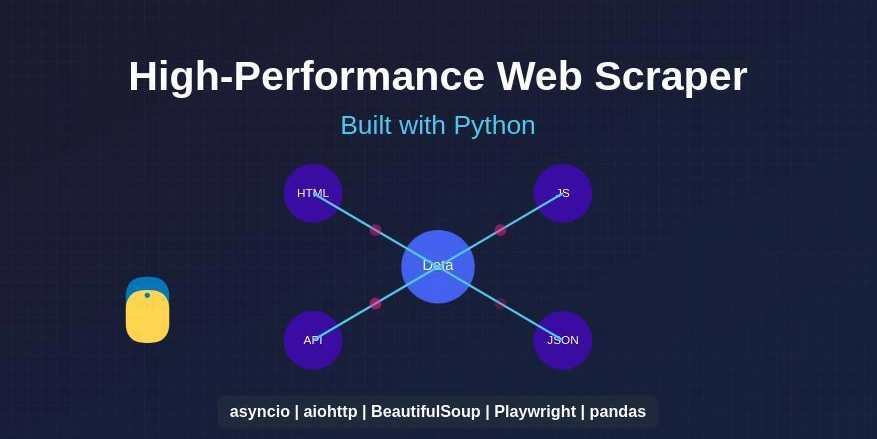

This article explores the architecture and implementation of a high-performance web scraper built to extract product data from e-commerce platforms. The scraper uses multiple Python libraries and techniques to efficiently process thousands of products while maintaining resilience against common scraping challenges.

Technical Architecture

The scraper is built on a fully asynchronous foundation using Python's asyncio ecosystem, with these key components:

- Network Layer: aiohttp for async HTTP requests with connection pooling

- DOM Processing: BeautifulSoup4 for HTML parsing

- Dynamic Content: Playwright for JavaScript-rendered content extraction

- Data Processing: pandas for data manipulation and export

Implementation Highlights

Concurrency Management

The scraper implements a worker pool pattern with configurable concurrency limits:

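    # Concurrency settings, read from the environment.
    # The fallback values are illustrative; without a fallback, int(None)
    # raises TypeError when a variable is unset.
    self.max_workers = int(os.getenv('MAX_WORKERS', '10'))
    self.max_connections = int(os.getenv('MAX_CONNECTIONS', '20'))

    # TCP connection pooling
    connector = aiohttp.TCPConnector(
        limit=self.max_connections,
        resolver=resolver  # Custom DNS resolver
    )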

Capping the number of open connections keeps the scraper from overwhelming the target server, while the worker limit keeps enough requests in flight to maintain throughput.
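The worker limit itself is not shown in the article. One common way to enforce it is an asyncio.Semaphore, sketched below. The names run_bounded and fake_fetch are hypothetical, and fake_fetch stands in for a real HTTP request; this is an illustration of the pattern, not the scraper's actual code.

```python
import asyncio


async def run_bounded(items, worker, max_workers):
    """Run worker(item) for every item, with at most max_workers running at once."""
    semaphore = asyncio.Semaphore(max_workers)

    async def guarded(item):
        # Each task waits here until one of the max_workers slots is free.
        async with semaphore:
            return await worker(item)

    # gather returns results in the same order as the inputs.
    return await asyncio.gather(*(guarded(item) for item in items))


async def demo():
    active = 0
    peak = 0

    async def fake_fetch(n):
        # Stand-in for a real request; records how many calls overlap.
        nonlocal active, peak
        active += 1
        peak = max(peak, active)
        await asyncio.sleep(0.01)
        active -= 1
        return n * 2

    results = await run_bounded(range(10), fake_fetch, max_workers=3)
    return results, peak
```

Calling asyncio.run(demo()) returns the doubled inputs along with the highest number of overlapping calls observed, which never exceeds max_workers.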

Resilient Network Requests

The network layer retries failed requests with exponential backoff and honors the Retry-After header on HTTP 429 responses:

    import asyncio

    import aiohttp

    async def fetch_url(self, session, url):
        retries = 0
        while retries < self.max_retries:
            try:
                headers = {
                    'User-Agent': self.user_agent.random,
                    # Additional headers omitted for brevity
                }
                async with session.get(url, headers=headers, timeout=self.request_timeout) as response:
                    if response.status == 200:
                        return await response.read()
                    elif response.status == 429:
                        # Rate limit handling: wait for Retry-After if the server sent it
                        retry_after = int(response.headers.get(
                            'Retry-After', self.retry_backoff ** (retries + 2)))
                        await asyncio.sleep(retry_after)
                # Any non-200 response: retry with exponential backoff
                retries += 1
                wait_time = self.retry_backoff ** (retries + 1)
                await asyncio.sleep(wait_time)
            except (asyncio.TimeoutError, aiohttp.ClientError) as e:
                logger.warning(f"Network error: {e}")
                retries += 1
        # All retries exhausted
        return None
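One detail worth handling when computing the wait time: Retry-After may carry either a number of seconds or an HTTP date, and int() fails on the latter. The helper below is a sketch of a more defensive calculation. The name retry_delay, the max_delay cap, and the default backoff of 2.0 are assumptions for illustration and do not come from the scraper.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def retry_delay(retry_after, attempt, backoff=2.0, max_delay=60.0):
    """Return the number of seconds to wait before the next attempt.

    retry_after: raw Retry-After header value, or None if absent.
    attempt: zero-based count of attempts already made.
    """
    # Exponential backoff, capped so a long retry chain cannot stall forever.
    fallback = min(backoff ** (attempt + 1), max_delay)
    if retry_after is None:
        return fallback
    value = retry_after.strip()
    if value.isdigit():
        # Delta-seconds form, e.g. "120"
        return min(float(value), max_delay)
    try:
        # HTTP-date form, e.g. "Wed, 21 Oct 2015 07:28:00 GMT"
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return fallback
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    seconds = (when - datetime.now(timezone.utc)).total_seconds()
    return min(max(seconds, 0.0), max_delay)
```

With the defaults, retry_delay(None, 2) gives 8.0 seconds, and a date that has already passed gives 0.0.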

Hybrid Content Extraction

The scraper employs a two-phase extraction approach:

- Static HTML Parsing: Uses BeautifulSoup to extract readily available content

- Dynamic Content Extraction: Uses Playwright to handle JavaScript-rendered elements

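    import asyncio
    import concurrent.futures
    from functools import partial

    async def fetch_product(self, session, url, page):
        # Assumed step: download the raw HTML first. `content` was
        # undefined in the original excerpt.
        content = await self.fetch_url(session, url)
        if content is None:
            return None

        # Static content extraction, run in a worker thread
        with concurrent.futures.ThreadPoolExecutor() as executor:
            loop = asyncio.get_running_loop()
            product = await loop.run_in_executor(
                executor,
                partial(self.scrape_product_html, content, url)
            )

        # Dynamic content extraction
        image_url, description = await self.scrape_dynamic_content_playwright(page, url)
        product.image_url = image_url
        product.description = description
        return product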

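Static parsing handles everything available in the initial HTML quickly, and the slower browser step is used only for the fields that need JavaScript, here the image URL and description. This keeps the scraper fast without sacrificing completeness.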
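The offloading step can be tried in isolation. The sketch below swaps BeautifulSoup for the standard library's html.parser so it runs without extra dependencies; TitleParser, parse_title, and parse_in_thread are hypothetical names, and the title lookup is a placeholder for whatever scrape_product_html actually extracts.

```python
import asyncio
from concurrent.futures import ThreadPoolExecutor
from functools import partial
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collects the text found inside <title> elements."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self.in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data


def parse_title(html, url):
    # Blocking, synchronous parsing: the kind of work that gets offloaded.
    parser = TitleParser()
    parser.feed(html)
    return {"url": url, "title": parser.title.strip()}


async def parse_in_thread(executor, html, url):
    # run_in_executor returns an awaitable, so the event loop stays free
    # to service other coroutines while the thread parses.
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(executor, partial(parse_title, html, url))


async def demo():
    html = "<html><head><title> Example product </title></head></html>"
    with ThreadPoolExecutor(max_workers=2) as executor:
        return await parse_in_thread(executor, html, "https://example.com/p/1")
```

Sharing one executor across calls, as demo() does, avoids creating and tearing down a thread pool for every product.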

DNS Resilience

The scraper implements DNS fallbacks to handle potential DNS resolution issues:

    try:
        # Imported only to confirm that aiodns is installed
        import aiodns
        resolver = aiohttp.AsyncResolver(nameservers=["8.8.8.8", "1.1.1.1"])
    except ImportError:
        logger.warning("aiodns library not found. Falling back to default resolver.")
        resolver = None

Data Processing Pipeline

The scraper implements a thread-safe queue for handling scraped data:

    import csv
    import queue

    import pandas as pd

    # Thread-safe queue for results
    self.results_queue = queue.Queue()

    # Data processing
    def save_results_from_queue(self):
        products = []
        # Drain the queue without blocking
        while not self.results_queue.empty():
            try:
                products.append(self.results_queue.get_nowait())
            except queue.Empty:
                break
        if products:
            df = pd.DataFrame(products)
            # Save to CSV with proper encoding and escaping
            # (filename is not defined in this excerpt)
            df.to_csv(
                filename,
                index=False,
                encoding='utf-8-sig',
                escapechar='\\',
                quoting=csv.QUOTE_ALL
            )

Performance Optimizations

Several techniques are employed to maximize throughput:

- Batch Processing: Products are processed in configurable batches

- Random Delays: Randomized delays between requests make traffic look less mechanical and reduce the chance of detection

- Connection Pooling: TCP connection reuse reduces overhead

- ThreadPoolExecutor: Blocking HTML parsing is offloaded to worker threads so it does not stall the event loop

- Sampling: For large datasets, statistical sampling is used to estimate total counts
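The first two items in this list can be combined into one loop, sketched below. The function names and the batch_size, min_delay, and max_delay parameters are hypothetical, chosen for illustration; the article does not show how the scraper implements batching.

```python
import asyncio
import random


def batched(items, batch_size):
    """Yield consecutive slices of items, each at most batch_size long."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


async def process_in_batches(urls, handler, batch_size, min_delay, max_delay):
    """Run handler(url) batch by batch, pausing a random interval after each batch."""
    results = []
    for batch in batched(urls, batch_size):
        # Items within a batch run concurrently; batches run one after another.
        results.extend(await asyncio.gather(*(handler(u) for u in batch)))
        # A random pause keeps requests from arriving at a fixed rhythm.
        await asyncio.sleep(random.uniform(min_delay, max_delay))
    return results
```

Smaller batches with longer delays are gentler on the target site; larger batches with shorter delays favor throughput.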

Error Handling and Reliability

The scraper implements comprehensive error handling:

    try:
        # Scraping logic
        ...
    except Exception as e:
        logger.error(f"Error in scrape_all_products: {e}")
        # Save any results in queue before exiting
        self.save_results_from_queue()
        raise

If scraping raises an exception, the results already in the queue are written to disk before the error propagates, so a failed run still yields partial data.

Conclusion

The architecture outlined here demonstrates how to build a high-performance web scraper that balances speed, reliability, and target server courtesy. By leveraging asynchronous programming, connection pooling, and hybrid content extraction techniques, the scraper can efficiently process thousands of products while maintaining resilience against common scraping challenges.

Key takeaways:

- Asynchronous programming is essential for high-performance web scraping

- Hybrid static/dynamic extraction maximizes data completeness

- Proper error handling and resilience mechanisms are crucial for production use

- Configurable parameters allow for fine-tuning based on target site characteristics
